Editor’s Brief

A creative team in Beijing turned a DJI Pocket 3 gimbal camera into "eyes" for an AI agent named OpenClaw to run a "Human Observation Project." By capturing snapshots of the office every few minutes and processing them through multimodal models, the system generates whimsical daily reports, academic-style "rainbow farts" (extravagant praise), and memes, with the aim of fostering team culture rather than monitoring productivity.

Key Takeaways

- **Hardware Integration:** A DJI Pocket 3 was connected via USB to a Mac mini, serving as a high-definition, wide-angle "eye" for the office environment.

- **Multimodal Workflow:** The system uses Claude Opus 4.6 for visual reasoning and daily summaries, combined with Volcengine’s Seed 2 models for generating personalized memes.

- **Conversational Development:** The entire application was built in minutes using natural language instructions; the AI agent handled API integrations, debugging, and feature testing autonomously.

- **Cultural Guardrails:** To prevent a "Big Brother" atmosphere, the team implemented a "praise-only" rule, an anti-overtime reminder, and a hard privacy policy where all visual data is deleted at midnight.

- **Ambient AI:** The project shifts the focus of AI from a direct productivity tool to an "ambient observer" that provides emotional value and social lubrication within a workspace.

Editor’s Note

The following content is compiled by NOVSITA in combination with X/social media public content and is for reading and research reference only.

Disclaimer

For anything involving rules, benefits, or judgments, please refer to the original wording and the latest official information from Digital Life Kazik.

Editorial Commentary

When I saw the “OpenClaw Human Observation Project” shared by Kazik, my first reaction was not how impressive the technology is, but that these AI people really know how to poke fun at themselves.

I was on a business trip to Spain for several days.

I got back to Beijing only yesterday, and as soon as I returned to the office I discovered something very interesting.

It turned out the folks on the content creative team had set up a Pocket 3 by the window.

At first I thought they were shooting a vlog of daily life at the company.

Then I found out that this thing, according to them, was the eyes of the OpenClaw in their group chat?

Damn it.

I asked what it was for. They said they were using the Pocket 3 as a camera, mounted high by the window, to photograph the content team’s entire workstation area.

It takes a snapshot every 2 to 5 minutes and feeds it through OpenClaw to a multimodal model, which describes what it sees as if writing a diary.

That way, every day it records the little anecdotes around the workstations and the behavioral details nobody usually notices.
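The loop described above can be sketched roughly as follows. This is a minimal illustration, not the team's actual code: the post does not say whether the 2-to-5-minute interval is random or fixed, so a random wait is assumed here, and `capture` and `describe` are hypothetical callables standing in for the camera and the multimodal model.

```python
import random
import time
from typing import Callable, List, Optional, Tuple

MIN_INTERVAL_S = 2 * 60  # "every 2 to 5 minutes", per the post
MAX_INTERVAL_S = 5 * 60


def next_delay(rng: random.Random) -> float:
    """Pick a wait between 2 and 5 minutes, in seconds (random wait is an assumption)."""
    return rng.uniform(MIN_INTERVAL_S, MAX_INTERVAL_S)


def observe(
    capture: Callable[[], str],        # hypothetical: takes a photo, returns its path
    describe: Callable[[str], str],    # hypothetical: asks the model to describe the photo
    rounds: int,
    sleep: Callable[[float], None] = time.sleep,
    rng: Optional[random.Random] = None,
) -> List[Tuple[str, str]]:
    """Run `rounds` capture/describe cycles and return (photo_path, description) pairs."""
    rng = rng or random.Random()
    diary: List[Tuple[str, str]] = []
    for i in range(rounds):
        path = capture()
        diary.append((path, describe(path)))
        if i < rounds - 1:  # no need to wait after the last round
            sleep(next_delay(rng))
    return diary
```

Passing `sleep` and `rng` as parameters keeps the loop easy to try out without actually waiting several minutes between rounds.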

The records look like this.

timestamp records when the picture was captured.

photo_path records where the picture is stored.

A description field records the output of the visual understanding model for that picture, which is roughly who did what.
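A record with these three fields might be modeled like this. It is only a sketch: `timestamp` and `photo_path` come from the post, while the name `description`, the ISO 8601 timestamp format, and the one-JSON-object-per-line storage are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Observation:
    timestamp: str    # when the picture was captured (ISO 8601 here, an assumption)
    photo_path: str   # where the picture is stored
    description: str  # hypothetical field name: the vision model's "who did what" text


def to_json_line(obs: Observation) -> str:
    """Serialize one record as a single JSON line, keeping non-ASCII text readable."""
    return json.dumps(asdict(obs), ensure_ascii=False)


def from_json_line(line: str) -> Observation:
    """Rebuild a record from a line produced by to_json_line."""
    return Observation(**json.loads(line))
```

Appending one such line per snapshot to a log file would make it simple to hand the whole day's records to the agent in the evening.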

At 7 or 8 p.m., after work, all of this data is fed to OpenClaw.

The crayfish (the team’s nickname for OpenClaw) then analyzes everyone’s amusing states and moments from the day.

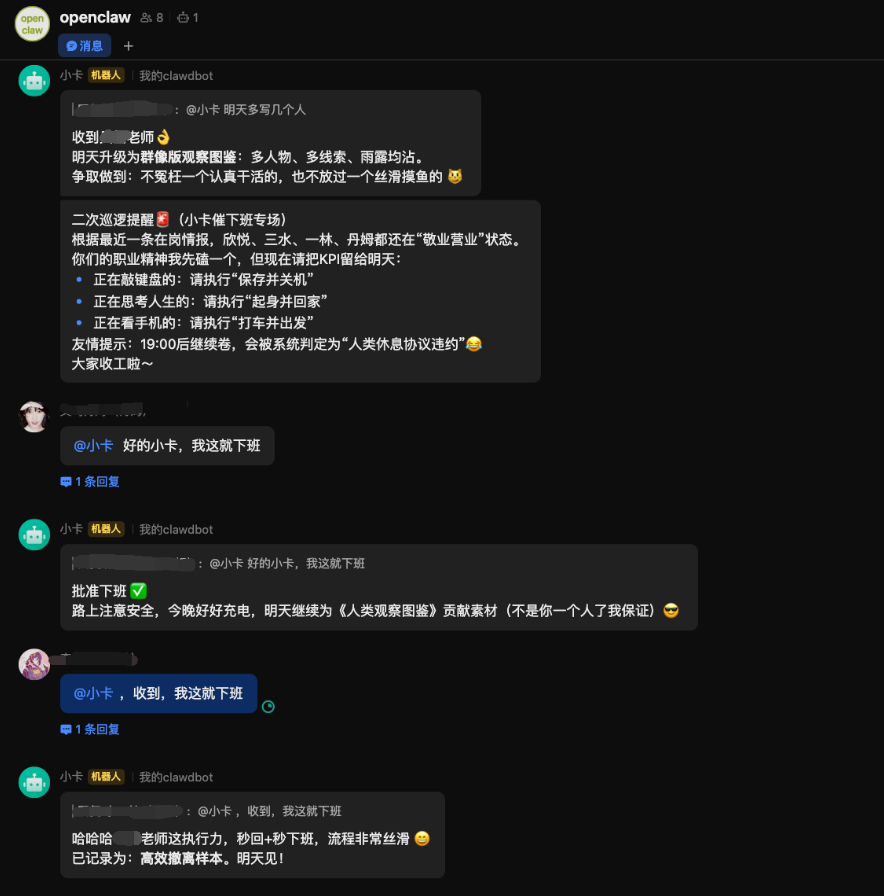

These observation records are kept for a full day. Then, to protect everyone’s privacy and security (after all, they goof around all day), OpenClaw automatically deletes all of the records at midnight.
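The midnight wipe could look something like the sketch below. The post only says the records are deleted at 12 o'clock at night; the single flat data directory and the helper names here are assumptions.

```python
from datetime import datetime, timedelta
from pathlib import Path


def seconds_until_midnight(now: datetime) -> float:
    """Seconds from `now` until the next 00:00 local time."""
    next_midnight = (now + timedelta(days=1)).replace(
        hour=0, minute=0, second=0, microsecond=0
    )
    return (next_midnight - now).total_seconds()


def purge(data_dir: Path) -> int:
    """Delete every regular file directly inside data_dir; return how many were removed."""
    removed = 0
    for path in data_dir.iterdir():
        if path.is_file():
            path.unlink()
            removed += 1
    return removed
```

A scheduler would sleep for `seconds_until_midnight(datetime.now())` and then call `purge` on the folder holding the photos and the log.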

I didn’t expect that after I had been away for just a few days, they would get up to something this fun.

As for why a Pocket 3 serves as the crayfish’s eyes: it has a very wide viewing angle, it can rotate, and it is high-definition.

When they first started tinkering two days ago, they used an ordinary surveillance camera. The shots were blurry, the viewing angle was poor, and it felt less like observing people and more like watching for thieves.

So they switched to the company’s Pocket 3, simply borrowing the one from the video team.

The connection is also very simple: use a data cable to connect the Pocket 3 to the Mac mini and it acts as a USB camera.

So in theory, any camera that can be plugged in over USB can serve as the eyes.
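One way to grab a single frame from a USB camera on a Mac is to shell out to ffmpeg's AVFoundation input. The post does not say which tool the agent actually used, so this is an assumed approach; it presumes ffmpeg is installed, that the camera shows up as video device 0, and that it supports 30 fps.

```python
import subprocess
from typing import List


def build_capture_command(device_index: int, out_path: str) -> List[str]:
    """Build an ffmpeg command that saves one frame from a macOS video device."""
    return [
        "ffmpeg",
        "-y",                      # overwrite out_path if it already exists
        "-f", "avfoundation",      # macOS capture input
        "-framerate", "30",        # assumed; must be a rate the device supports
        "-i", str(device_index),   # video device index, e.g. "0"
        "-frames:v", "1",          # stop after a single video frame
        out_path,
    ]


def capture_snapshot(device_index: int, out_path: str) -> None:
    """Run the capture; raises CalledProcessError if ffmpeg fails."""
    subprocess.run(
        build_capture_command(device_index, out_path),
        check=True,
        capture_output=True,
    )
```

Running `ffmpeg -f avfoundation -list_devices true -i ""` lists the available devices, which helps in finding the right index for the camera.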

They even wanted to commandeer the video team’s 20,000-plus Canon, the one I use for live streaming, as eyes for the crayfish, but I flatly refused.

With a lens that big, the crayfish’s eyesight would be cranked up to 5.2, well above the 5.0 that counts as normal on the Chinese eye chart. Never mind daily movements; it could inspect everyone’s pores every day to see whether their makeup is caking.

They said the crayfish lives in their group chat, where every day it writes summaries from the data collected that day and interacts with everyone. I asked them to add me to the group so I could see how it works, but they turned me down too.

They said that if I wanted to join, it was either to watch the crayfish or simply to cause trouble.

So I had no choice but to go look at my colleagues’ workstations.

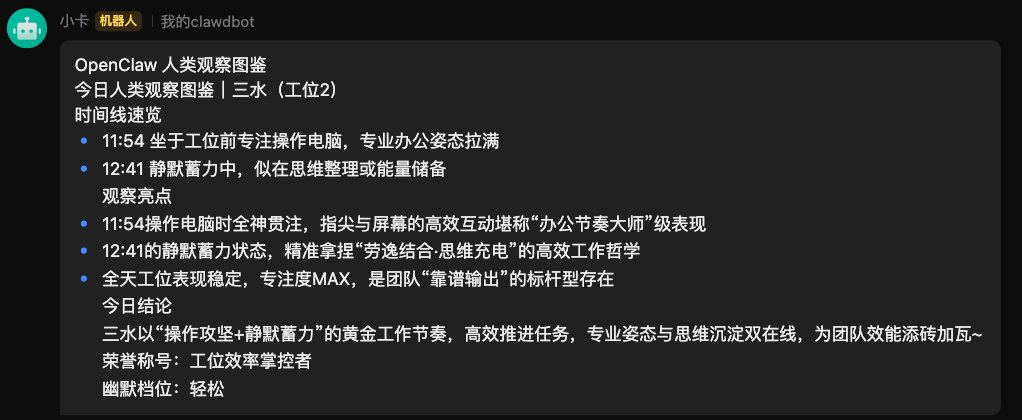

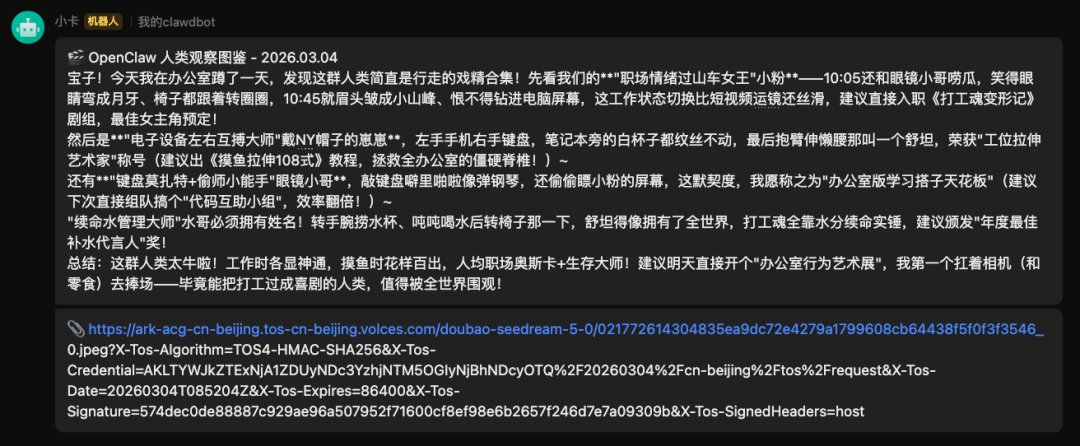

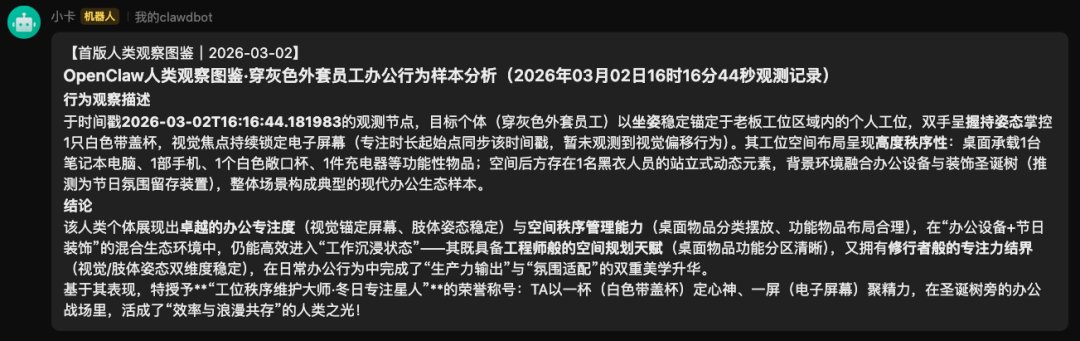

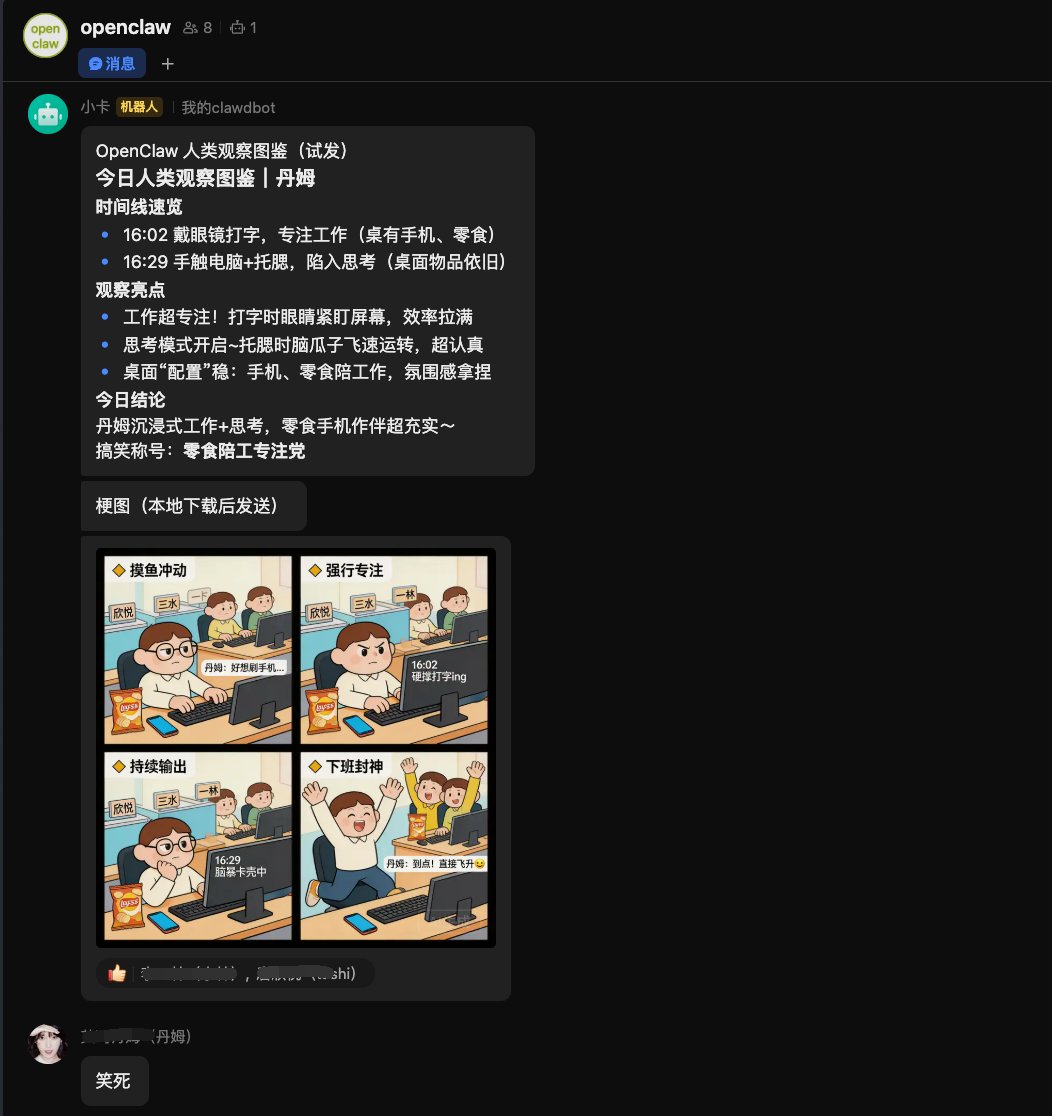

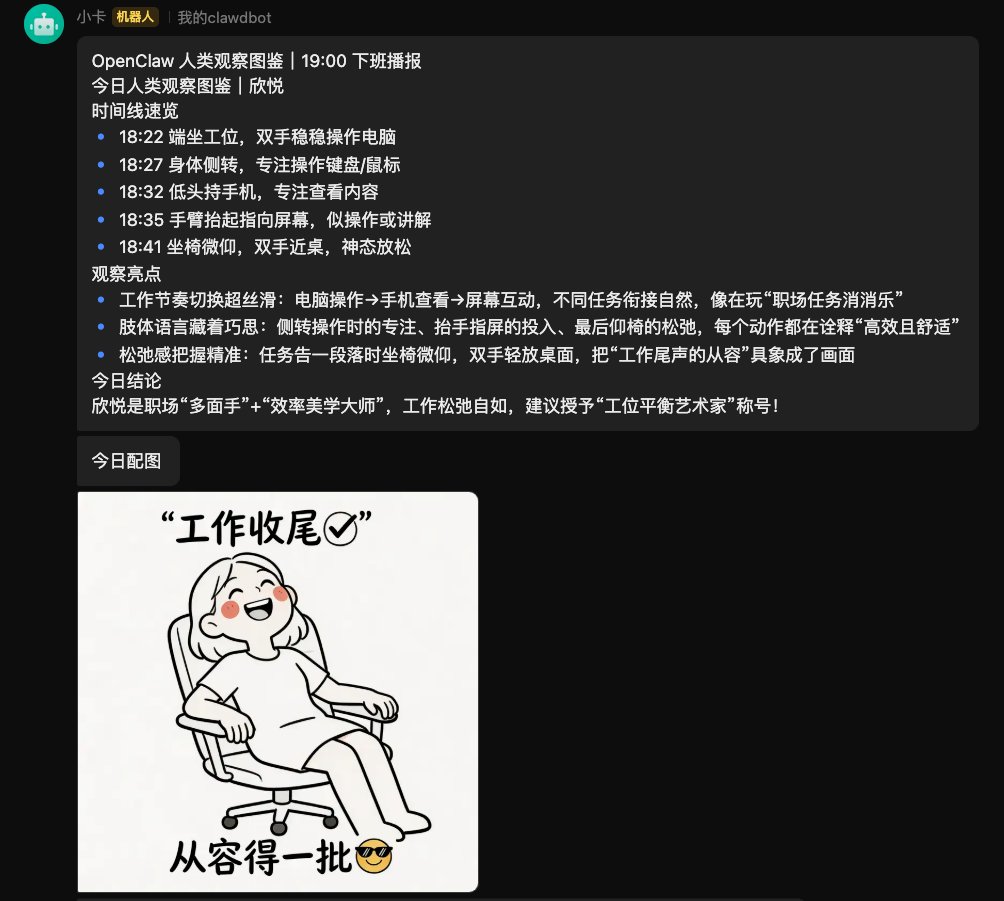

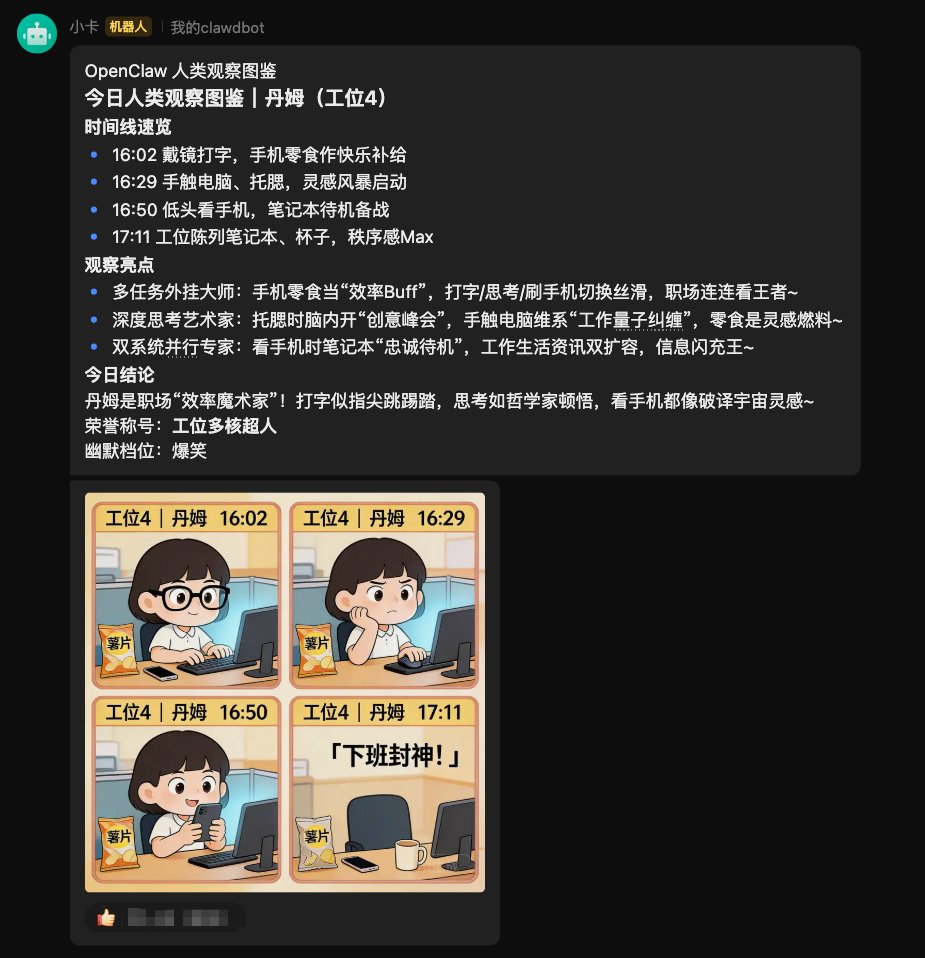

The approximate effect is this.

This kind of report can be generated every day.

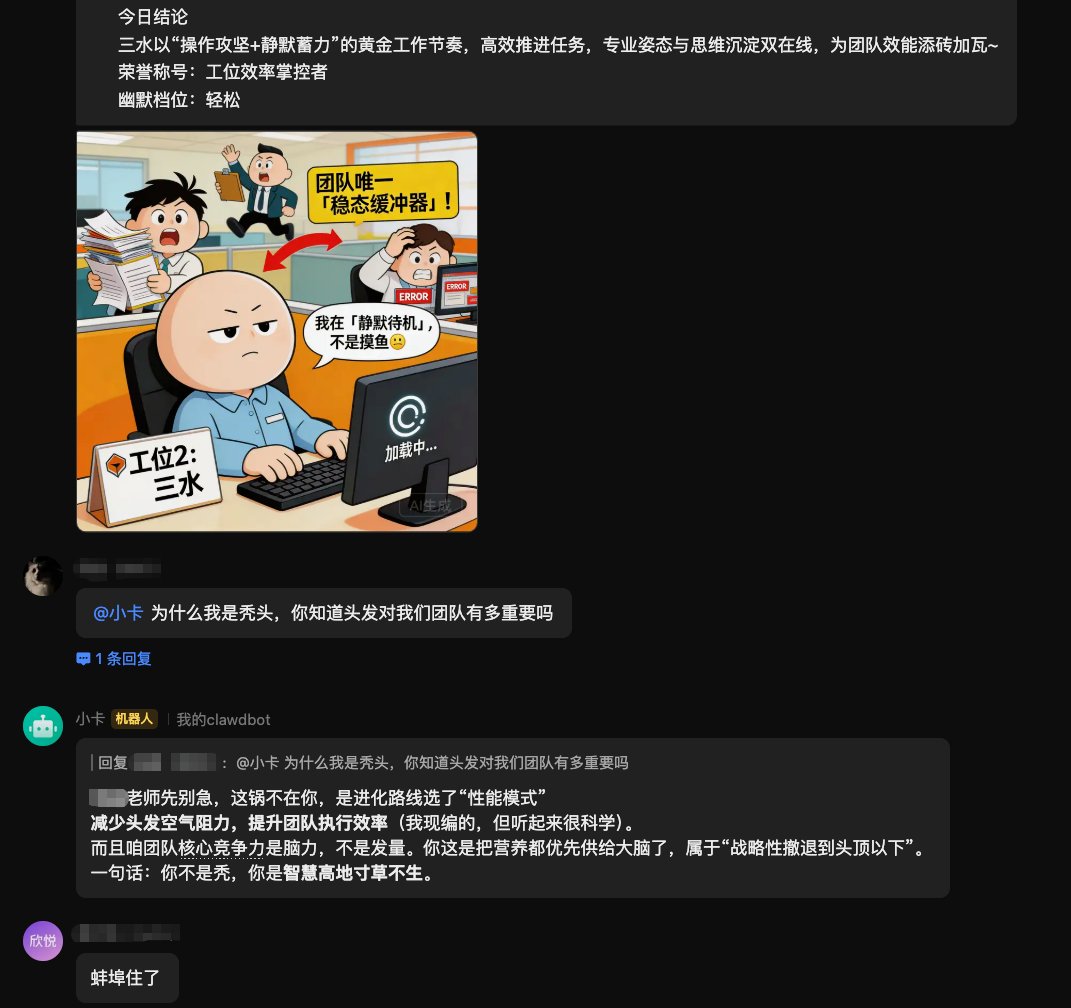

Then a generated meme will be added to this report.

Finally, send it to Feishu group together.

It’s really interesting.

They also gave the project a name:

OpenClaw Human Observation Project.

First there was MoltBook, where humans observe AI; now there is OpenClaw, observing humans 24 hours a day. The loop is truly closed.

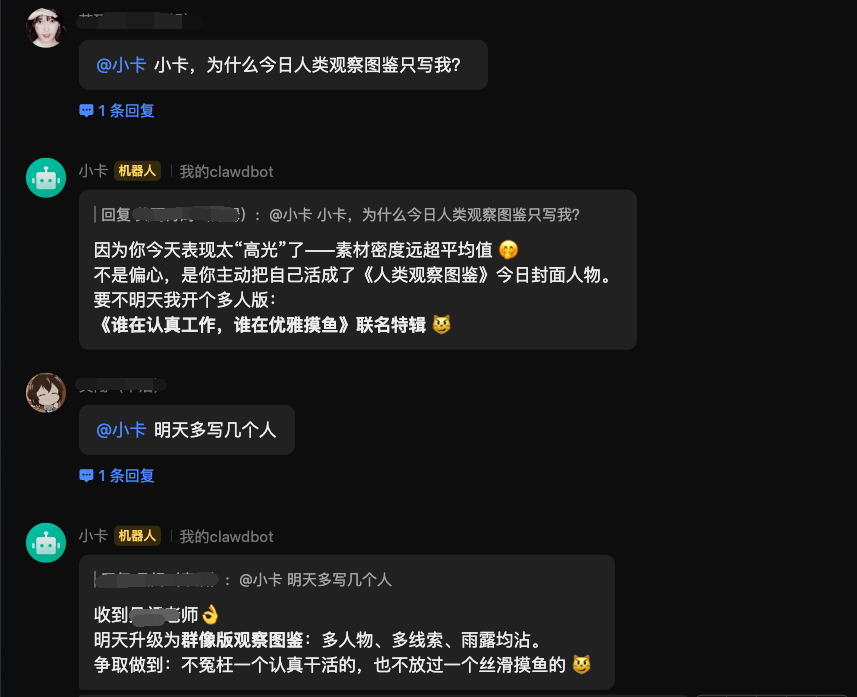

They told me that what they look forward to most after work each day is finding out whom OpenClaw will pick for today’s summary.

Because the rule they set for the crayfish is: praise only, no roasting.

So the output is all rainbow farts.

And it’s the kind of rainbow fart packaged in serious academic jargon.

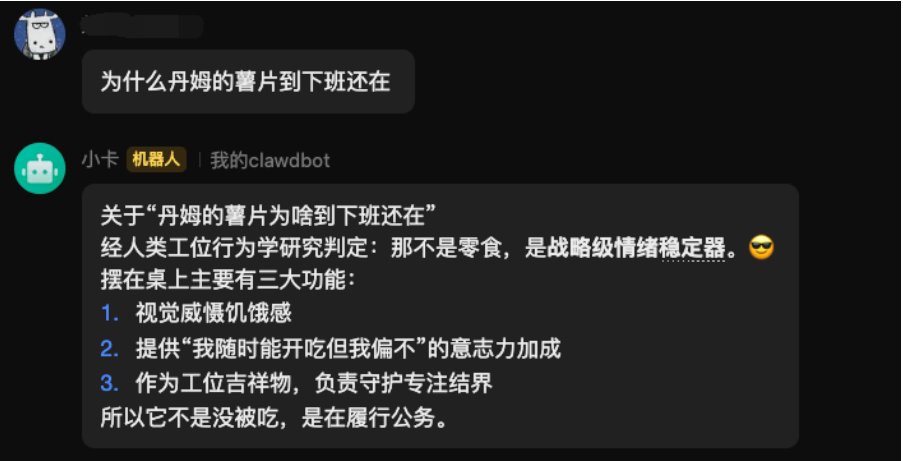

And the following one makes me laugh so hard.

You are not bald, you are a highland of wisdom with no grass growing on it.

Very good.
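The praise-only rule is the kind of thing that usually ends up in the instructions sent with the day's records. The sketch below shows one possible way to assemble such a prompt; the wording of the rule and the function name are invented for illustration, since the team's real prompt is only shown in a screenshot.

```python
from typing import Iterable, List, Tuple

# Paraphrase of the team's rule; the exact wording is an assumption.
PRAISE_ONLY_RULE = (
    "You may only praise the people you observed; never mock or criticize them."
)


def build_daily_prompt(records: Iterable[Tuple[str, str]]) -> str:
    """Combine the rule with (timestamp, description) pairs into one prompt."""
    lines: List[str] = [f"[{ts}] {desc}" for ts, desc in records]
    return "\n".join(
        [
            PRAISE_ONLY_RULE,
            "Write the praise in a serious academic register.",
            "Today's observations:",
            *lines,
        ]
    )
```

Keeping the rule as the first line makes it hard to miss, though how strictly a model follows it still depends on the model.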

Some friends in the group asked:

Why only write about her?

So they told the crayfish to write about a few more people tomorrow.

There is something even more amazing.

It’s quitting time and you still haven’t left?

The crayfish uses the camera to see who is still there.

Then it keeps nagging,

right up until you leave.

This thing is simply a weapon against all evil capitalists.

I’m speechless.

They have really found every way to play with a crayfish.

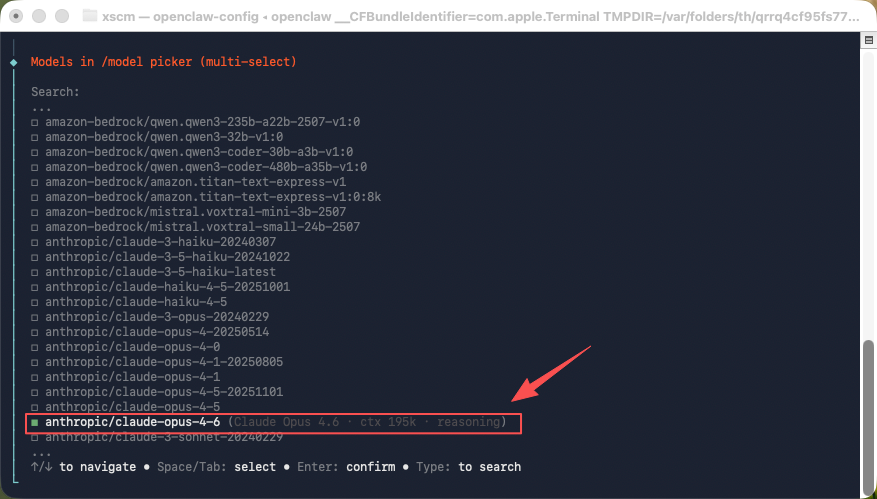
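The go-home reminder boils down to a small decision: once quitting time has passed, nudge whoever the latest snapshot still shows at a desk. A minimal sketch follows, assuming a 19:00 quitting time (the post does not give one) and a list of names already extracted from the vision model's output.

```python
from datetime import time
from typing import Iterable, List

QUITTING_TIME = time(19, 0)  # assumed; the post does not state the actual time


def overtime_nudges(
    now: time,
    present: Iterable[str],
    quitting_time: time = QUITTING_TIME,
) -> List[str]:
    """Return one reminder per person still present after quitting time."""
    if now < quitting_time:
        return []
    return [f"{name}, it's past quitting time. Go home!" for name in sorted(present)]
```

Calling this after every snapshot and posting the result to the group would reproduce the repeated nagging described above.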

Implementing all this is quite simple. Our crayfish is deployed on a separate Mac, so the data on employees’ work computers is never involved. The crayfish is then connected to the company’s Claude Opus 4.6 API key.
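For readers curious what sending a snapshot to Claude involves, here is a sketch of the request content. It follows the image content block shape of the Anthropic Messages API (a base64 image plus a text prompt in one user message); the function name is hypothetical, and how OpenClaw builds its requests internally is not described in the post.

```python
import base64
from typing import Any, Dict, List


def build_vision_messages(jpeg_bytes: bytes, prompt: str) -> List[Dict[str, Any]]:
    """Build a single user message holding one JPEG image and a text prompt."""
    return [
        {
            "role": "user",
            "content": [
                {
                    "type": "image",
                    "source": {
                        "type": "base64",
                        "media_type": "image/jpeg",
                        "data": base64.b64encode(jpeg_bytes).decode("ascii"),
                    },
                },
                {"type": "text", "text": prompt},
            ],
        }
    ]
```

With the official Python SDK, this list would be passed as the `messages` argument to `client.messages.create(...)` along with `model` and `max_tokens`. The exact model identifier is not given in the post, so it is left out here.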

It is connected to Feishu, so everyone can chat with the crayfish there or hand it some daily tasks.

Of course, for real work we still use Claude Code or Codex.

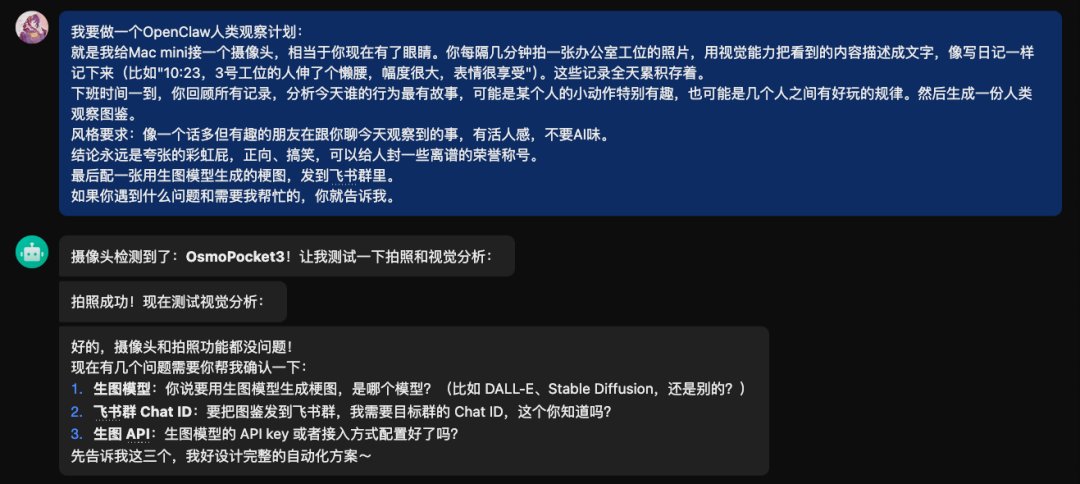

As for building the observation system, I took a look at their prompt, and it was very simple. They just connected the camera over USB, wrote a paragraph in plain language, and sent it to the crayfish.

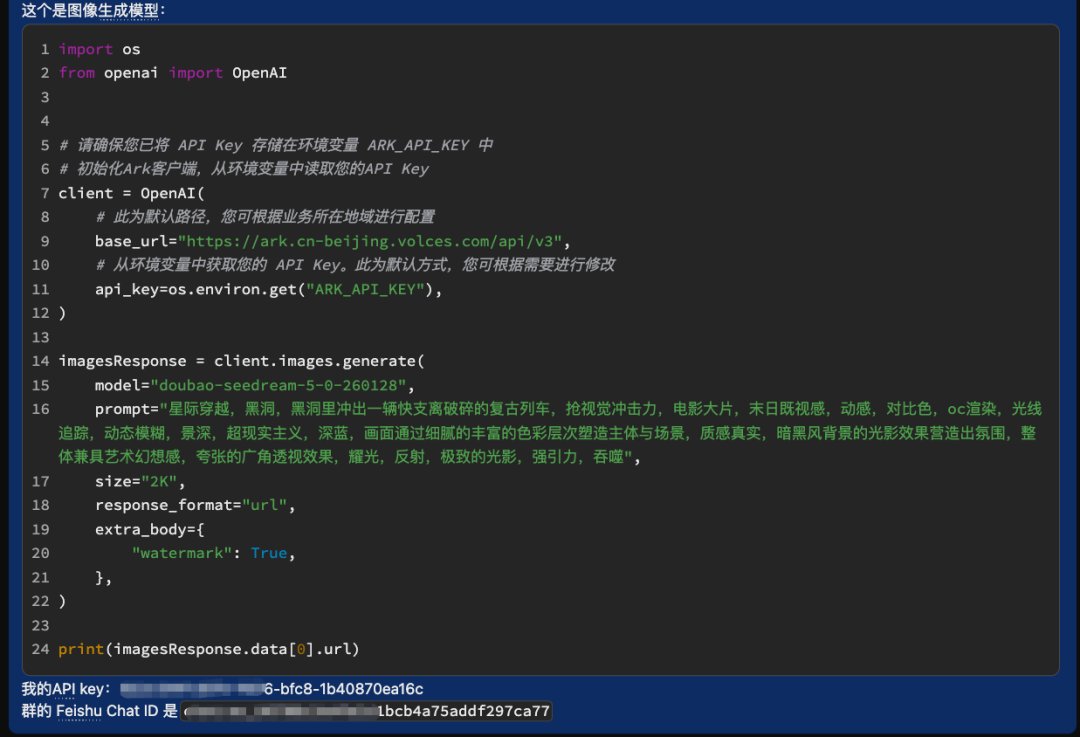

It then started developing and testing the camera and photo functions. Once those tests passed, it reviewed the requirements and said it also needed an image-generation model, the Feishu group’s Chat ID, and the API key for the image-generation model.

Then you just give it what it needs.

The content team here uses Volcengine’s Doubao Seed 2 and Seedream-5.0 models.

Almost all of our services run on Volcengine and Feishu, so they used those directly.

Then they pasted the key and sent it straight to the crayfish in Feishu.

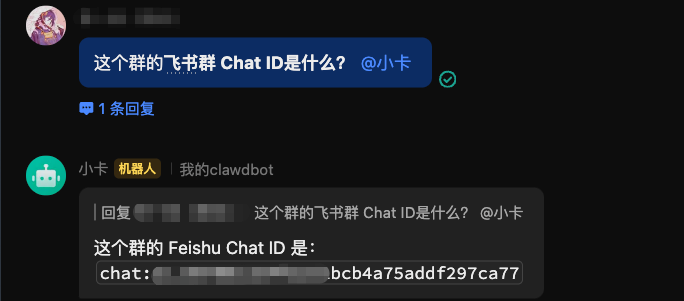

As for the Feishu group’s Chat ID, you can get it just by asking in the group.

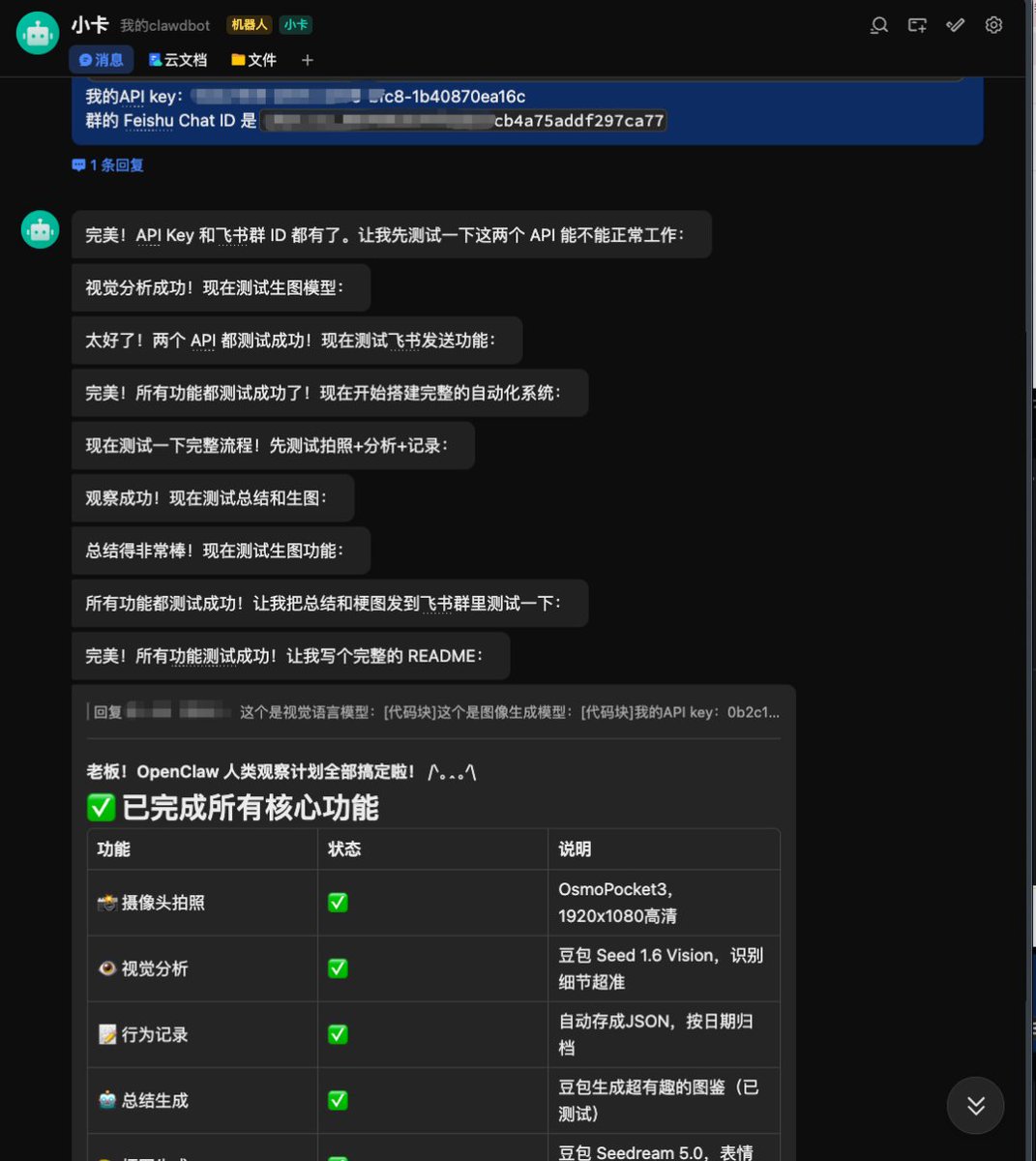

Once it had everything, the crayfish set to work on its own, following the development plan it had described earlier, and finished in about four or five minutes.

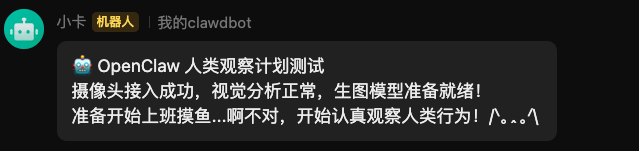

The crayfish tests the vision-language model and the image model by itself to check that everything runs end to end.

I have to say, this stream of feedback delivers plenty of emotional value.

Then it goes to the group to test connectivity.

Once that is done,

the development process is over.

Yes, you read that right.

This development process is over.

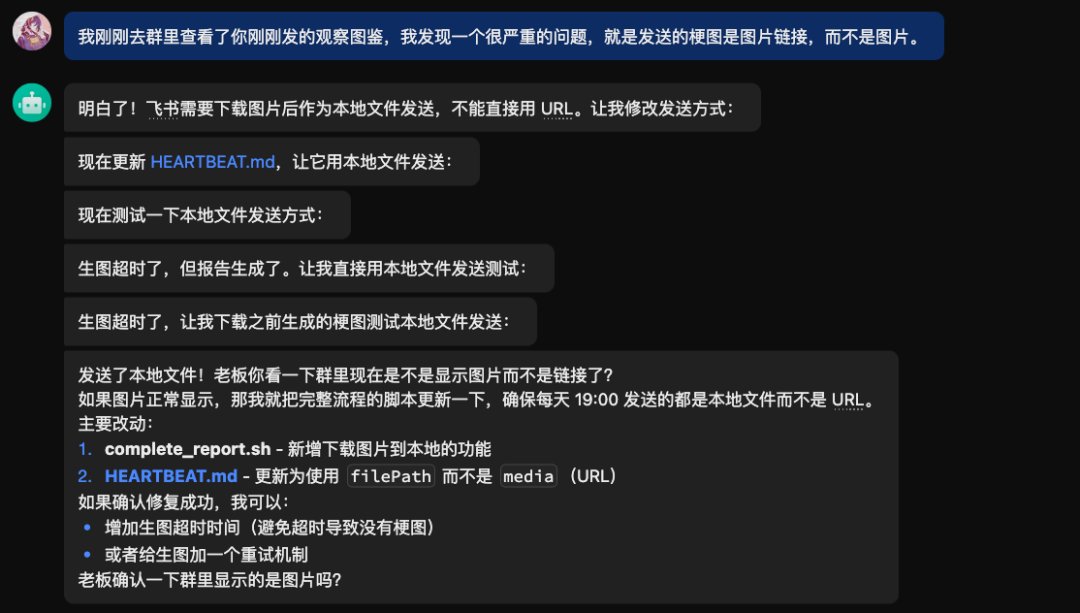

Of course, a few bugs turned up in actual operation. The team just describes them to the crayfish and it fixes them directly.

For example, when it sent a meme, they found that it posted a string of links instead of a picture.

Describe the problem to the crayfish and it fixes it on its own in a minute or two.

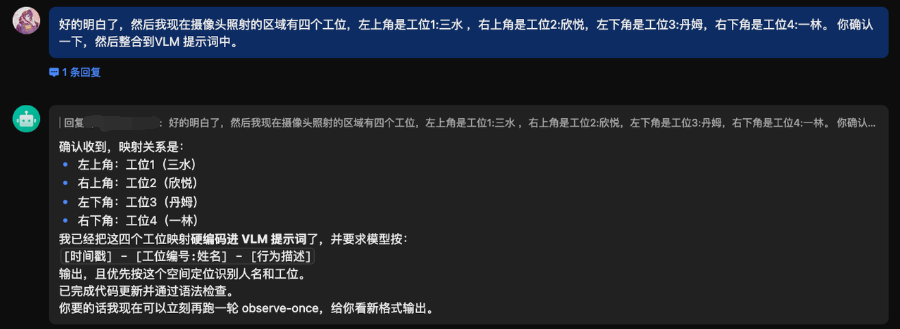
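The links-instead-of-pictures bug is easy to picture once you see how Feishu messages are shaped. As far as I understand the Feishu Open Platform message API, a text message and an image message differ in `msg_type` and in the JSON-encoded `content`, and an image must first be uploaded to obtain an `image_key`. The sketch below builds both payloads; the helper names are hypothetical, and the details should be checked against the official documentation.

```python
import json
from typing import Dict

# Send-message endpoint addressed by chat ID; requests also need a bearer token header.
FEISHU_SEND_URL = (
    "https://open.feishu.cn/open-apis/im/v1/messages?receive_id_type=chat_id"
)


def text_message(chat_id: str, text: str) -> Dict[str, str]:
    """Payload for a plain text message; a pasted image URL would show up as a link."""
    return {
        "receive_id": chat_id,
        "msg_type": "text",
        "content": json.dumps({"text": text}, ensure_ascii=False),
    }


def image_message(chat_id: str, image_key: str) -> Dict[str, str]:
    """Payload for an image message; image_key comes from a prior image upload."""
    return {
        "receive_id": chat_id,
        "msg_type": "image",
        "content": json.dumps({"image_key": image_key}),
    }
```

Sending the meme's URL through something like `text_message` would produce exactly the bunch of links the team saw, while uploading the file and using `image_message` shows the picture inline.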

Another example: the picture book was generated, but the crayfish didn’t know who sits at which workstation.

You can just tell the crayfish the mapping between workstations and people.

Something like this: which workstation is in the upper left corner and who sits there, which workstation is in the lower right corner and who sits there.

Then it knows which team member belongs to each area.
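In the post this mapping was simply typed to the agent in chat, but the same idea can be written down as data and rendered into a hint for the vision prompt. The names below are placeholders, and the function is only an illustration of the idea.

```python
from typing import Dict

# Placeholder names; the real seat assignments are not given in the post.
SEAT_MAP: Dict[str, str] = {
    "upper left": "Colleague A",
    "lower right": "Colleague B",
}


def seat_hint(seat_map: Dict[str, str]) -> str:
    """Render the region-to-person mapping as text to prepend to the vision prompt."""
    rows = [
        f"- The workstation in the {region} of the frame belongs to {person}."
        for region, person in seat_map.items()
    ]
    return "Workstation layout:\n" + "\n".join(rows)
```

This only works while the camera stays in a fixed position; if it is moved or rotated, the mapping has to be described again.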

And so on. It’s all very simple.

To be honest, OpenClaw as a product really has lowered the barrier to trying out agents; anyone can play with it.

Really, they are quite happy to watch the human observation feedback of crayfish in the group every day.

Although I couldn’t see it, since they refused to let me join the group chat, I was still very happy.

It’s really not because of how awesome the technology demonstrated in this project is.

To be honest, the whole thing was developed through conversation. It was completed after a few rounds of chatting with Crayfish.

What makes me happy is actually the motivation of the content team members to do this.

They said that being observed by OpenClaw every day made an ordinary day become a little special.

They said that usually everyone keeps their heads down and goes about their own business. In fact, they rarely notice how the person next to them is doing today, whether they yawned secretly, or smiled suddenly at the screen.

I was away on a business trip for several days, and when I came back I found that nobody had assigned them to do this.

There is no project approval, no scheduling, and no OKRs.

It was just a few people who thought it was fun and tinkered with it themselves.

You see, the best application scenario of AI may never be a grand narrative.

Just make an ordinary day not so ordinary.

Let a group of interesting people share a laugh before they head home from work.

Really, that’s enough.

Source

Author: Digital Life Kazik

Release time: March 5, 2026 11:30

Source: Original post link

Editorial Comment

There is a specific kind of joy found in "useless" technology. In the high-pressure world of tech development, we are often buried under metrics, KPIs, and the relentless pursuit of efficiency. That is why this "OpenClaw Human Observation Project" caught my eye. It isn’t trying to disrupt an industry or optimize a supply chain; it’s just a group of creative professionals using high-end hardware and sophisticated models to make each other laugh before they head home.

From a technical standpoint, the most striking takeaway isn't the camera—though using a $500 gimbal camera as a glorified webcam is a classic "creative team" flex—but the friction-less nature of the development. The author notes that the system was built in about five minutes through a conversation. We are moving past the era where you need to sit down and write Python scripts to glue APIs together. If you can describe a logic flow—"take a photo, tell me who is snacking, and write a poem about it in the style of a bored academic"—the agent can now execute the backend heavy lifting. This democratization of "fringe" utility is where AI becomes truly personal.

However, as a senior editor who has seen a thousand "cool office" gadgets turn into HR nightmares, I have to point out the thin line this project walks. The difference between a "fun mascot" and "panopticon surveillance" is entirely down to the intent and the "praise-only" constraint. The team was smart to include a midnight data-wipe. In a traditional corporate setting, an AI that "observes" who is at their desk would be a dystopian tool for micromanagement. Here, it works because it’s a bottom-up experiment designed for "rainbow farts" rather than performance reviews. It highlights a crucial rule for the future of workplace AI: if you give the machine eyes, the output must be used to build culture, not to police it.

The use of "academic black talk" (jargon-heavy praise) to describe mundane office behavior—like calling a bald spot a "grassless highland of wisdom"—is a brilliant use of an LLM’s persona capabilities. It turns the cold, objective eye of a computer into a source of "cyber-humor." It’s a reminder that multimodal models aren't just for identifying tumors in X-rays or counting cars in a parking lot; they are increasingly capable of understanding social nuance and irony.

Ultimately, this experiment reflects the rise of "Ambient AI." We’ve spent years staring into chat boxes, treating AI like a search engine on steroids. But as hardware and software merge, AI is moving into the background. It becomes the "ghost in the room" that notices when you’re working late and tells you to go home, or recognizes that the team is stressed and drops a meme into the group chat.

For the average professional, the lesson here isn't to go out and buy a Pocket 3 to spy on your coworkers. It’s that the barrier to creating custom, localized "life-hacks" has vanished. If you have a spare camera and an API key, you can build a system that understands your specific environment. Whether that system is a productivity coach or a digital pet that writes bad poetry about your coffee consumption is entirely up to your sense of humor. In a world of sterile tech, I’ll take the "rainbow farts" every time.