Editor’s Brief

A critical look at the tendency of OpenClaw users to over-engineer their setups. The core argument is that "workspace bloat"—creating multiple specialized agents for simple tasks—introduces unnecessary coordination costs that outweigh the benefits of specialization. The text advocates for a "Skills-first" approach, where users maximize the utility of a single agent before scaling to complex multi-agent architectures.

Key Takeaways

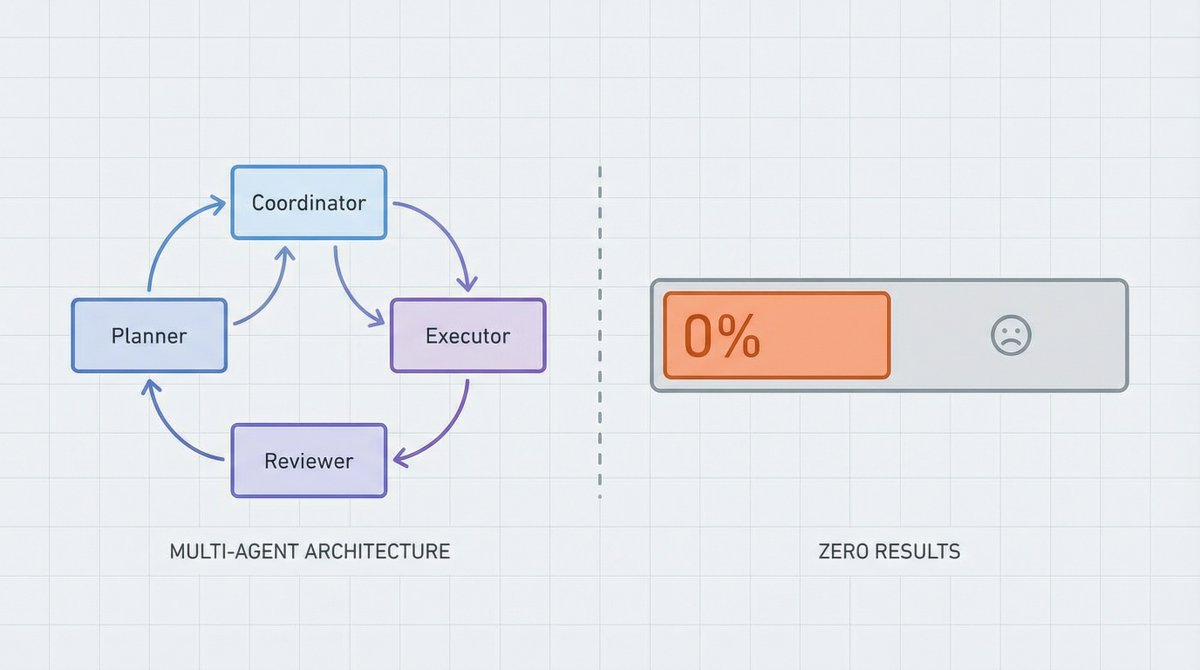

- The "Stationery Trap": Users often spend more time designing complex architecture diagrams than ensuring their agents actually perform work.

- Coordination Tax: Every additional agent introduces friction in the form of context loss, state synchronization, and formatting mismatches.

- Skills vs. Agents: Most "specialized" needs are better served by giving an existing agent a new tool (Skill) rather than creating a new persona (Agent).

- The Coase Equilibrium: Borrowing from economics, the text argues that the boundary of an agent's workspace should only expand when the benefit of specialization exceeds the cost of managing the hand-off.

- Practical Heuristics: Start with one workspace, use Skills to extend capability, and only split into multiple agents when faced with genuine bottlenecks like context window limits or the need for true parallel execution.

Editor’s Brief

Mortal Xiaobei’s post has been circulating widely in the OpenClaw community, and for good reason: it directly challenges the default assumption that more workspaces equal better architecture. Drawing on Coase’s theory of the firm and real-world failures documented by GitHub’s engineering team, the author makes a compelling case that optimizing an OpenClaw setup on a budget starts not with adding structure but with resisting the urge to add it prematurely. For solo developers and small teams running OpenClaw on constrained budgets, this is essential reading. The coordination overhead of multi-agent setups (context loss, format mismatches, error propagation) is not a theoretical concern; it is a measurable cost that most practitioners systematically underestimate. This piece provides the economic framework and practical heuristics to avoid that trap.

Key Takeaways

- The Stationery Fallacy is real: Configuring elaborate multi-workspace architectures before producing any output is a form of productive procrastination. The architecture diagram is not the deliverable — task completion is.

- Coase’s boundary test applies directly to agents: Split a task into multiple workspaces only when the benefit of specialization demonstrably exceeds the coordination cost. If your Coordinator spends most of its logic managing handoffs, you have built a bureaucracy, not a system.

- Skills before Agents: Most capabilities that feel like they need a dedicated workspace can be handled by installing a Skill (tool) on a single agent. A weather query does not need a weather agent — it needs a weather skill.

- Three valid reasons to split: True parallelism (independent tasks running simultaneously), hard permission isolation (different API keys or security contexts), and context window exhaustion (a single agent literally cannot hold enough state).

- Sequential often beats parallel for budget users: The author’s own test — 8 front-end tasks done sequentially in 4 hours with zero coordination cost versus 30 minutes parallel with merge conflicts and style inconsistencies — shows that speed is not always the right optimization target.

- Rejection logs are the hidden asset: When you do split workspaces, tracking what was decided against and why is the most valuable and most neglected coordination artifact.

- The correct progression is linear: Make one workspace work reliably, expand its capabilities with Skills, and only split when you hit a hard operational wall. Skipping steps one and two is the root cause of most multi-agent failures.

NovVista Editorial Comment — Michael Sun

There is a pattern NovVista sees repeatedly in the developer tools space that Mortal Xiaobei’s post captures with unusual precision: the conflation of architectural complexity with engineering competence. In the OpenClaw community specifically, the default onboarding path encourages users to think in terms of multi-workspace orchestration (Researcher, Writer, Coder, Coordinator), as though the framework’s value were proportional to the number of agents deployed. The reality, as this post documents, is closer to the opposite. For budget-conscious users in particular, optimizing an OpenClaw setup is not about maximizing the number of workspaces; it is about running as few workspaces as possible while maximizing what each one can accomplish.

The Coase framework is the strongest intellectual contribution here, and it deserves more attention than the original post gives it. Ronald Coase’s insight was not just that firms exist to reduce transaction costs — it was that the boundary of the firm is dynamic and determined by the relative cost of internal coordination versus external contracting. Applied to agentic systems, this means the “right” number of workspaces is not a fixed architectural decision. It changes as your tools improve, as context windows expand, and as skill libraries mature. An architecture that required three agents six months ago may now be optimally served by one agent with better tooling. Budget users who lock themselves into a multi-workspace design are not just paying coordination costs today — they are committing to paying them indefinitely, even as the conditions that justified the split erode.

What the original post underweights is the psychological dimension of over-engineering. The stationery metaphor is charming, but the underlying dynamic is more specific than procrastination. In developer communities, there is genuine social reward for sharing complex architecture diagrams. A screenshot of four beautifully labeled workspaces with arrows between them generates engagement. A screenshot of a single workspace with twelve skills installed does not. The incentive structure of the community actively punishes the correct approach and rewards the wasteful one. This is not a minor observation — it explains why the pattern persists even among experienced developers who should know better. The n8n example in the original post is telling: even pragmatic automation experts who pride themselves on simplicity fall into the same trap when the community rewards visible complexity.

For NovVista readers running OpenClaw on limited compute budgets, the practical implications are direct. Every additional workspace is not just an architectural decision — it is a cost multiplier. Each agent consumes context window tokens, requires its own system prompt, and introduces latency through inter-agent communication. On budget-tier API plans with token limits or rate caps, a four-workspace setup can consume four times the tokens of a single-workspace setup for the same task, with the additional tokens going entirely to coordination overhead rather than productive output. The Skills-first approach is not just architecturally cleaner; it is materially cheaper. One agent calling twelve tools uses one context window. Four agents coordinating to call three tools each use four context windows plus the coordination tokens. The math is unambiguous.
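The token arithmetic can be made concrete with a rough cost model. This is a minimal sketch under stated assumptions: the `Setup` class, its fields, and every number are hypothetical illustrations, not measurements from OpenClaw or any API plan.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Setup:
    """Hypothetical token cost model; all numbers used below are illustrative."""
    agents: int                # number of workspaces/agents
    system_prompt_tokens: int  # system prompt, paid once per agent
    context_tokens: int        # task context each agent must hold to do its part
    work_tokens: int           # tokens spent on the actual task output
    handoffs: int              # number of inter-agent messages
    tokens_per_handoff: int    # size of each handoff message

    def total_tokens(self) -> int:
        # Each agent pays for its own prompt and its own copy of the context.
        per_agent = self.system_prompt_tokens + self.context_tokens
        coordination = self.handoffs * self.tokens_per_handoff
        return self.agents * per_agent + self.work_tokens + coordination


single = Setup(agents=1, system_prompt_tokens=1500, context_tokens=6000,
               work_tokens=4000, handoffs=0, tokens_per_handoff=0)
multi = Setup(agents=4, system_prompt_tokens=1500, context_tokens=6000,
              work_tokens=4000, handoffs=6, tokens_per_handoff=800)

print(single.total_tokens())  # 11500
print(multi.total_tokens())   # 38800
```

With these assumed numbers the four-agent setup spends roughly 3.4 times the tokens for the same amount of task output. The exact multiple depends on how much context each agent really needs to re-read.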

The one area where I would push back on the original post is the implied finality of the single-workspace recommendation. The correct framing is not “one workspace is always better” but rather “one workspace is the correct starting point, and the burden of proof for splitting should be high.” There are legitimate use cases for multi-agent architectures — long-running parallel code generation, security-isolated environments for handling different client credentials, or workflows where context window limits are genuinely reached. The danger the original post correctly identifies is that most users reach for multi-agent solutions long before they reach those legitimate boundaries. The discipline is in waiting for the wall rather than preemptively building around an imagined one.

Introduction

The following content was compiled by NovVista from public X/social media content and is for reading and research reference only.

Focus

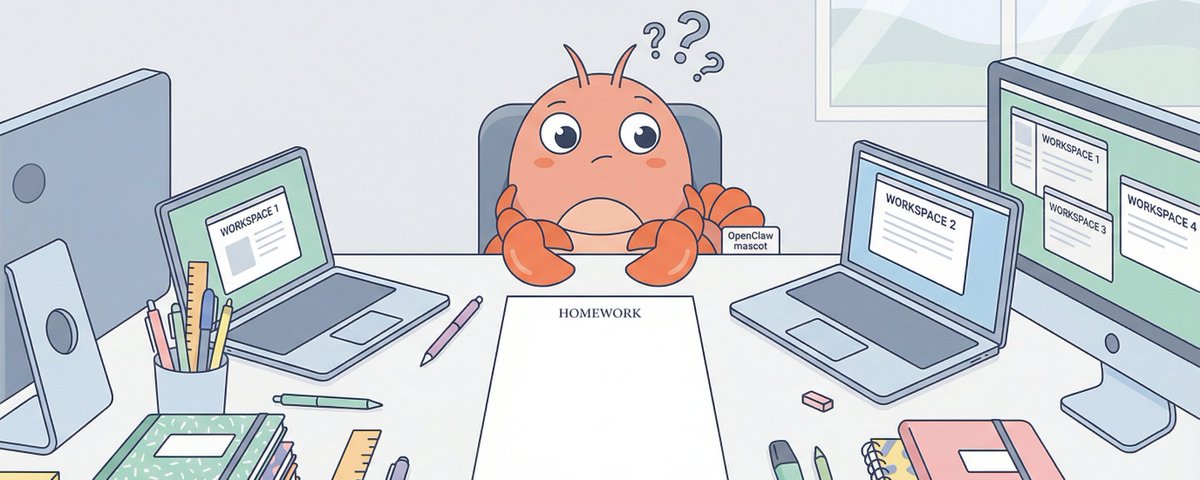

- Weak students have the most stationery: in OpenClaw, you may not need 4 Workspaces

- An interesting pattern has emerged recently in the OpenClaw community: many people rush to configure a bunch of workspaces when they first get started.

Remark

For parts involving rules, benefits or judgments, please refer to Mortal Xiaobei’s original wording and the latest official information.

Editorial comments

This article, “X Import: Mortal Xiaobei – Weak students have the most stationery: in OpenClaw, you may not need 4 Workspaces”, comes from the X social platform and was written by Mortal Xiaobei. The original packs a high density of key information, especially in its core conclusions and action suggestions, which are readily actionable.

The post opens with a counterintuitive observation: “Recently, I saw an interesting phenomenon in the OpenClaw community: many people are rushing to configure a bunch of workspaces when they first get started. The architecture is beautifully drawn: clear division of roles and clear boundaries of responsibilities. Then what? Each workspace is empty, and the Coordinator can do everything by itself. This reminds me of what my teacher said when I was a kid: …”

For readers, the most direct value is not learning a new point of view but quickly seeing the conditions, boundaries, and potential costs behind it. Broken down into verifiable judgments, the content includes at least two claims: that you may not need 4 Workspaces, and that many newcomers to OpenClaw rush to configure a bunch of workspaces. Conclusions like these are the easiest part to spread, but what determines their practical value is whether the underlying assumptions hold, whether the sample is sufficient, and whether the time window matches.

We recommend that readers quoting this type of information first check the data source, the release time, and any differences in platform environment, to avoid mistaking scenario-specific experience for a universal rule. In terms of industry impact, content like this usually has a short-term guiding effect on product strategy, operational rhythm, and resource investment, especially in topics such as AI, development tools, growth, and commercialization. From an editorial perspective, we care most about whether it can withstand later fact-checking: first, whether the results can be reproduced; second, whether the method transfers; third, whether the cost is affordable. The source is x.com, and readers are advised to treat it as one input for decision-making, not the only basis.

Finally, a practical suggestion: if you plan to act on this, run a small-scale verification first, then expand investment gradually based on feedback. If the original article involves revenue, policy, compliance, or platform rules, refer to the latest official announcement and keep a rollback plan. Reprinting improves the efficiency of information circulation, but the real value of content comes from secondary judgment and local practice. On that principle, the editorial comments accompanying this article will continue to emphasize verifiability, boundary awareness, and risk control, to help you turn “visible information” into “actionable understanding.”

Weak students have the most stationery: in OpenClaw, you may not need 4 Workspaces

A counterintuitive observation

Recently I have seen an interesting phenomenon in the OpenClaw community: many people are rushing to configure a bunch of workspaces when they first get started.

The architecture is beautifully drawn:

There is a clear division of roles and clear boundaries of responsibilities. Then what?

Each workspace is empty, and the Coordinator can do everything by itself.

This reminds me of what my teacher said when I was a kid: weak students have the most stationery.

It’s not just newbies making mistakes

You may think this is a newbie problem. It is not.

I saw a post on Reddit’s r/n8n the other day. n8n is a no-code workflow platform, and its users are mostly experienced developers and automation experts. The post said:

“I have seen too many workflows where 5-6 specialized agents coordinate with each other. When I open them up, the job could actually be done with 3 nodes + one agent.”

The poster gave an example: someone had created three agents, one each for email drafting, email sending, and email follow-up tracking.

One agent + three tools would obviously solve the problem, yet it was split into three agents.

Similar voices have come from big tech. GitHub recently published a blog post, “Multi-agent workflows often fail”, reviewing the pitfalls its engineers have run into with Copilot and internal automation:

“Most multi-agent failures come down to missing structure, not model capability.”

In other words, the main cause of multi-agent failure is not insufficient model capability but problems in structural design.

What’s interesting is that n8n is a no-code community whose users are mostly pragmatists who want things to “just work”, while GitHub is a big-tech engineering team that cares about architecture and best practices. The two groups have completely different backgrounds, yet they make the same mistake.

Why?

Because we naturally love to make simple things complicated.

Why do we love over-design?

Complex = professional?

Human beings have a deep-rooted intuition: complex things are more powerful.

Four agents with a clear division of labor and arrows pointing back and forth look much more “professional” than one agent working alone.

But this is an illusion.

Drawing architecture diagrams feels great. You are creating, you are planning, and you feel that you are doing “correct engineering practice.”

Getting an agent to actually run end to end is painful. You have to deal with edge cases, debug strange bugs, and face the soul-searching question of “Why can’t it do such a simple thing well?”

Why do weak students have so much stationery? Because buying stationery is easier than doing homework, and organizing stationery is more enjoyable than solving problems.

The temptation of division of labor

In 1776, Adam Smith told the story of a pin factory in “The Wealth of Nations”: ten workers dividing the labor could produce 48,000 pins a day; one person working alone could not make even 20.

Specialization brings efficiency gains, which is the cornerstone of economics.

But what Smith didn’t tell you is: specialization also brings coordination costs.

Who is responsible for what? How to hand over? Who will take care of things if something goes wrong?

In 1937, economist Ronald Coase asked a question: If the market division of labor is so efficient, why are firms needed?

The answer is: transaction costs. The costs of finding people in the market, negotiating, signing contracts, and supervising implementation…these costs may add up to more than “hiring one person to do it all.”

The boundaries of an enterprise depend on the balance between coordination costs and division of labor benefits.

The same goes for AI Agents.

Tools have changed, and so have boundaries

Interestingly, in recent years we have seen a trend: more and more one-person companies.

One person + Vercel + Supabase + Stripe + ChatGPT can make products that previously required 10 people.

Why? Because the tools have gotten better.

When one person + good tools can do the work of three people, you don’t need three people. Not because three people are bad, but because the cost of coordinating three people may exceed the extra output they provide.

OpenClaw’s Skill system follows the same logic:

- A Skill is a capability extension: it installs a new tool on an existing agent

- An Agent is a role split: it spins up an independent brain

Do you need a “weather agent” to check the weather? No, just install a weather skill.

Most “specialized requirements” can be met with Skills, with no need to split off another agent.
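The Skill-versus-Agent distinction can be sketched in a few lines. This is a toy illustration, not the OpenClaw API: the `Agent` class, `install_skill`, and `weather_skill` are hypothetical names invented for the example, and the weather handler is a stub.

```python
from typing import Callable, Dict


class Agent:
    """A toy single agent with a skill registry (hypothetical, not OpenClaw)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.skills: Dict[str, Callable[[str], str]] = {}

    def install_skill(self, skill_name: str, handler: Callable[[str], str]) -> None:
        # Adding a capability is one dictionary entry, not a new agent.
        self.skills[skill_name] = handler

    def run(self, skill_name: str, request: str) -> str:
        if skill_name not in self.skills:
            raise KeyError(f"{self.name} has no skill named {skill_name!r}")
        return self.skills[skill_name](request)


def weather_skill(city: str) -> str:
    # Stub: a real skill would call a weather service here.
    return f"(stub) forecast for {city}"


main = Agent("main")
main.install_skill("weather", weather_skill)
print(main.run("weather", "Berlin"))  # (stub) forecast for Berlin
```

The point of the sketch is that the new capability shares the agent's existing context. Nothing has to be handed off, so there is no format or state to keep in sync.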

Coordination costs are severely underestimated

Hidden costs of multiple agents:

- Context loss: what the Coordinator knows, the Researcher does not

- Format alignment: one side passes JSON, the other expects Markdown

- State synchronization: who is doing what, and how far along are they?

- Error propagation: if the Researcher makes an error, how does the Coordinator find out?

Within a single agent, all of this is handled automatically. Once you split into multiple agents, each item must be handled explicitly.

The GitHub blog also notes that agents make many implicit assumptions about state, order, and format. When those implicit assumptions turn out wrong, the entire system breaks down.
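One way to turn an implicit format assumption into an explicit check is to validate every handoff at the boundary. The sketch below is an assumption-laden illustration: the `Handoff` type and `validate_handoff` function are hypothetical, and only the JSON-versus-Markdown mismatch from the list above is modeled.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Handoff:
    """Hypothetical message passed from one agent to another."""
    sender: str
    receiver: str
    payload_format: str  # "json" or "markdown"
    payload: str


def validate_handoff(handoff: Handoff, expected_format: str) -> List[str]:
    """Return a list of problems; an empty list means the handoff is acceptable."""
    problems: List[str] = []
    if handoff.payload_format != expected_format:
        problems.append(
            f"{handoff.receiver} expects {expected_format}, got {handoff.payload_format}"
        )
    if handoff.payload_format == "json":
        try:
            json.loads(handoff.payload)
        except json.JSONDecodeError as exc:
            problems.append(f"payload is not valid JSON: {exc.msg}")
    return problems


report = Handoff("researcher", "coordinator", "json", '{"findings": []}')
print(validate_handoff(report, "markdown"))
```

Here the mismatch is reported as a problem instead of surfacing later as a silent failure. Inside a single agent this check is unnecessary, which is the coordination cost the text describes.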

Our lessons

Here is our own experience.

At the beginning, we excitedly set up a multi-workspace configuration: four roles, four bots. The configuration took a day.

After running it for two weeks, we found:

- Searching for information? Just use web_search directly in main.

- In-depth analysis? Main can load an analysis skill.

- Executing tasks? Main calls the various tools, and that’s it.

Only one scenario really required an independent agent: writing code in parallel.

For example, on a website project you can let Codex write the front end and Claude Code write the back end, and the two can run at the same time. That is a case where you really do need more agents.

A concrete example

I recently built a website and had 8 front-end tasks.

Option A: one Codex working through the tasks sequentially

- Time taken: 4 hours

- Coordination cost: 0

Option B: 8 Codex instances working in parallel

- Time taken: 30 minutes

- Coordination costs: merge conflicts, inconsistent styles, duplicate components

We chose Option A.

When does Option B make sense? When the back end and front end are being integrated, running two agents in parallel is worthwhile, because having one wait on the other is a waste.

Lesson: splitting too early = higher coordination costs + no benefit.
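The trade-off between Option A and Option B comes down to simple arithmetic. In this sketch the 4-hour and 30-minute figures come from the text, while `cleanup_hours` (time spent resolving merge conflicts, style drift, and duplicate components) is a hypothetical input, since the post does not say how long that cleanup would have taken.

```python
SEQUENTIAL_HOURS = 4.0  # Option A, from the text
PARALLEL_HOURS = 0.5    # Option B, from the text (30 minutes)


def parallel_total_hours(cleanup_hours: float) -> float:
    """Wall-clock time for Option B once coordination cleanup is included."""
    return PARALLEL_HOURS + cleanup_hours


def break_even_cleanup_hours() -> float:
    """Cleanup time at which Option B stops being faster than Option A."""
    return SEQUENTIAL_HOURS - PARALLEL_HOURS


print(break_even_cleanup_hours())  # 3.5
print(parallel_total_hours(5.0))   # 5.5
```

Under these assumptions, the parallel run is only faster if the cleanup takes less than 3.5 hours. The calculation covers wall-clock time only and ignores the extra review effort that eight parallel outputs require.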

If you really do need to split

This is not to say you should never split, only that you should wait until you hit a bottleneck.

When is it really needed?

- True parallelism: two tasks run at the same time, independent of each other

- Hard isolation requirements: different permissions, different contexts

- Context explosion: a single agent’s context window is genuinely not large enough
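The three conditions above can be expressed as a small checklist function. This is a sketch for illustration only: the `Workload` fields and the token figures in the usage lines are hypothetical, and real limits depend on the model you run.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of what a single agent is being asked to do."""
    has_independent_parallel_tasks: bool
    needs_isolated_permissions: bool
    peak_context_tokens: int
    context_window_tokens: int


def reasons_to_split(w: Workload) -> List[str]:
    """Return the valid reasons to add an agent; an empty list means stay single."""
    reasons: List[str] = []
    if w.has_independent_parallel_tasks:
        reasons.append("true parallelism")
    if w.needs_isolated_permissions:
        reasons.append("hard isolation")
    if w.peak_context_tokens > w.context_window_tokens:
        reasons.append("context explosion")
    return reasons


everyday = Workload(False, False, peak_context_tokens=20_000, context_window_tokens=128_000)
print(reasons_to_split(everyday))  # []
```

An empty result is the expected default. The function only reports a reason when one of the three hard conditions is actually met, which mirrors the advice to wait for a real bottleneck.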

What to do after splitting

If you do decide to split, have these ready:

- Explicit handover checklist

Every handoff must be clear: output format, tone goals, context, completion criteria. Don’t assume the other side “should know.”

- Three logs

  - Action log: what was done

  - Rejection log: what was not done, and why (the most easily ignored, but the most valuable)

  - Handover log: what was handed to whom

Rejection logs are particularly important. Deciding what not to do is invisible work that no one sees, but when it is skipped, the system runs into problems.

- Near-miss summary

Regularly tally the incidents that almost went wrong but were caught in time. This data quantifies the value of coordination, which guards against a familiar chain of failure: the coordinator is undervalued, its resources are cut, and the system collapses.
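The three logs and the near-miss tally fit in one small structure. This is a minimal in-memory sketch with hypothetical names (`CoordinationLog` and its methods); a real setup would persist the entries somewhere every agent can read them.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List


@dataclass
class CoordinationLog:
    """Hypothetical container for the three logs plus a near-miss tally."""
    actions: List[str] = field(default_factory=list)        # what was done
    rejections: List[str] = field(default_factory=list)     # what was not done, and why
    handovers: List[str] = field(default_factory=list)      # what was handed to whom
    near_misses: Counter = field(default_factory=Counter)   # caught-in-time incidents

    def record_action(self, what: str) -> None:
        self.actions.append(what)

    def record_rejection(self, what: str, why: str) -> None:
        self.rejections.append(f"{what}: {why}")

    def record_handover(self, to: str, what: str) -> None:
        self.handovers.append(f"{to} <- {what}")

    def record_near_miss(self, kind: str) -> None:
        self.near_misses[kind] += 1

    def near_miss_summary(self) -> str:
        total = sum(self.near_misses.values())
        if total == 0:
            return "0 near misses"
        detail = ", ".join(f"{k}={n}" for k, n in sorted(self.near_misses.items()))
        return f"{total} near misses ({detail})"


log = CoordinationLog()
log.record_rejection("rewrite auth module", "out of scope for this task")
log.record_near_miss("format mismatch")
log.record_near_miss("format mismatch")
log.record_near_miss("stale context")
print(log.near_miss_summary())  # 3 near misses (format mismatch=2, stale context=1)
```

Keeping the rejection reason next to the rejected item is the design choice that matters here, because it records the "why not" that would otherwise be lost.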

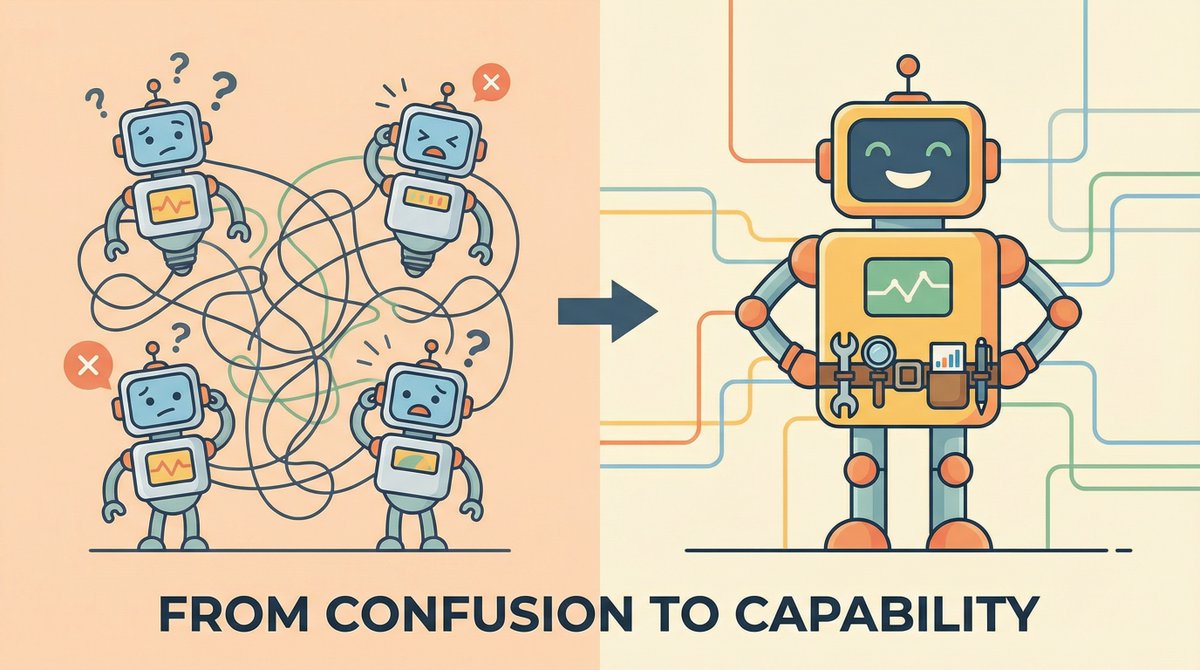

Correct order

1. First, get one workspace actually working

   - It can complete daily tasks

   - It can handle edge cases

   - It produces stable output

2. Use Skills to expand capabilities

   - Need a new capability? Install a Skill

   - No new role required

3. Consider splitting only when you hit a bottleneck

   - Single agent responds too slowly → consider parallelism

   - Context is too long → consider splitting

   - Permissions need to be isolated → consider an independent workspace

Don’t skip 1 and 2 and go straight to 3.

Coase’s problem

Back to Coase’s question: Where are the boundaries of the enterprise?

The answer: the boundary sits where internal coordination costs equal external transaction costs.

The same goes for the boundaries of AI Agents.

It is only worth splitting when “the benefits of splitting into multiple agents” > “coordination costs”.
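The same condition can be written as a small inequality. The symbols are assumptions introduced here for illustration, not notation from the original post: $B(n)$ is the benefit of running $n$ agents and $C(n)$ is the coordination cost, with $C(1) = 0$ because a single agent has no handoffs.

```latex
\[
  \text{split into } n \text{ agents}
  \iff
  B(n) - B(1) > C(n),
  \qquad C(1) = 0
\]
```

Read this way, better tools and Skills raise $B(1)$, which is why the number of agents worth running can shrink over time.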

Otherwise, you are just the weak student with too much stationery.

Questions for you

Before you rush to provision a second workspace, ask yourself:

- Is it really true that one workspace + Skills can’t do it?

- After splitting, are you ready to bear the coordination costs?

- Are you solving a problem, or enjoying the thrill of “designing complex systems”?

If you can avoid splitting, don’t split. If you must split, wait until you hit a real bottleneck.

Source

Author: Mortal Xiaobei

Release time: February 28, 2026 22:57

Source: Original post link

Editorial Comment

There is a specific kind of procrastination that looks exactly like hard work. In the world of software and automation, we call it over-engineering. The source text hits on a phenomenon that is becoming rampant in the burgeoning agentic ecosystem: the "Stationery Trap." It’s the digital equivalent of a student buying ten different colored highlighters instead of actually reading the textbook. In OpenClaw, this manifests as users building elaborate four-workspace architectures before they’ve even successfully automated a single, boring task.

The allure of the multi-agent system is psychological. Drawing a diagram with four distinct roles—a Researcher, a Writer, a Coder, and a Coordinator—feels professional. It feels like "proper" engineering. However, as the text correctly identifies, we are often ignoring the "Coordination Tax." In traditional management, we know that adding more people to a late project makes it later. In agentic workflows, adding more agents to a simple task makes it more fragile.

Every time Agent A hands a task to Agent B, something is lost in translation. You have to manage the hand-off, ensure the output of one matches the input requirements of the other, and deal with the "silent failures" where an agent makes an implicit assumption that the other doesn't share. When you keep everything within a single workspace, these problems largely vanish. The "brain" has all the context, all the tools, and no need to negotiate with itself.

The distinction between "Skills" and "Agents" is the most practical takeaway here. For most users, a "Researcher" doesn't need to be a separate agent; it just needs to be an agent with a web-search skill. A "Data Analyst" doesn't need a separate workspace; it just needs a Python execution skill. By treating agents as roles rather than just toolsets, users are prematurely optimizing for a level of complexity they haven't reached yet.

We should look at this through the lens of Ronald Coase’s theory of the firm. Coase argued that companies exist because the cost of coordinating tasks internally is sometimes lower than the cost of contracting them out in the open market. The same logic applies to OpenClaw workspaces. You should only "contract out" a task to a second agent when the internal "cognitive load" of the first agent becomes so high—due to context window limits or conflicting instructions—that the cost of coordination becomes the lesser of two evils.

For the budget user or the solo developer, the goal should be "Minimum Viable Complexity." If one workspace can do the job, using two is a failure, not a feature. The text mentions that even high-end engineering teams at GitHub are finding that multi-agent workflows often fail not because the underlying technology is weak, but because the structural design is too brittle.

My advice to anyone starting with OpenClaw or similar orchestration tools is to stay in one workspace until it hurts. Wait until you are hitting hard limits: perhaps you need two things to happen at the exact same time (true parallelism), or you need to strictly isolate sensitive data from a specific tool. Until you hit those walls, adding more workspaces is just "buying stationery." It’s a distraction from the actual work of building a system that produces value. In the current landscape, the most sophisticated users aren't the ones with the most complex diagrams; they are the ones getting the most done with the simplest possible setup. Simplicity is not just a budget constraint; it is a competitive advantage.