Editor’s Brief

New industry data from DX CTO Laura Tacho reveals a striking paradox: while nearly every developer (92.6%) now uses AI assistants, the actual time saved has plateaued at roughly four hours per week. The data suggests that while AI is exceptionally good at accelerating individual tasks like onboarding and initial code generation, it acts as a "dysfunction amplifier" for organizations with poor underlying systems. The real frontier isn't faster typing, but solving the systemic "human" bottlenecks that AI currently cannot touch.

Key Takeaways

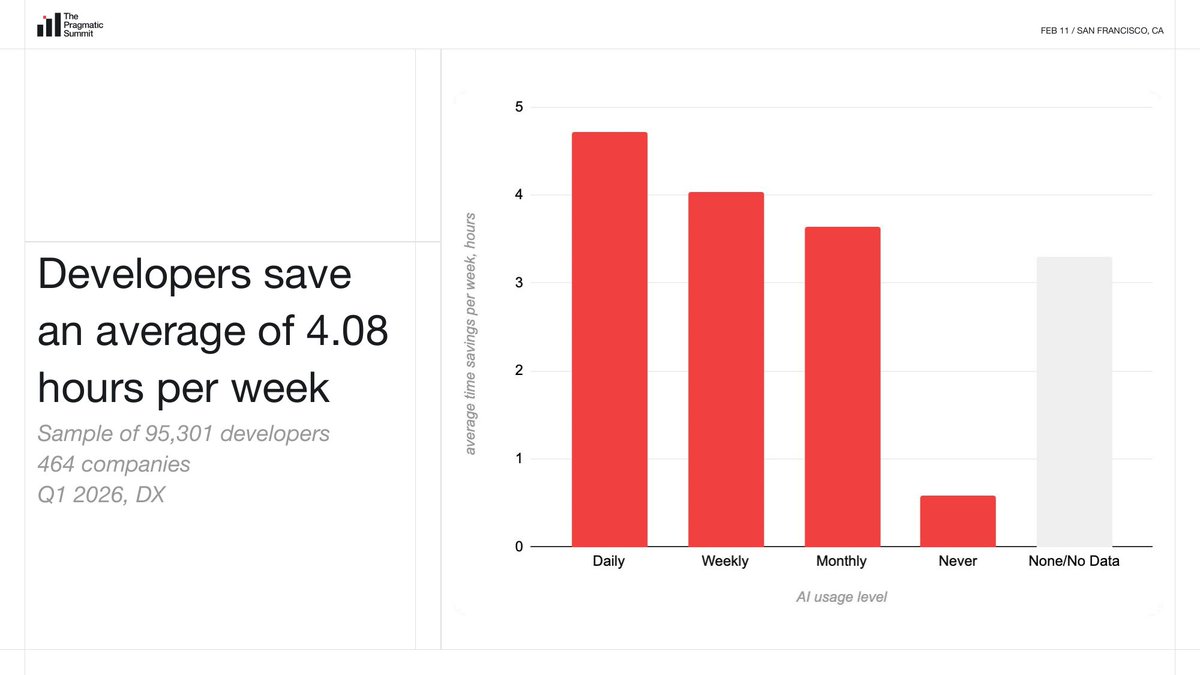

- **The Productivity Plateau:** Despite rapid model improvements, weekly time savings have remained stagnant at approximately 4 hours for several quarters, suggesting a "ceiling" for individual coding assistance.

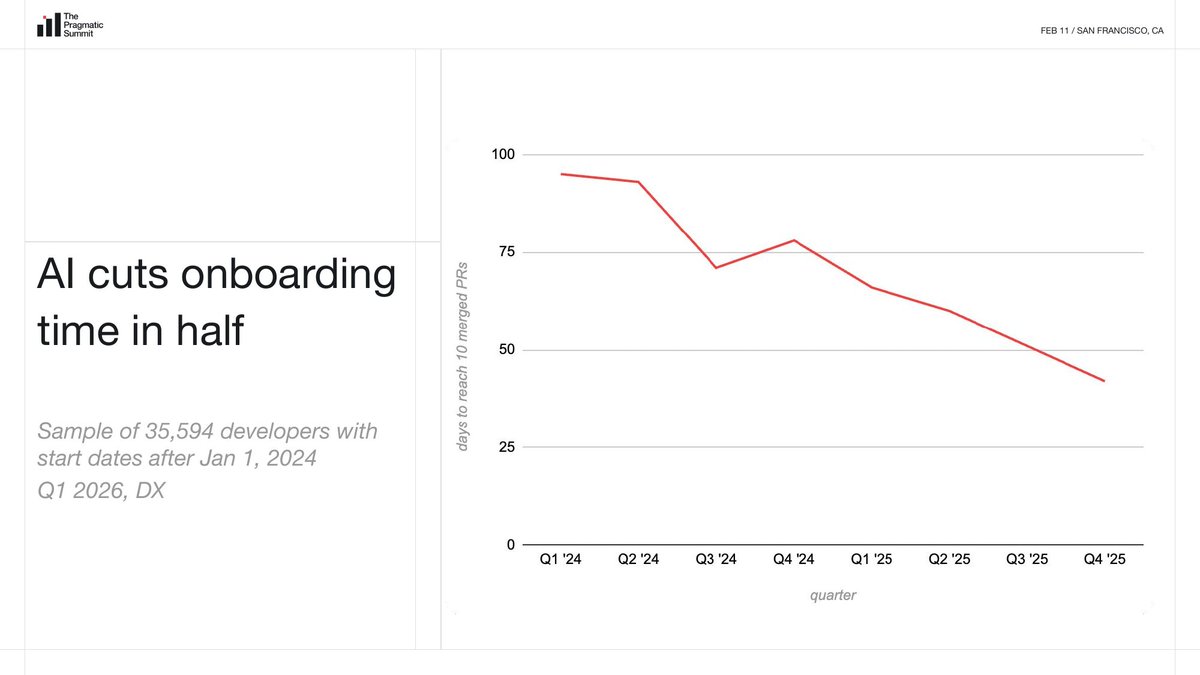

- **The Onboarding Win:** AI’s most measurable success is halving the time it takes new hires to reach their 10th Pull Request, a key predictor of long-term performance.

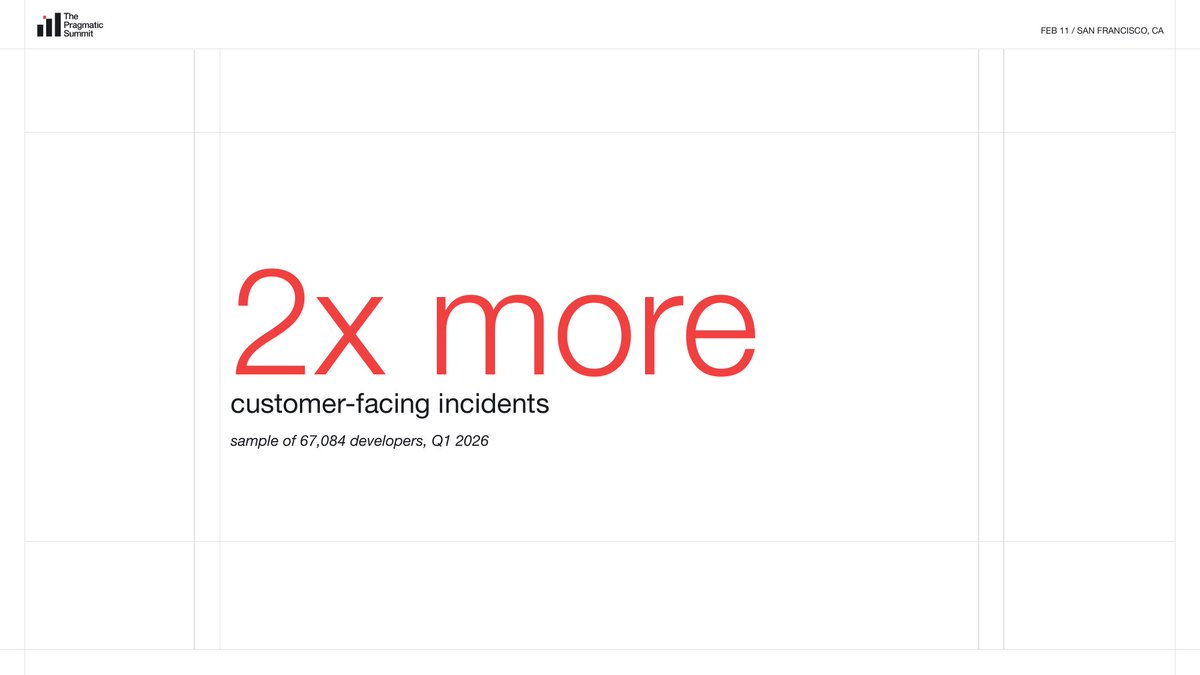

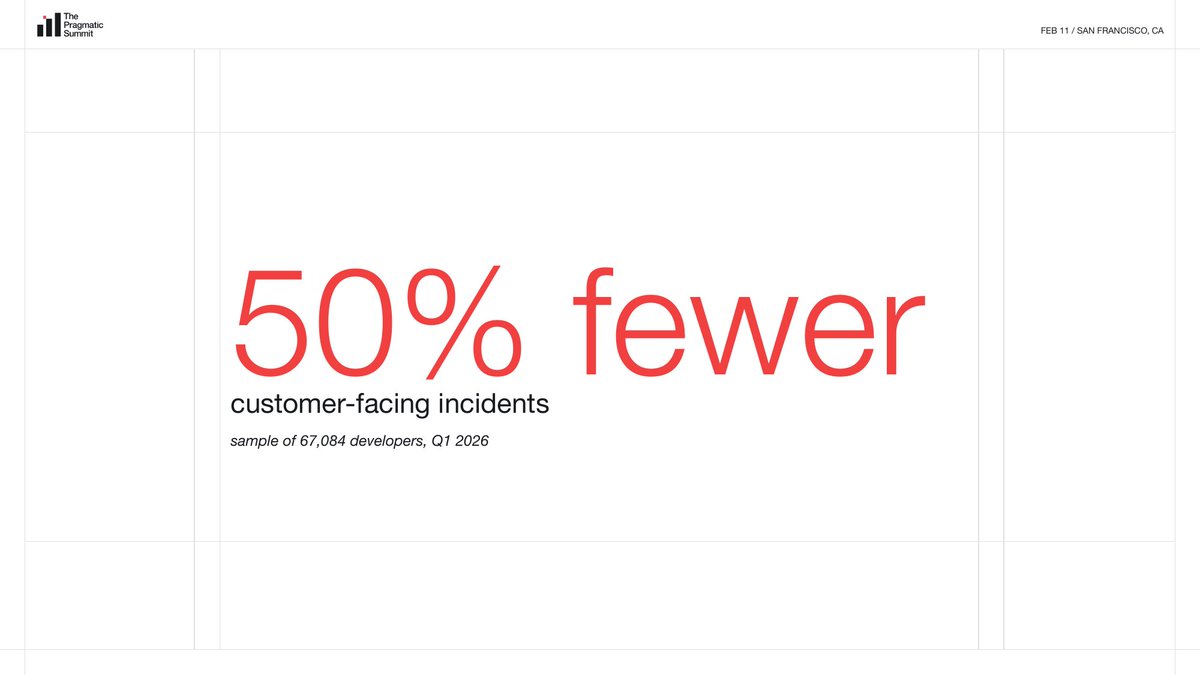

- **The Dysfunction Amplifier:** AI is widening the gap between high- and low-performing teams; while some saw incidents drop by 50%, others saw them double as AI pushed bad code through broken processes faster.

- **Low Organizational ROI:** MIT research indicates 95% of enterprise AI projects fail to yield measurable financial impact, largely because they focus on individual tasks rather than backend automation or structural change.

- **The "Agent Experience" Pivot:** Engineering leaders are successfully securing budgets for long-neglected infrastructure (docs, CI/CD, testing) by rebranding "Developer Experience" as "Agent Experience."

- **Systemic Limits:** Industry veterans like Martin Fowler and Kent Beck argue that AI cannot fix broken organizational cultures; without addressing human constraints, AI simply "takes the mess to space."

Editor’s Brief — Michael Sun, NovVista

This piece deserves your full attention if you lead engineers, buy developer tooling, or are trying to make sense of why your AI investment has not moved the needle the way vendors promised. Laura Tacho is CTO of DX and has access to something almost no analyst does: survey data from 121,502 developers across 464 companies, collected between November 2025 and February 2026. For developers using AI coding assistants, this survey is the clearest industry mirror available right now. The headline finding — 92.6% monthly adoption, yet time savings stuck at four hours per week — cuts through the noise of product marketing and conference keynotes. NovVista’s perspective: the data does not argue against AI adoption. It argues that adoption without organizational readiness is a fast lane to nowhere. Read the full piece, then come back to the editorial comment below.

Key Takeaways

- The four-hour ceiling is real. With 121,502 developers surveyed, self-reported AI time savings have plateaued at approximately 4 hours per week across consecutive quarters — despite faster models and better tooling. Individual task acceleration has a hard ceiling.

- AI is an amplifier, not a corrective. In the same sample period, some companies saw client incidents drop by 50% while others saw them double. The differentiator was not which AI tool they used — it was existing organizational health.

- Onboarding is AI’s biggest measurable organizational win. Time-to-10th-PR dropped from roughly 100 days (Q1 2024) to under 50 days (Q4 2025), strongly correlated with AI adoption. Microsoft research links the 10th PR to a developer’s two-year output trajectory.

- The shadow AI economy is large and ungoverned. Only 40% of companies pay for official LLM subscriptions, yet over 90% of employees use personal AI tools for work. This gap is a live data-leakage and liability risk.

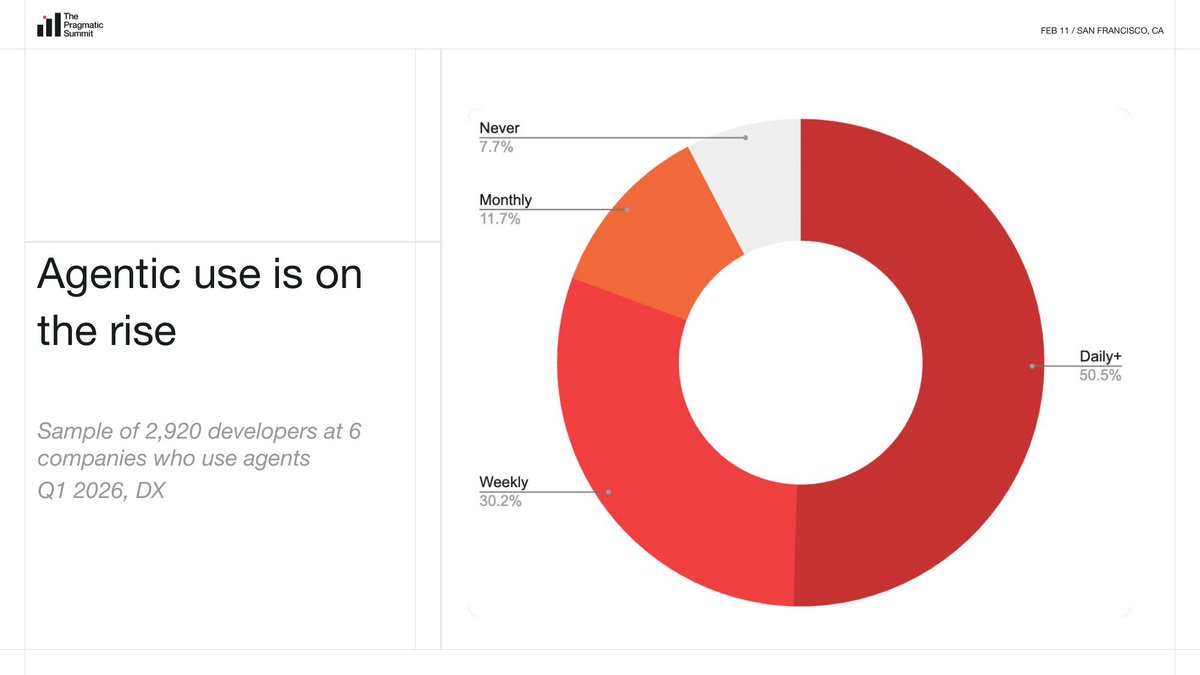

- Agentic workflows are early but accelerating. Among six pioneer companies, 50.5% of surveyed developers use agentic workflows daily. Codex crossed one million desktop downloads in its first week; developers using it submit roughly 60% more PRs weekly.

- Organizational barriers outrank technical ones. MIT research of 152 organizations found the top three barriers to AI impact are change management difficulty, lack of executive support, and poor user experience — none of them technical.

- Kent Beck, Laura Tacho, and Steve Yegge reached a joint conclusion at the Martin Fowler retreat: “We remain skeptical of any technology that claims to improve organizational performance without first addressing human and systems-level constraints.”

NovVista Editorial Comment — Michael Sun

The DX survey data Laura Tacho presented at the Pragmatic Summit is methodologically the strongest dataset in this space right now. A sample of over 121,000 developers across 464 companies, with a three-month collection window ending February 2026, is not a vendor survey with obvious confirmation bias — it is as close to ground truth as the industry currently has for developers using AI coding assistants. The finding that 92.6% of developers use these tools monthly but that weekly time savings have plateaued around four hours is not a product failure. It is a systems diagnosis.

What the original piece gets right, and what most coverage misses, is the amplifier framing. The industry has spent two years debating whether AI will “replace developers.” That debate is already obsolete. The real question — which this data forces us to confront — is whether AI will widen the gap between engineering organizations that are already functioning well and those that are not. The evidence says yes, strongly. A 50% incident reduction in high-performing organizations alongside a doubling of incidents in struggling ones, using identical tooling, is not a fluke. It is a reproducible pattern. If your development culture rewards speed over quality, an AI assistant that writes code faster is not your friend.

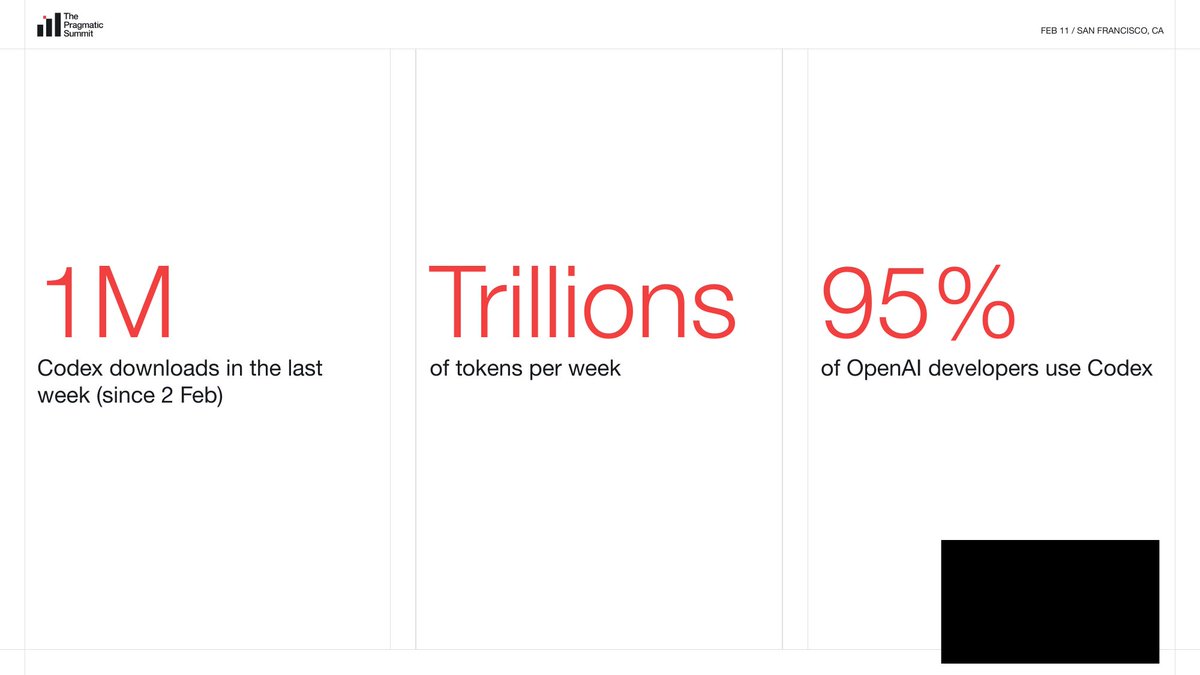

Where the piece is necessarily limited is in timeliness. This data was collected through February 1, 2026. The agentic tooling landscape has shifted even in the weeks since. OpenAI’s Codex desktop crossed one million downloads in its launch week; Anthropic has expanded Claude Code’s autonomous capabilities significantly. The “agentic workflows” section of this report draws from only six pioneer companies and 2,920 developers — Laura is transparent about this — meaning the agentic numbers are directional, not representative. The next quarter’s benchmark report will be the real test of whether agentic adoption genuinely breaks the four-hour ceiling or merely redistributes where time is saved.

The onboarding finding deserves more attention than it typically receives in coverage of this data. Halving the time-to-10th-PR from roughly 100 days to under 50 days is not a marginal efficiency gain — it is a structural change in how organizations absorb new talent. Combined with Microsoft’s research linking 10th-PR performance to two-year output trajectories, this is the closest thing to a documented ROI story that AI in software development currently has. Engineering leaders who are still framing the AI value conversation around “hours saved per week” are measuring the wrong thing. Measure onboarding velocity instead.

The “shadow AI economy” statistic — 90% of developers using personal AI tools despite only 40% of companies paying for official subscriptions — connects directly to a broader pattern NovVista has tracked: developer tool adoption almost always outpaces procurement policy. This is the same dynamic we saw with SaaS in 2012 and with cloud in 2015. The security and compliance implications are real, but the more important signal for leaders is that developers have already voted with their behavior. The organizational question is not whether to allow AI tools but how to govern them without creating friction that drives more shadow usage.

The Martin Fowler retreat conclusion — that AI cannot solve organizational problems that organizations refuse to acknowledge — is the 25-year echo of the Agile Manifesto. The people who wrote that manifesto are now saying the same thing about a different technology: the constraint is human, not technical. That consistency across a quarter century of software development cycles is either deeply sobering or deeply reassuring, depending on how seriously your organization is willing to look at itself. NovVista’s read: the teams that will see outsized returns from AI in 2026 are not the ones with the best tools. They are the ones that have already done the slower, harder work of fixing their development culture, documentation standards, and feedback loops. AI finds the leverage in that foundation; it cannot create the foundation itself.

Introduction

The following content was compiled by NovVista from public X/social media content and is for reading and research reference only.


Remark

For anything involving rules, benefits, or judgments, please defer to Baoyu’s original wording and the latest official information.

Editorial comments

This article, “X Import: Baoyu – 92.6% developers use AI coding assistant every month, but only save 4 hours of time per week”, comes from the X social platform and was written by Baoyu. The original text is complete and dense with key information, especially in its core conclusions and action suggestions, which are readily actionable. For readers, its most direct value is not “knowing a new point of view” but being able to quickly see the conditions, boundaries, and potential costs behind that point of view.

Broken down into verifiable judgments, the content contains at least the following: Laura Tacho is the CTO of DX, co-author of the Core 4 developer productivity framework, with 120,000 developers in hand,…; [Note: Pragmatic Summit is Gergely O…, founder of The Pragmatic Engineer. Among these judgments, the conclusions are the easiest to spread, but what really determines their usefulness is whether the premises hold, whether the sample is sufficient, and whether the time window matches.

We recommend that readers who quote this type of information first check the data source, the release date, and any differences in platform environment, to avoid mistaking “scenario-based experience” for “universal rules.” From an industry perspective, content like this usually has a short-term steering effect on product strategy, operational rhythm, and resource investment, especially on topics such as AI, development tools, growth, and commercialization. From an editorial perspective, we care more about whether it can withstand later fact-checking: first, whether the results can be reproduced; second, whether the method transfers; and third, whether the cost is affordable. The source is x.com, and readers are advised to treat it as one input for decision-making, not the only basis.

Finally, a practical suggestion: if you are ready to act on this, run a small-scale verification first, then expand investment gradually based on feedback. If the original article involves revenue, policy, compliance, or platform rules, refer to the latest official announcement and keep a rollback plan. Reprinting improves the efficiency of information circulation, but the real value of content is formed in secondary judgment and local practice. On this principle, the editorial comments accompanying this article will continue to emphasize verifiability, boundary awareness, and risk control, to help you turn “visible information” into actionable understanding.

Laura Tacho is CTO of DX and co-author of the Core 4 Developer Productivity Framework, with real data from 120,000 developers and more than 450 companies. On February 11, she gave a 30-minute keynote speech at the Pragmatic Summit in San Francisco, using the latest industry benchmark data to answer a question: Has AI changed organizations for the better?

[Note: Pragmatic Summit is the first in-person summit held by Gergely Orosz, founder of The Pragmatic Engineer, in February 2026, with approximately 500 engineering leaders participating. DX is a developer experience platform company that helps enterprises measure and improve engineering efficiency.]


Original video link: https://www.youtube.com/watch?v=LOHgRw43fFk

Quick takeaways:

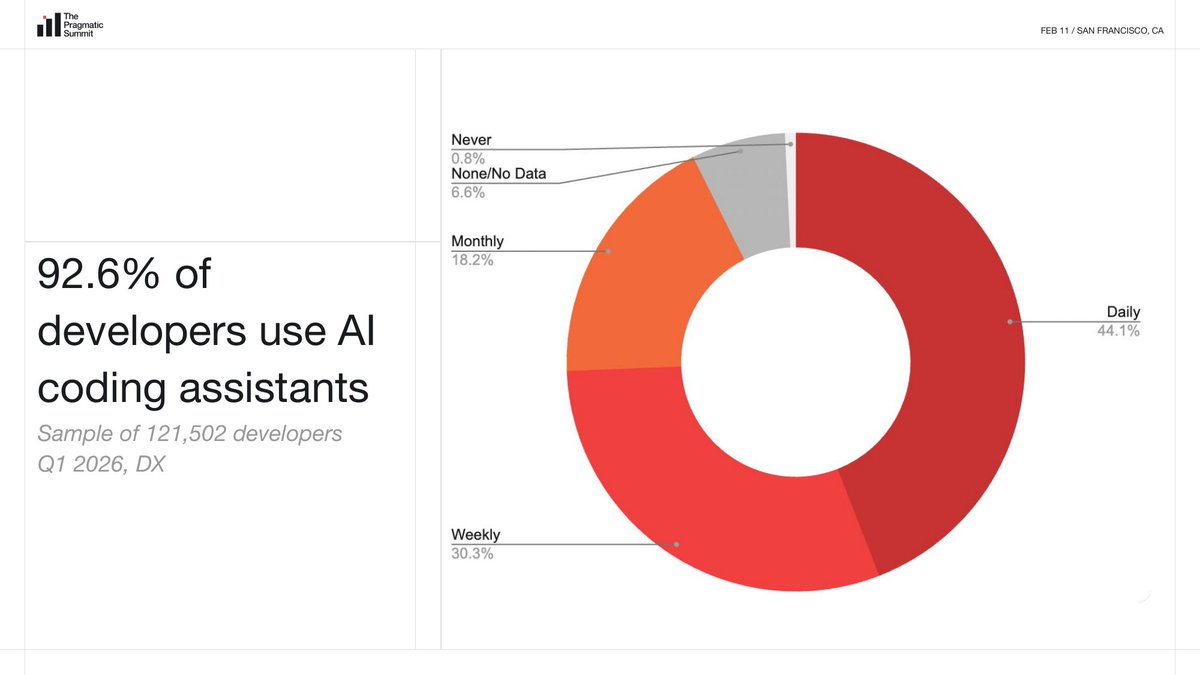

- A sample of 121,000 developers shows that 92.6% use AI coding assistants monthly, but weekly time savings have stabilized at about 4 hours, with no growth for several consecutive quarters

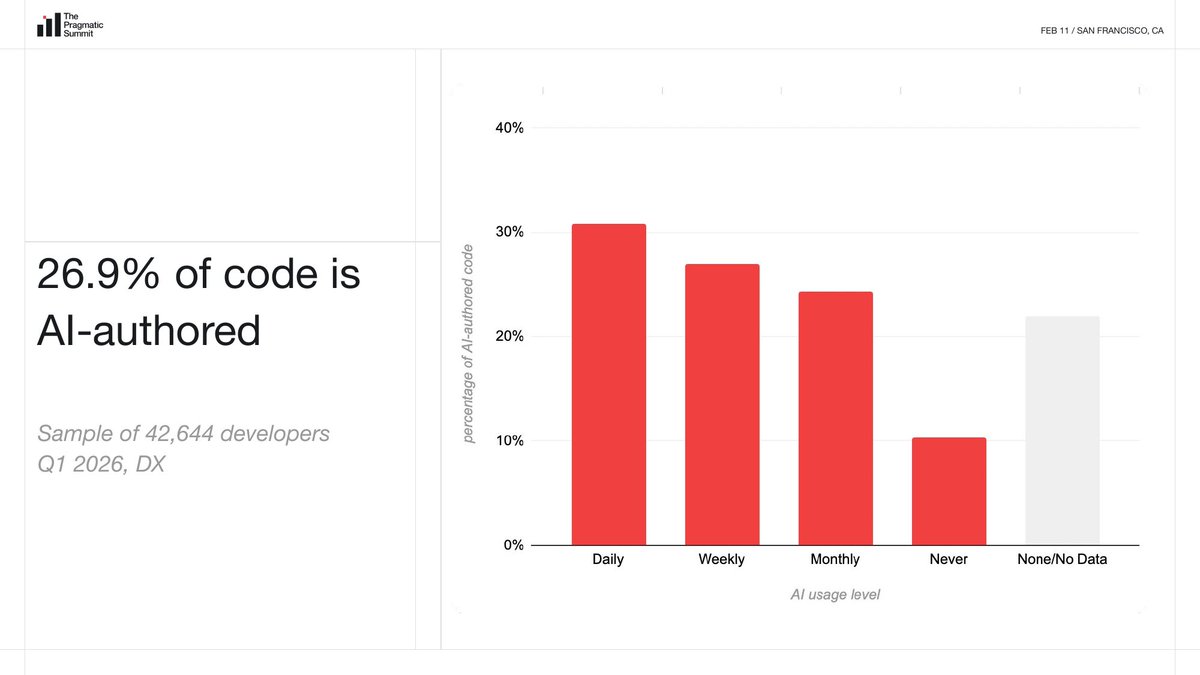

- The proportion of code written by AI and merged into the production environment reached 26.9%, a significant increase from 22% in the previous quarter, and daily active users exceeded 30%

- AI is the amplifier: Some companies have seen a 50% reduction in client incidents, others have doubled their incidents. Same tools, different results

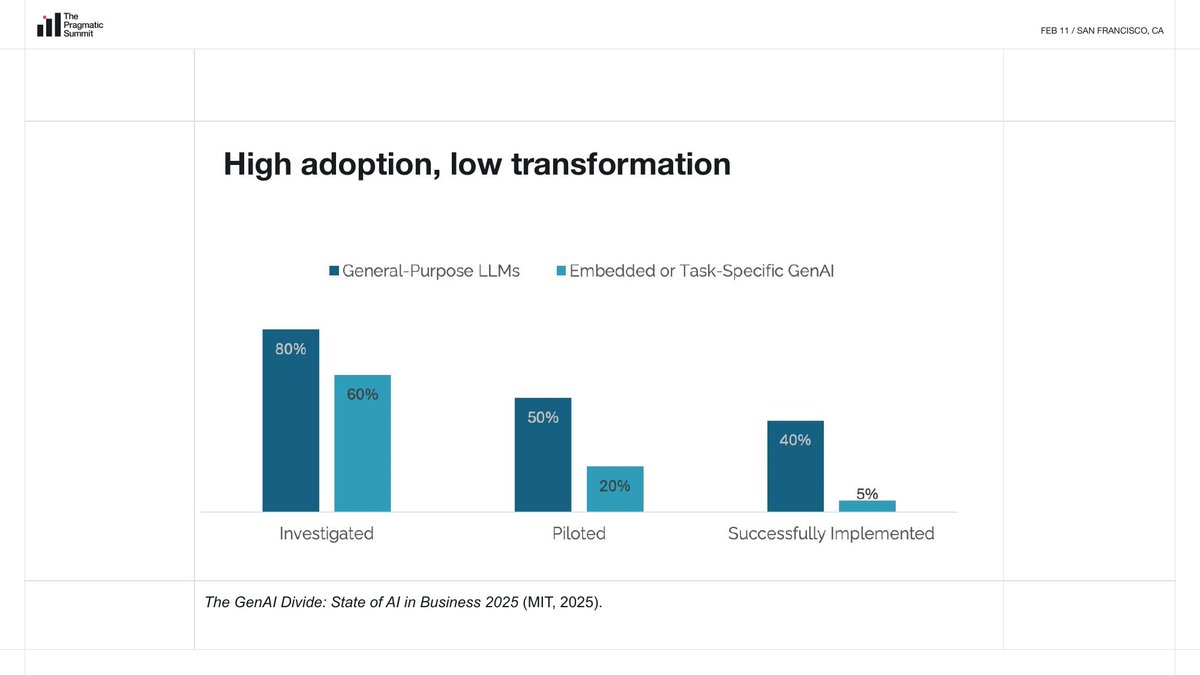

- MIT study conclusion: “High adoption rate, low conversion rate.” 92% of developers are using AI, but real change at the organizational level rarely happens

- 95% of enterprise AI projects fail to produce measurable financial impact (MIT research data)

- Halving the onboarding time may be the most far-reaching effect of AI so far: the performance of the 10th PR can predict the output of the next two years, and AI has cut the time to reach this milestone in half

- Consensus among agents will be a major issue that needs to be solved in 2026

- The core judgment of Martin Fowler’s closed-door seminar: AI will not solve organizational system problems unless you first admit that the system problems exist

【1】The latest data from 120,000 developers: 92% use AI, but time savings are stuck at 4 hours

Laura presents a set of industry benchmark data that has been made public for the first time. The sample size is 121,502 developers, covering 464 companies, and the data collection period is from November 2025 to February 1, 2026.

Let’s start with the adoption rate: 92.6% of developers use an AI coding assistant at least once a month. Of these, 44.1% use one daily, 30.3% weekly, and 18.2% monthly; only 0.8% never use one. Most developers take “AI coding assistant” to mean tools such as Cursor, Codex, Copilot, and Claude, not necessarily ChatGPT.

Next, the time savings. In a sample of 95,301 developers, self-reported savings averaged approximately 4.08 hours per week thanks to AI tools. Broken down by frequency of use, daily users saved the most (about 4.8 hours/week), weekly users about 4 hours, and monthly users about 3.6 hours. The figure is similar to Q2 2025 and close to Q4’s 3.67 hours; it has been hovering around 4 hours. A study published by Google in 2025 claimed about a 10% productivity improvement, and DX’s data broadly confirms this magnitude.
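The link between roughly four hours a week and a roughly 10% improvement is simple arithmetic. A minimal sketch, assuming a 40-hour work week; the talk summary does not state the baseline, so `WORK_WEEK_HOURS` is an assumption:

```python
# Self-reported weekly savings from the DX sample, in hours.
hours_saved = {
    "overall": 4.08,
    "daily users": 4.8,
    "weekly users": 4.0,
    "monthly users": 3.6,
}

# Assumption: a 40-hour work week; the source does not state the baseline.
WORK_WEEK_HOURS = 40


def share_of_week(hours: float, week: float = WORK_WEEK_HOURS) -> float:
    """Return saved hours as a percentage of the work week."""
    return hours / week * 100


for group, hours in hours_saved.items():
    print(f"{group}: {hours} h/week is about {share_of_week(hours):.1f}% of the week")
```

With a different baseline (for example a 45-hour week) the percentages shift slightly, but the order of magnitude stays the same.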

But this number has not grown significantly as the tools have iterated. The models are getting stronger, the tools are getting better, and the time saved per week is stuck around 4 hours. Laura didn’t explain why, but the data itself is a signal: the room for optimization from accelerating coding tasks alone may be approaching its ceiling.

The fastest-changing metric is the proportion of AI-authored code. In a sample of 42,644 developers, 26.9% of production code was written by AI, meaning it passed code review and entered production largely without significant modification. The figure was 22% last quarter, so it is up nearly 5 percentage points quarter-on-quarter. The proportion of developers who use AI every day has exceeded 30%.
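A quarter-on-quarter move like this can be read two ways: as a change in percentage points or as relative growth. A small sketch of both, using the rounded figures quoted above:

```python
def point_change(previous: float, current: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return current - previous


def relative_change(previous: float, current: float) -> float:
    """Growth of the current value relative to the previous one, in percent."""
    return (current - previous) / previous * 100


# AI-authored share of merged production code, in percent.
previous_share, current_share = 22.0, 26.9

print(f"{point_change(previous_share, current_share):.1f} percentage points")
print(f"{relative_change(previous_share, current_share):.1f}% relative growth")
```

The two readings differ a lot, so it is worth stating which one a headline number refers to.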

【2】AI cuts the onboarding time for new employees in half, and the effect lasts for two years

Laura has always had an intuition: AI will be a useful tool for onboarding, helping newcomers gain access to project information earlier. She verified this intuition with data.

Among a sample of 35,594 developers who joined after January 2024, onboarding time was cut in half from Q1 2024 to Q4 2025. The metric is the number of days to reach the 10th merged PR, a commonly recognized onboarding milestone in the industry. On the chart, it took approximately 100 days in Q1 2024 and dropped to less than 50 days by Q4 2025. Placed next to the growth curve of AI usage, the two trends are highly consistent.

[Note: A PR (Pull Request) is the standard way for developers to submit code changes to a project. The 10th PR usually means that the developer has independently completed multiple meaningful code contributions and is a common indicator of “really getting started.”]

Microsoft has studied this. Brian Houck, co-author of the SPACE framework, found that a developer’s performance level at the 10th PR can predict that person’s output pattern over the next two years. It is not as simple as “people who ramp up quickly stay quick”; the 10th PR itself is a predictive signal.

[Note: The SPACE framework is a developer productivity measurement framework proposed by Nicole Forsgren and others in 2021. It covers five dimensions: satisfaction, performance, activity, communication and collaboration, and efficiency. It is widely adopted in the industry.]

The logical chain is as follows: AI helps newcomers achieve the 10th PR faster → the 10th PR predicts performance in the next two years → the effect of onboarding acceleration may continue to amplify within two years. If this corollary holds true, onboarding acceleration may be the single greatest impact of AI on organizations.

Laura added that this applies not only to new hires at the company, but also to engineers switching projects and even non-engineers. AI has lowered the cognitive threshold for understanding the code base. In the past, it would take a few weeks for a new person to join a large project just to understand the existing code structure. Now, they can directly ask AI.

【3】The average is deceiving: some companies halved their incidents, while others doubled them

After showing the industry average, Laura changed tack:

The average does not equal a typical experience, nor does it equal what will happen to you.

(“Average does not mean typical, it does not mean what is going to happen to you.”)


She used the analogy of the Big Bang. AI entering organizations is like a big explosion, releasing a huge amount of energy, but the shock wave pushes different organizations in completely different directions. Organizational performance is multidimensional, and AI, as an accelerator and amplifier, is making the differences in these dimensions wider and wider.

The biggest gap is in the quality dimension. In a sample of 67,084 developers, some companies saw client incidents double, while others saw a 50% decrease in incidents during the same time period.

Laura said:

An organization that was already dysfunctional is now even more dysfunctional. And it’s running poorly at faster speeds.

(“Organizations that were dysfunctional already, now they’re more dysfunctional. They’re dysfunctional and dysfunctional faster.”)

For companies whose existing systems are healthy, AI amplifies their advantages, allowing them to make changes faster, with higher quality, and with greater confidence. For companies that already have problems, AI amplifies the problems, creates more incidents, and pushes code faster to places where it shouldn’t go.

AI is not a cure; it is an amplifier. The good get better, and the bad get worse.

【4】92% are using it, but the organizational conversion rate is very low

Laura cited the MIT study “The GenAI Divide: State of AI in Business 2025” released in July 2025. The study, which surveyed 152 organizations, concluded that the industry is currently in a “high adoption, low conversion” state.

[Note: This study, published by MIT’s Project NANDA team, is based on 52 executive interviews, 153 executive surveys, and an analysis of more than 300 public AI projects.]

The chart on the slide shows that 80% of organizations have investigated general-purpose large language models, but only 50% have reached the pilot stage and only 40% have successfully implemented them. Embedded or task-specific GenAI fares even worse, with an extremely steep funnel from 60% investigating to 20% piloting to 5% successfully implemented. Organizations drop off at every step of the way, from pilot to production to profit.
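To see how steep each funnel is, it helps to convert the stage percentages into step-by-step retention. A minimal sketch using only the figures quoted above; the stage labels and the `step_conversions` helper are illustrative names, not taken from the study:

```python
# Percent of organizations at each stage, as quoted from the slide.
funnels = {
    "general-purpose LLMs": [("investigated", 80), ("piloted", 50), ("implemented", 40)],
    "embedded or task-specific GenAI": [("investigated", 60), ("piloted", 20), ("implemented", 5)],
}


def step_conversions(stages):
    """Return (from_stage, to_stage, percent retained) for each adjacent pair."""
    return [
        (a_name, b_name, b_value / a_value * 100)
        for (a_name, a_value), (b_name, b_value) in zip(stages, stages[1:])
    ]


for name, stages in funnels.items():
    print(name)
    for src, dst, pct in step_conversions(stages):
        print(f"  {src} -> {dst}: {pct:.1f}% carried forward")
    overall = stages[-1][1] / stages[0][1] * 100
    print(f"  end to end: {overall:.1f}%")
```

Read this way, the task-specific funnel loses most organizations at both steps, while the general-purpose funnel loses them mainly between investigation and pilot.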

There is a pattern behind this that Laura has observed: organizations that have given up on cloud transformation, organizations that have given up on agile transformation, are now giving up on AI transformation. Transformation requires organizations to confront their own problems, which is inherently uncomfortable.

Adoption does not equal impact.

(“Adoption doesn’t mean impact.”)

Laura’s slide quoted a line from the MIT study: “These tools mainly improve personal productivity, not profit and loss statement (P&L) performance.” When AI is only used to speed up one person typing code at a computer, the ceiling for productivity improvement is very low. If you want organizational-level results, you must think about AI applications at the organizational level, not just at the level of coding tasks.

The MIT study also revealed a mismatch: More than 50% of AI budgets are invested in sales and marketing tools, but the highest ROI actually comes from back-office automation, such as cutting outsourcing and reducing agency fees. Buying professional vendor tools has a success rate of about 67%, while building your own in-house has only a one-third success rate.

There’s also a rapidly expanding “shadow AI economy”: only 40% of companies purchase official LLM subscriptions, but at more than 90% of companies, employees regularly use personal AI tools at work.


The slide lists three steps: “1. Experiment with AI → 2. ??? → 3. Profit!”. The “???” in the middle is the really hard part, and it is called organizational change management.

【5】Agentic workflow is expanding the boundaries of possibilities


Laura uses the expansion of the universe as a metaphor for the rise of agentic workflows: Our universe is getting bigger, the hype is getting bigger, but so are the possibilities.

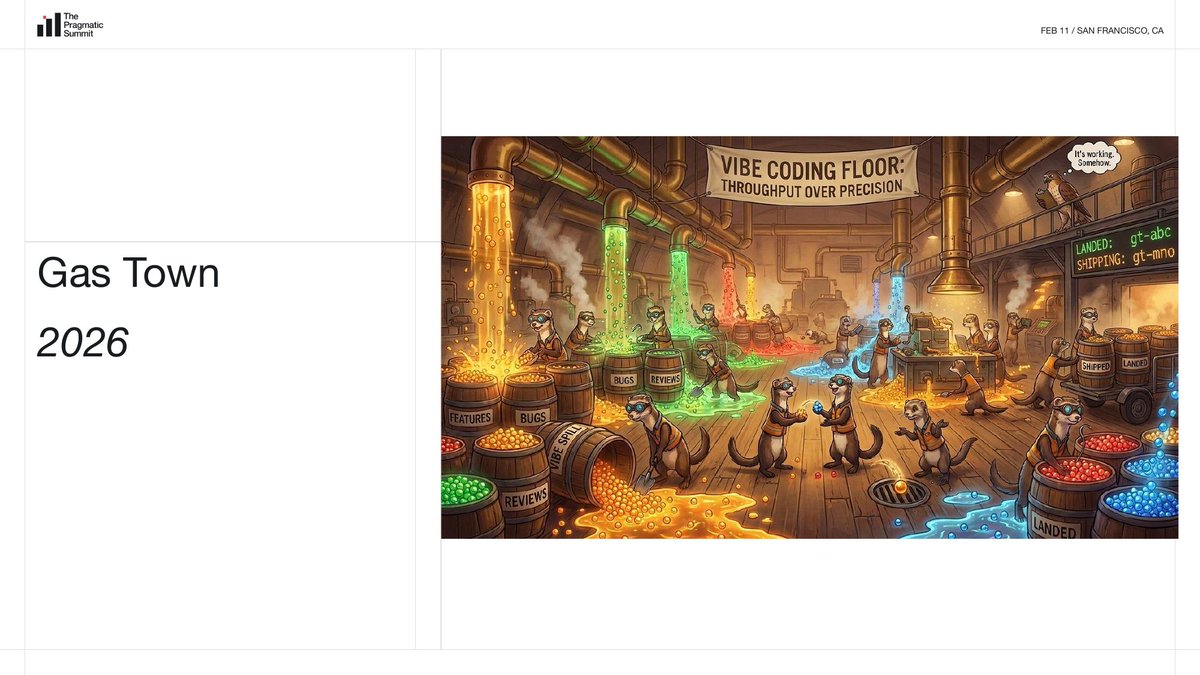

She contrasts the fantasy of a lunar colony in 1969 with the Gas Town of 2026.

Gas Town is an experimental AI programming tool. The slide illustration is labeled “Vibe Coding Floor: Throughput Over Precision.” In it, a group of otters carry buckets labeled “Features”, “Bugs”, and “Reviews” through a chaotic factory.


Laura described Gas Town as “completely crazy”, and the slide listed several usage warnings: “If you can’t run at least 5 Claude Codes at the same time, don’t use Gas Town”, “If you care about money, don’t use Gas Town”, “Don’t use Gas Town”.

[Note: Gas Town is an experimental AI programming agent tool developed by Steve Yegge. It runs multiple AI coding agents in parallel to complete tasks at the same time. It is known for its radical autonomy and extremely high token consumption.]


These tools make building more fun, but Laura is very clear: using AI to build a nail polish matching app for a nail salon is completely different from using AI to move revenue at a multinational bank. She compared it to the moon landing: we don’t live on the moon, but space exploration brought sunglasses, barcodes, and quartz watches. Experimentation and exploration are valuable in themselves, and agents are likewise expanding the boundaries of what we can do, how we do it, and for whom.


The specific data on agentic usage comes from a smaller sample: 2,920 developers at six pioneering companies. Of these developers, 50.5% use agentic workflows daily, 30.2% weekly, and 11.7% monthly. Laura cautioned that these companies are at the forefront of the industry, have deployed telemetry for agentic workflows, and do not represent the industry average.

Regarding Codex, Laura shared a set of figures: the Codex desktop app exceeded 1 million downloads within a week of its release on February 2, and user growth increased by 60% last week. OpenAI processes trillions of tokens internally every week, and 95% of OpenAI developers use Codex. GPT-5.3-Codex was also released last Thursday (around February 6). Developers using Codex submit approximately 60% more PRs per week than developers using other AI tools.

[Note: The Codex desktop application is a Mac AI coding tool launched by OpenAI in February 2026. It can run multiple AI agents at the same time to process tasks in parallel. GPT-5.3-Codex is its underlying model. These figures come from OpenAI’s official announcement, and OpenAI is an interested party.]

Laura is cautious about this number: it’s one data point, not the only data point, but it illustrates that agentic workflows have a high ceiling.

【6】Business practice: Haven Medical, Cisco, JP Morgan

Laura introduced a company not in the AI industry: Haven Headache and Migraine Center, a San Francisco-based virtual telemedicine startup focused on headaches and migraines. The question at the heart of Haven is simple: Can the headache be solved over Zoom? It turns out it can.

In the medical field, Laura highlighted a key distinction: whether code generated with AI is “durable code” or “disposable code”. As a small startup in the medical space, Haven does both.

On the development side, Haven used Ralph loops to quickly prototype a new patient portal. The slide shows the complete workflow chain: (Linear + Figma) → PRD → JSON → Claude Code. The output is not throwaway code but high-quality prototypes, with faster iterations and clearer documentation.

At the clinical level, Haven trained a HIPAA-compliant model (HIPAA is the U.S. healthcare privacy regulation) on hundreds of thousands of historical patient messages. The model classifies and routes messages and automates the resulting workflows, for example deciding whether a medication refill or a follow-up appointment is needed. The result was a customer satisfaction rate 3 times the industry average and a substantial reduction in patient headache days and severity.


Large companies are also moving. Cisco has 18,000 engineers using Codex every day, primarily for complex code migrations and code reviews, reducing code review time by 50%. JP Morgan Chase published a paper on MAFA (Multi-Agent Framework for Annotation): multiple specialized agents independently annotate customer interaction data (intents, entities, FAQ categories), and then a “judge agent” aggregates and re-ranks the results through consensus and confidence scoring.

Laura predicts that consensus among agents will be a major technical issue that needs to be solved in 2026. This is in the same vein as the consensus problem in distributed systems, but in the context of AI Agent it is a completely new challenge: how to reach consensus when multiple agents each have different “judgments”?
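One simple way to picture the aggregation step is confidence-weighted voting. The sketch below is an illustration only and is not the method from the JP Morgan paper: the `Annotation` type, the `judge` function, the agent names, the labels, and the scoring rule are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    agent: str         # hypothetical agent name
    label: str         # e.g. an intent or FAQ category
    confidence: float  # the agent's own confidence, in [0, 1]


def judge(annotations: list[Annotation]) -> list[tuple[str, float]]:
    """Rank labels by their share of the total confidence across agents."""
    totals: dict[str, float] = defaultdict(float)
    for ann in annotations:
        totals[ann.label] += ann.confidence
    grand_total = sum(totals.values())
    if grand_total == 0:
        return []  # no usable votes, so no ranking
    ranked = sorted(totals.items(), key=lambda item: item[1], reverse=True)
    return [(label, score / grand_total) for label, score in ranked]


# Three hypothetical agents disagree on the label for one customer message.
votes = [
    Annotation("intent_agent", "card_dispute", 0.9),
    Annotation("entity_agent", "card_dispute", 0.6),
    Annotation("faq_agent", "lost_card", 0.7),
]
for label, share in judge(votes):
    print(f"{label}: {share:.2f}")
```

Even this toy version shows where the hard questions sit: whether agents' confidence scores are comparable, and what to do when the top two labels are close.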

【7】Martin Fowler Closed Seminar: “AI Does Not Solve Organizational Problems”

Laura was invited to attend the **“Future of Software Development” closed workshop** organized by Martin Fowler and Thoughtworks on February 1-3, 2026 in Deer Valley, Utah. The location carries history: the Agile Manifesto was born at Snowbird Ski Resort in Utah in February 2001. Twenty-five years later, a group returned to the mountains of the same state to discuss software development in the age of AI. Participants included Kent Beck (founder of Extreme Programming), Steve Yegge (developer of Gas Town), Gergely Orosz, and others.

[Note: Martin Fowler is chief scientist at Thoughtworks, author of “Refactoring”, and one of the 17 signatories of the 2001 Agile Manifesto. Kent Beck is the founder of Extreme Programming (XP) and a signatory of the Agile Manifesto.]

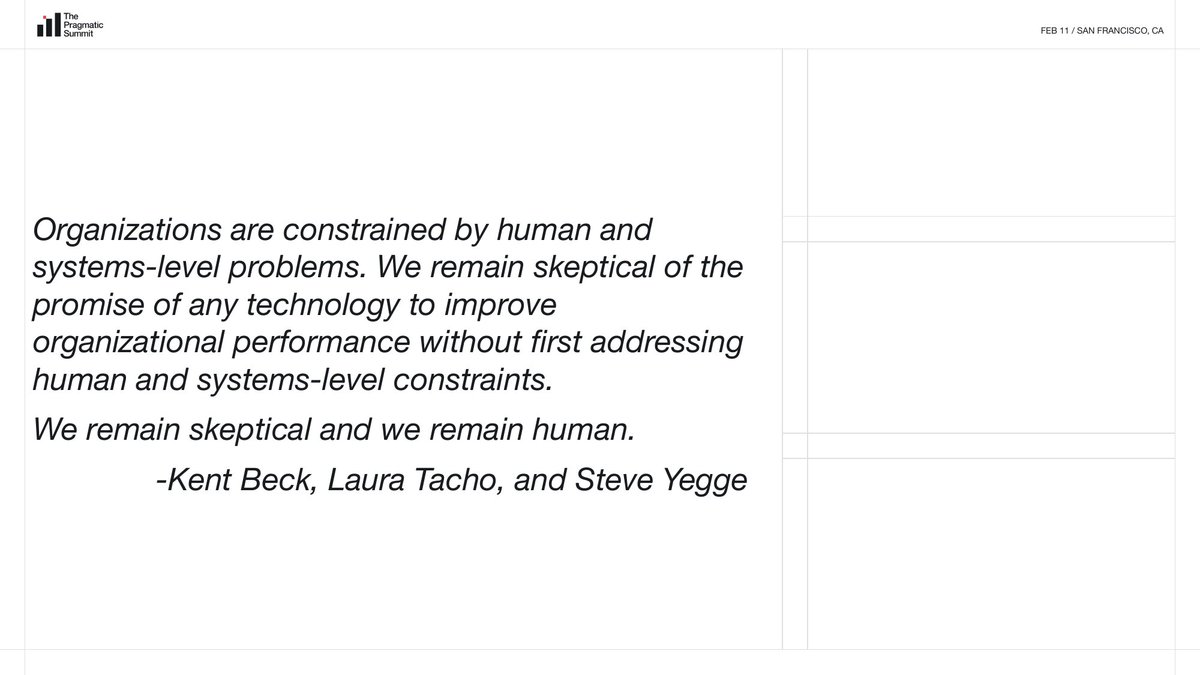

The day-and-a-half discussion focused on one topic: how to use agents responsibly, ethically, and sustainably. There was a lot of experimentation (Steve Yegge was playing with Gas Town) and the atmosphere was lively. But the final conclusion was surprisingly sober:

AI does not solve an organization’s systemic problems. AI only works when you proactively apply it to systemic problems. The premise is that you must first admit the existence of system problems.

Kent Beck has seen every “silver bullet” promise in the software industry over the past 30 years, Steve Yegge has experienced the realities of large-scale engineering organizations at Google and Amazon, and Laura has real-world data at her fingertips across 450 companies. Three people saw the same thing from different angles.

One slide shows a photo of Laura and a participant next to a discussion board covered with sticky notes on topics such as productivity measurement and the future of programming languages. Laura said that she, Kent Beck, and Steve Yegge chatted for a while in the corridor between sessions and distilled this statement:

Organizations are constrained by human and systems-level problems. We remain skeptical of any technology that promises to improve organizational performance without first addressing human and systems-level constraints. We remain skeptical, and we remain human.

(“Organizations are constrained by human and systems-level problems. We remain skeptical of the promise of any technology to improve organizational performance without first addressing human and systems-level constraints. We remain skeptical and we remain human.” — Kent Beck, Laura Tacho, and Steve Yegge)

Laura finished with a space analogy. The slide showed a cartoon of a traffic jam on Mars, with the surface littered with garbage, abandoned satellites, and long lines of cars, and the Earth in the distant sky.

If we don’t solve the problems at the system level, we’ll just take them into space with us.

(“If we don’t address the systems level problems, we will just take them to space with us.”)

Martin Fowler was asked: Is it time to write a new manifesto? Fowler’s answer: No, it’s too soon. People are still experimenting with ideas. He said he had little interest in manifestos as such, and that most manifestos are wisely ignored by most people; the Agile Manifesto just happened to be an exception.

【8】Organizations that win AI competitions do four things right

Based on workshops and extensive data analysis, Laura identified four successful patterns that she saw over and over again.

First, goals and measurement. “Spray and pray”, meaning licensing AI tools to every developer and hoping for good things to happen, doesn’t work. Laura says she has plenty of evidence of that. Winning organizations aim AI at a specific problem, set measurable goals, and then track progress.

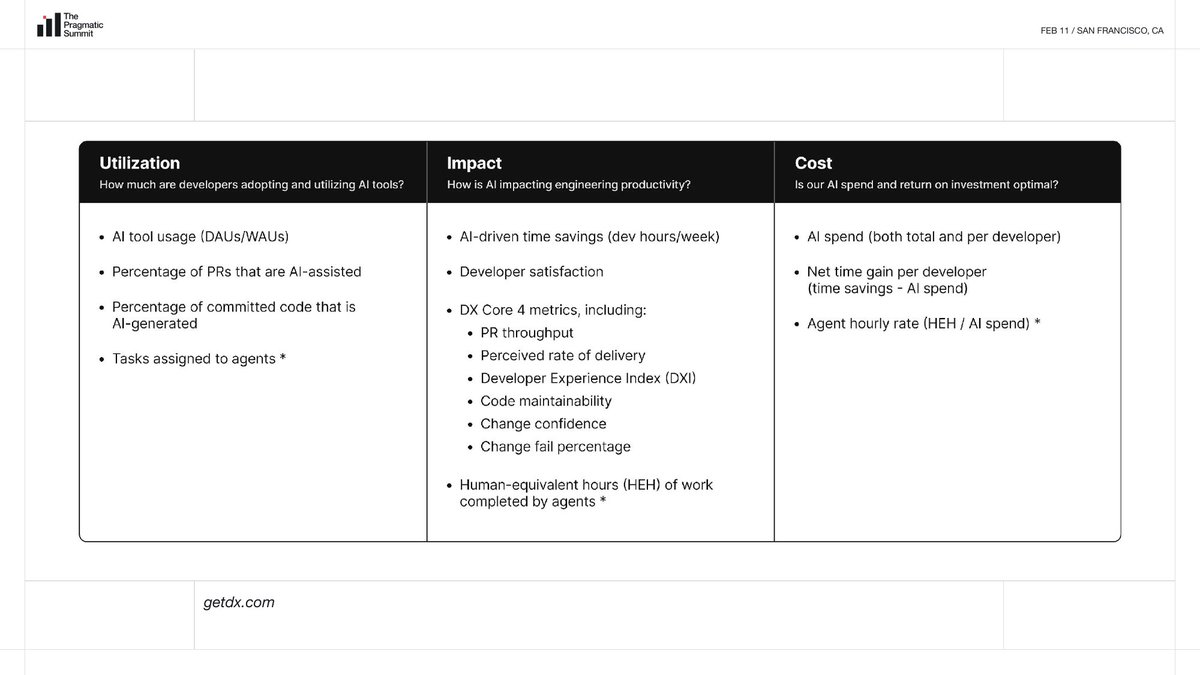

To this end, she and DX CEO Abi Noda jointly released an AI measurement framework:

- The first layer, “utilization”: daily and weekly active use of AI tools, share of AI-assisted PRs, share of AI-generated code, and number of tasks assigned to agents

- The second layer, “impact”: time saved by AI, developer satisfaction, DX Core 4 metrics (PR throughput, perceived rate of delivery, developer experience index, code maintainability, change confidence, change failure rate), and human-equivalent hours (HEH) completed by agents

- The third layer, “cost”: total and per-developer AI expenditure, net time benefit per developer (time saved minus time spent on AI), and agent hourly rate (AI expenditure divided by HEH)
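The cost layer is simple arithmetic, so a small sketch can make it concrete. The example below is illustrative only: the `CostInputs` class, its field names, and the sample numbers are hypothetical and are not part of the published framework. It also assumes the agent hourly rate is read as AI spend per human-equivalent hour, so that it can be compared with a human hourly cost.

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    """Hypothetical inputs for one reporting period (names are illustrative)."""
    developers: int                   # developers with AI tool access
    ai_spend: float                   # total AI expenditure, in currency units
    hours_saved_per_dev: float        # time saved by AI per developer
    hours_spent_on_ai_per_dev: float  # time spent prompting, reviewing, fixing AI output
    agent_heh: float                  # human-equivalent hours completed by agents


def spend_per_developer(c: CostInputs) -> float:
    """Per-developer AI expenditure."""
    return c.ai_spend / c.developers


def net_time_benefit_per_developer(c: CostInputs) -> float:
    """Time saved minus time spent on AI, per developer."""
    return c.hours_saved_per_dev - c.hours_spent_on_ai_per_dev


def agent_hourly_rate(c: CostInputs) -> float:
    """Assumed reading: AI spend per human-equivalent hour of agent work."""
    if c.agent_heh <= 0:
        raise ValueError("agent_heh must be positive to compute an hourly rate")
    return c.ai_spend / c.agent_heh


if __name__ == "__main__":
    # Made-up sample: 50 developers, four weeks at 4 hours saved per week.
    sample = CostInputs(
        developers=50,
        ai_spend=10_000.0,
        hours_saved_per_dev=16.0,
        hours_spent_on_ai_per_dev=6.0,
        agent_heh=400.0,
    )
    print("Spend per developer:", spend_per_developer(sample))
    print("Net time benefit (hours):", net_time_benefit_per_developer(sample))
    print("Agent hourly rate:", agent_hourly_rate(sample))
```

Tracking these three numbers per period, rather than once, is what lets a team see whether the net benefit is growing or whether the time spent on AI is quietly eating the savings.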

She also recommends two AI readiness models. One is the DORA AI Capabilities Model, which covers seven capabilities: a clearly communicated AI stance, working in small batches, a healthy data ecosystem, a user-centric focus, AI-accessible internal data, a high-quality internal platform, and strong version control practices. The other is Thoughtworks’ FOREST framework, which covers six dimensions: infrastructure, operating model, data readiness, trusted AI, human-machine collaboration experience, and strategic alignment.

[Note: DORA (DevOps Research and Assessment) is a research team under Google Cloud, known for its annual DevOps report.]

Second, developer experience is more important than ever.

Laura said:

Take the “developer experience” work you were going to pitch to leadership, rename it “agent experience,” and you’ll get the budget.

(“Just call it agent experience and you’ll get money for it.”)

Good feedback loops, clear service definitions, complete documentation, and fast CI (continuous integration): these fundamentals of developer experience have been praised for decades but have never managed to win budget. In the AI era, the same work goes by a different name, and management is suddenly willing to invest, because good testing practices and good documentation are exactly what agentic workflows need in order to run.

Laura said:

We’ve been clamoring for developer experience for decades, begging for pennies. Organizations wouldn’t pay for it for human engineers, but they are fine paying for it for robot engineers. That is a bit chilling, but strategically, seize the opportunity.

(“We have been screaming about this for decades… It is disheartening that we didn’t want to spend the money when it came to human engineers but when it comes to robot engineers we’re okay with it.”)

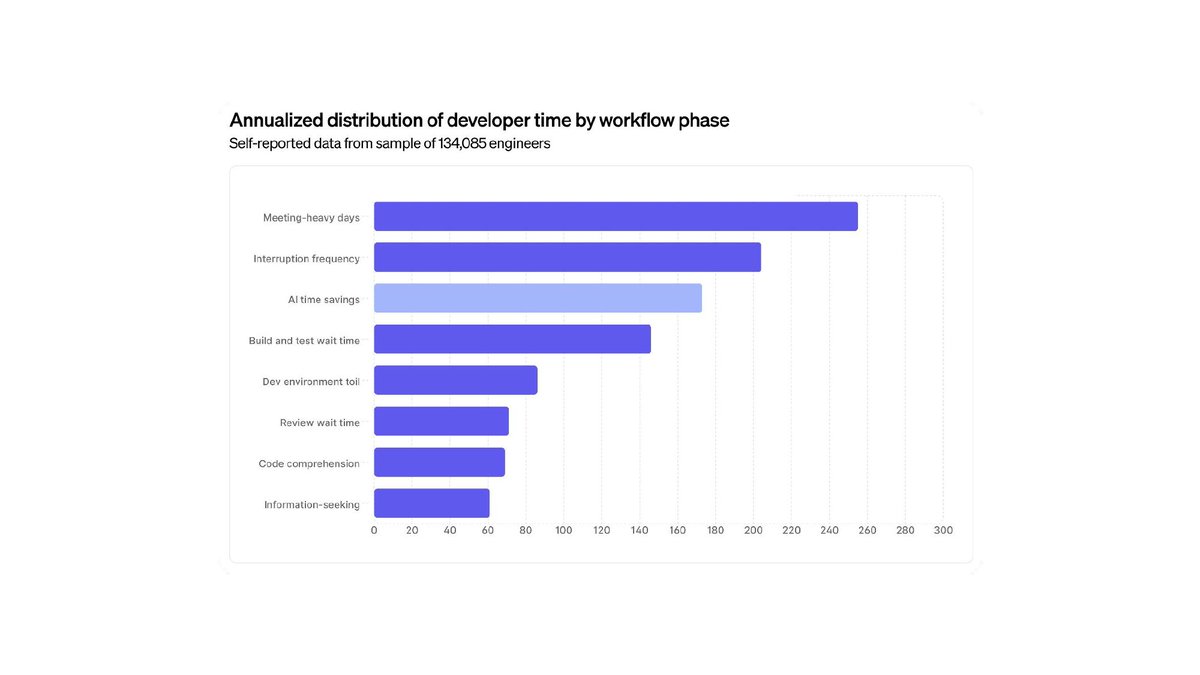

The slide shows a chart from a survey of 134,085 engineers that ranks workflow factors by their annualized impact on developer time. At the top of the list were “meeting-intensive days” and “frequency of interruptions,” far ahead of “AI time savings.” Build and test wait times, development environment complexity, review wait times, code understanding, and information search ranked lower.

The 4 hours per week saved by AI cannot make up for a poor meeting culture and frequent interruptions. Successful organizations point AI not at faster coding but at the systemic problems themselves, for example by using AI to reduce meetings, shorten CI wait times, and cut duplicated work in development environments.

Third, treat AI as an organizational problem rather than an individual problem.

If you want organizational-level results such as revenue, P&L, or time to market, you have to think about AI applications at the organizational level. AI requires workflows that span the entire value stream.

MIT research ranks the barriers to AI adoption at the organizational level:

- Change management difficulties

- Lack of executive support

- Poor user experience

- Model output quality concerns

- Reluctance to adopt new tools

The first three are not technical issues. Laura called out one phenomenon in particular: executive teams talk about “getting on AI” but have never opened Windsurf, Claude Code, or Codex themselves.

Fourth, experiment by solving real customer problems.

Expeditions to Mars are cool, but you can’t send your entire organization there. The cost is too high, it interferes too much with the core business, and it does not serve customers. Experimentation should continue, but experiments can be aimed precisely at actual customer problems. This is the path to organizational-level results.

[Final thoughts]

Laura’s talk reflects the judgment of someone immersed every day in data from 120,000 developers: adoption of AI is no longer the problem; the gap between adoption and impact is. Companies that doubled their incidents and companies that halved them used the same tools. The difference is in the organization itself.

Several signals deserve continued attention. The proportion of AI-authored code is rising rapidly, from 22% to 27%, and daily active users exceed 30%. This curve is still accelerating. The time-savings metric is stuck at 4 hours, suggesting that the room for acceleration at the coding level alone may have reached its limit. Agentic workflows are the next growth area, but the current data comes from pioneers, and it is still unknown whether mainstream enterprises can replicate it.

In the context of the 25th anniversary of the Agile Manifesto, Laura, Kent Beck, and Martin Fowler reached the same conclusion as they did 25 years ago: technology cannot replace organizational change. AI is no exception.

Laura ended her talk with the words of Carl Sagan: “Somewhere, something incredible is waiting to be discovered.”

Then she added four reminders:

Stay grounded, stay skeptical, stay human, stay pragmatic.

Full speech video: https://www.youtube.com/watch?v=LOHgRw43fFk

Slides: https://docs.google.com/presentation/d/1WQMMBfHDBg5xeUWmQPQ86tlBatk_OEV0EsbVDf9sHPo/edit?usp=sharing

Source

Author: Baoyu

Release time: February 26, 2026 04:24

Source: Original post link

Related Reading on NovVista

- Claude Code vs Cursor vs Copilot: A Practical Comparison for Working Developers — Side-by-side evaluation of the three dominant AI coding assistants, with real workflow benchmarks and use-case recommendations.

- AI Agents in 2026: What Is Actually Working and What Is Still Hype — Cuts through the agentic workflow noise with concrete production examples, failure patterns, and organizational readiness criteria.

- How to Evaluate AI Developer Tools Without Getting Sold a Demo — A structured framework for assessing AI tooling against your team’s actual workflow, not vendor benchmarks.

Editorial Comment

The latest data from the Pragmatic Summit confirms what many of us have suspected: we have reached the "local maximum" of the AI coding assistant. When 92% of your workforce is using a tool but the needle on productivity hasn't moved past the four-hour mark in a year, you aren't looking at a tool problem—you’re looking at a systems problem.

As a senior editor watching this space, I find the most telling metric isn't the 26.9% of code now authored by AI. It’s the "Dysfunction Amplifier" effect. We’ve spent years telling ourselves that better tools would fix bad output. Instead, Laura Tacho’s data shows that AI is essentially a high-pressure hose. If you point it at a well-manicured garden (a team with solid CI/CD, clear documentation, and rigorous peer review), everything grows faster. If you point it at a pile of trash (a team with technical debt and "vibe-based" deployments), you just get a bigger, faster-moving mess. The fact that some companies saw production incidents double while using the exact same tools as those who saw a 50% decrease is the ultimate proof that "AI adoption" is not a strategy; it’s a magnifying glass.

The real ROI is hiding in the onboarding data. Halving the time to the "10th PR" milestone is a massive win, not just for the immediate velocity, but for the long-term health of the engineering org. AI has effectively lowered the "cognitive tax" of entering a new codebase. If a junior dev can ask an agent to explain a legacy service instead of hunting down a busy senior engineer, the entire organization breathes easier. This is where the "4 hours saved" figure is deceptive; it doesn't account for the saved "interruption time" for the rest of the team.

However, we need to be honest about the "Agentic" hype. Tools like Gas Town and the new Codex desktop app are impressive, but they are currently pushing us toward a "throughput over precision" model. This is a dangerous trade-off in a corporate environment. The 2026 challenge isn't making agents write more code; it’s "Agent Consensus"—getting multiple AI entities to agree on a solution in a way that doesn't require a human to spend six hours debugging a "four-hour" time-saver.

Perhaps the most cynical—yet brilliant—takeaway from Tacho’s keynote is the rebranding of Developer Experience (DX) to Agent Experience (AX). For a decade, CTOs have begged for budget to fix flaky tests and update documentation, only to be told there’s no "business value." Now, because an AI Agent needs those same tests and docs to function, the checkbooks are opening. It’s a bit disheartening that we’ll fund a robot’s needs before a human’s, but if it results in the robust infrastructure we’ve needed since 2015, we should take the win.

Ultimately, the insights from the Martin Fowler and Kent Beck retreat in Utah serve as a necessary cold shower. AI is not a "silver bullet" for organizational rot. If your company culture is built on silos, fear, or poor communication, AI will only help you be siloed and fearful at 10x speed. As we move further into 2026, the winners won't be the companies with the most "agentic workflows," but the ones who realized that AI is just a faster way to expose their existing human failures. If you don't fix the system, you're just automating your own obsolescence.