Editor’s Brief

The 2026 standoff between Anthropic and the Pentagon marks a historic fracture in the relationship between Silicon Valley and the U.S. government. After CEO Dario Amodei refused to yield on specific "red lines" regarding domestic surveillance and autonomous weaponry, the administration designated the company a "national security supply chain risk"—a label previously reserved for foreign adversaries. The conflict highlights a fundamental power struggle: whether private tech firms or the federal government should define the ethical boundaries of AI in warfare when existing laws fail to keep pace with the technology.

Key Takeaways

- **The 1% Friction:** Anthropic agreed to 99% of military use cases but drew hard lines at mass domestic surveillance and fully autonomous weapons, arguing that AI’s scale makes these actions uniquely dangerous and currently unregulated by Congress.

- **Weaponized Regulation:** The "supply chain risk" designation is an unprecedented use of executive power against a domestic firm, effectively attempting to "de-bank" Anthropic from the entire defense ecosystem through fear and uncertainty.

- **The OpenAI Paradox:** Hours after Anthropic was blacklisted, OpenAI secured a deal claiming to respect the same red lines. This suggests the dispute was less about the specific restrictions and more about who holds the ultimate "veto" over model deployment.

- **The Governance Gap:** Amodei argues that because AI capabilities double every four months, tech leaders must act as temporary gatekeepers until the legislative branch can establish a modern legal framework for digital-age civil liberties.

Introduction

The following content is compiled by VIPSTAR in combination with X/social media public content and is for reading and research reference only.

focus

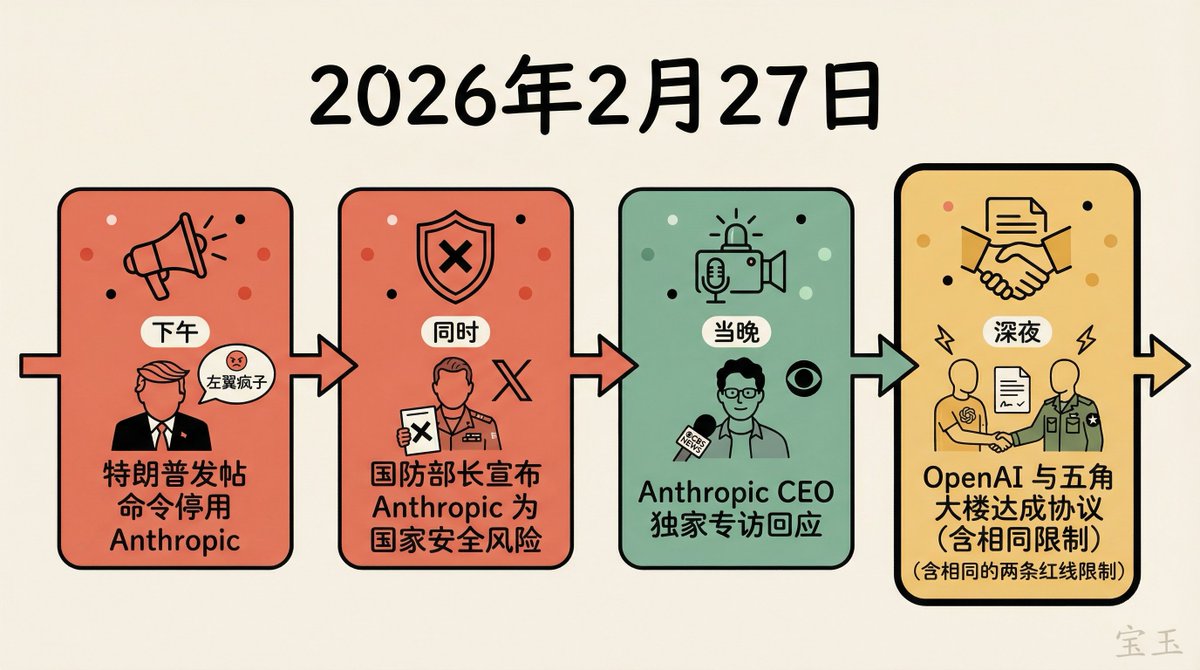

- February 27, 2026, was a long day at Anthropic.

- This afternoon, Trump posted on Truth Social (his social platform), calling Anthropic a “left-wing lunatic” movement…

Remark

For anything involving rules, benefits, or judgments, defer to Baoyu’s original wording and the latest official information.

Editorial comments

This article, “X Import: Baoyu – On the night of being banned, OpenAI obtained the same terms – Amodei’s first exclusive interview reveals the inside story of the conflict between Anthropic and the Pentagon,” comes from the X social platform and was written by Baoyu. The original text is dense with key information, especially in its core conclusions and action suggestions, which are highly actionable. With judgments of this kind, the conclusion is often the easiest part to spread, but what really determines its usefulness is whether the premises hold, whether the sample is sufficient, and whether the time window matches. We recommend that readers check the data source, the release time, and any differences in platform environment before quoting this kind of information, to avoid mistaking “scenario-based experience” for “universal rules.” In terms of industry impact, this type of content usually has a short-term guiding effect on product strategy, operational rhythm, and resource investment, especially in topics such as AI, development tools, growth, and commercialization. From an editorial perspective, we care most about whether it can withstand subsequent fact-checking: first, whether the results can be reproduced; second, whether the method can be transferred; and third, whether the cost is affordable. The source is x.com, and readers are advised to use it as one input for decision-making rather than the only basis.
Finally, a practical suggestion: If you are ready to act on this, you can conduct small-scale verification first, and then gradually expand investment based on feedback; if the original article involves revenue, policy, compliance, or platform rules, please refer to the latest official announcement and retain the rollback plan. The significance of reprinting is to improve the efficiency of information circulation, but the value of content is truly formed in secondary judgment and localization practice. Based on this principle, the editorial comments accompanying this article will continue to emphasize verifiability, boundary awareness, and risk control to help you turn “visible information” into “implementable knowledge.”

February 27, 2026, was a long day at Anthropic.

That afternoon, Trump posted on Truth Social (his social platform), calling Anthropic a company run by “leftwing nut jobs” and ordering all federal agencies to “immediately cease” using Anthropic’s technology. At about the same time, Defense Secretary Pete Hegseth announced on X that Anthropic would be designated a national security “supply chain risk,” a label never before applied to a US company.

That night, Anthropic CEO Dario Amodei sat for an exclusive interview with CBS News, his first on-camera appearance since the incident. In a nearly 30-minute conversation, he discussed the breakdown of negotiations with the Pentagon, including a three-day ultimatum, a “compromise” clause full of vague legalese, and why he insisted on two red lines that could not be compromised. He characterized the government’s actions as “retaliatory and punitive” and said he would challenge the supply chain risk designation in court.

Original video link: http://www.youtube.com/watch?v=MPTNHrq_4LU

The following is the core content of the interview.

Quick overview

- Anthropic agrees with 98%-99% of the Pentagon’s use cases, and the dispute involves only two red lines: domestic mass surveillance and fully autonomous weapons. According to Amodei, these restrictions have never been triggered during an actual military mission.

- The Pentagon gave Anthropic a three-day ultimatum, during which the “compromise” language sent was full of vague terms such as “if the Pentagon deems it appropriate” and no actual concessions were made.

- The supply chain risk designation has never been used before for a U.S. company, only for foreign adversaries such as Kaspersky (Russia) and foreign chip suppliers. Amodei called the measure “retaliatory and punitive.”

- Anthropic had not received any formal government notification as of the time of the interview, and all information comes from social media posts from Trump and Hegseth. Anthropic will challenge that finding in court.

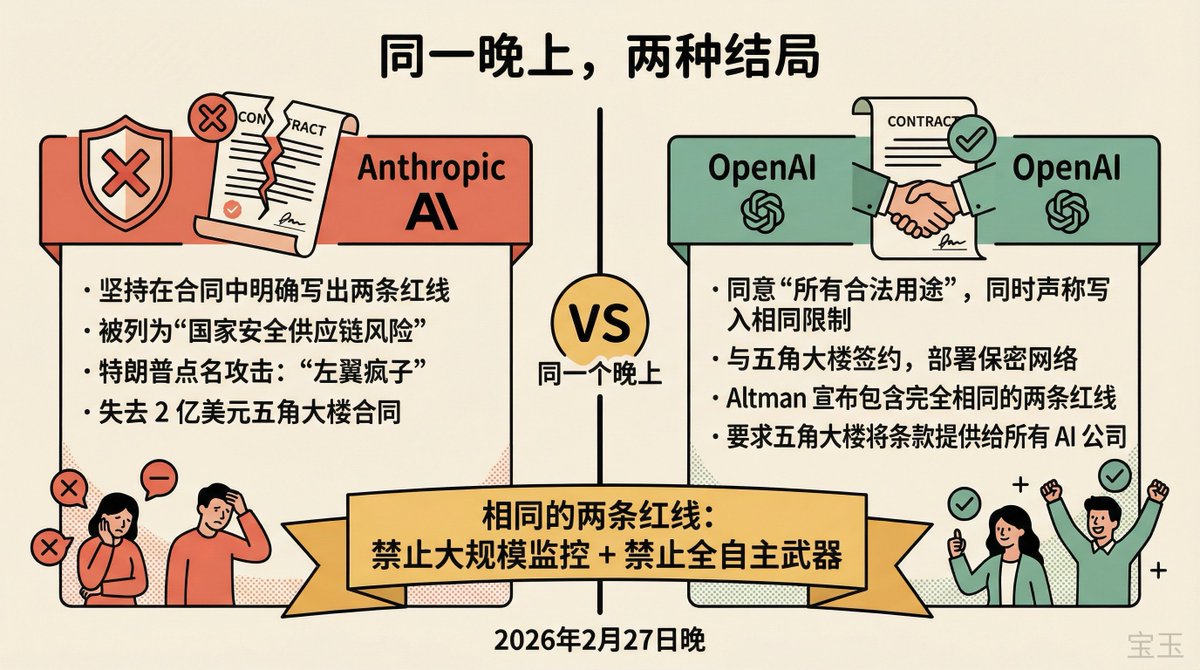

- Just hours after Anthropic was banned, OpenAI announced a deal with the Pentagon that it claimed included the exact same two red lines. This suggests that at the heart of the dispute may be who has the final say.

【1】98% of use cases are already open; the dispute covers only 2%

The interviewer got right to the point: “Why not open Anthropic’s AI to the U.S. government without restrictions?”

Amodei’s answer began with a fact that may surprise many viewers: Anthropic has been the most active of any AI company in working with the U.S. government. He said that Anthropic was the first company to deploy models on the military’s classified cloud and the first to customize models for national security purposes. Its models have been widely deployed in the intelligence community and the military, covering fields such as cybersecurity and combat support.

[Note: In July 2025, Anthropic signed a $200 million contract with the Pentagon through its partnership with Palantir, becoming the first company to deploy cutting-edge AI models in classified military systems. OpenAI and Google also received Department of Defense contracts during the same period, but their models had previously been used only on unclassified networks.]

Under that premise, Amodei said Anthropic has no problem with 98 to 99 percent of the use cases proposed by the Pentagon. There are only two exceptions: mass domestic surveillance and fully autonomous weapons.

While defending ourselves against external threats, we must do so in a way that upholds democratic values.

(“As we defend ourselves against our adversaries, we have to do so in ways that defend our democratic values and preserve our democratic values.”)


【2】Two red lines: Technology is ahead of the law, AI makes things that were previously impossible possible

Amodei explains the logic of the two red lines one by one.

The first red line is domestic mass surveillance. He gave a specific example: the government can legally purchase the location data, personal information, political affiliations and other records that private companies collect on Americans, and then use AI to analyze them at scale. The point is that this practice is not illegal. Before the age of AI, this kind of analysis was impractical due to technical limitations, so the law never needed to cover this scenario. Amodei’s core argument is that technology has outpaced the law, and neither judicial interpretations of the Fourth Amendment nor congressional legislation have caught up.

[Note: This is not hypothetical. In 2025, reports emerged that ICE (U.S. Immigration and Customs Enforcement) had used facial recognition tools like Clearview AI, as well as NEC’s Mobile Fortify system, to track individuals. ICE’s largest contractor, GEO Group, was awarded more than $800 million in contracts in 2025 to mine data from commercial databases and conduct on-the-ground surveillance of suspects.]

The second red line is fully autonomous weapons. Amodei specifically emphasized that he was not talking about the semi-autonomous weapons already used on the battlefield in Ukraine, but weapons systems that have no human involvement at all. But he did not absolutely oppose the existence of fully autonomous weapons, acknowledging that “our adversaries may someday possess them, and perhaps the defense of democracy will eventually require them.” He raised two practical concerns.

The first is technical reliability. Anyone who has worked with AI models knows that there is a fundamental unpredictability in these systems that “from a purely technical perspective we haven’t solved yet,” Amodei said. He did not want to sell a product that was not reliable enough and could end up killing U.S. personnel or innocent civilians.

The second is the lack of an oversight mechanism. He described a specific scenario: if there is an army of 10 million drones, coordinated and controlled by one person or a few people, and there are no human soldiers on the spot to make decisions about “who to fire on,” this concentration of power is unprecedented, and the existing chain of accountability breaks down completely. “We need to have a discussion about who pushes the button and who has the authority to say no, and we haven’t had that discussion yet.”

Amodei also revealed that Anthropic had offered to work with the Pentagon on fully autonomous weapons technology – prototyped in a sandbox environment – “but they weren’t interested unless they could do whatever they wanted from the start.”

【3】The inside story of the breakdown of negotiations: 3-day ultimatum and superficial compromise

The interviewer brought up the Pentagon’s statement: “The Pentagon told us that they have agreed in principle to these two restrictions and hope to reach an agreement. Why can’t one be reached?”

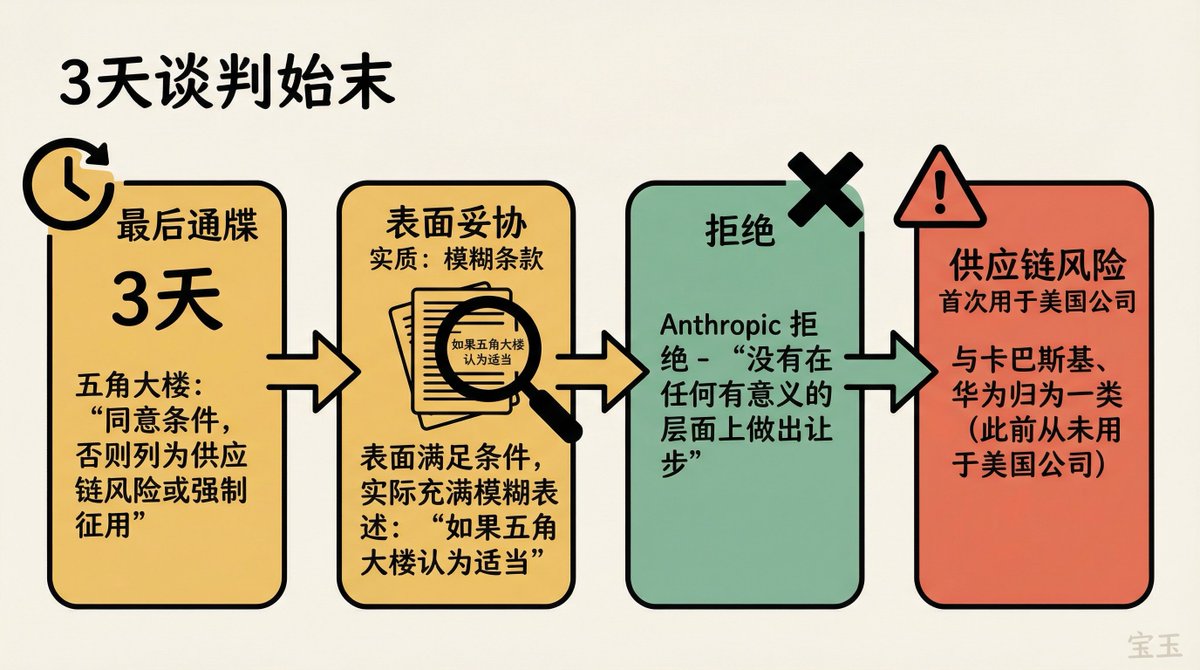

Amodei disclosed details of the negotiations for the first time. The Pentagon gave Anthropic a three-day ultimatum: agree to their terms or be classified as a supply chain risk or face requisitioning under the Defense Production Act.

There were several rounds of exchanges over the 3 days. At one point, the Pentagon sent a version of the document that on the surface appeared to meet Anthropic’s conditions, but Amodei said a closer look revealed it was filled with vague statements such as “if the Pentagon deems it appropriate,” “or anything else required by law.” “It doesn’t really compromise on any meaningful level.”

This is consistent with information obtained by ABC News. Anthropic reportedly told ABC News that the Pentagon’s latest contract language “makes little progress in preventing Claude from being used for mass surveillance of Americans or as a fully autonomous weapon.” The so-called “new language” is “laced with legal jargon” and “allows these security measures to be casually ignored.”

As evidence, Amodei cited a tweet from Sean Parnell, the Pentagon’s chief spokesman, posted the day before the deadline. Parnell reiterated the Pentagon’s position: “We will only allow all lawful uses.” This is exactly the wording the Pentagon had insisted on from the beginning, and it sharply contradicts the so-called “agreement in principle.”

[Note: Sean Parnell is the Pentagon’s chief spokesman and a senior adviser to the Secretary of Defense, appointed by the Trump administration. Another key player during the negotiations was Undersecretary of Defense for Research and Engineering Emil Michael (a former Uber executive), who publicly called Amodei a “liar” on social media and accused him of having a “God complex” and trying to “personally control the U.S. military.”]


【4】Response to Trump’s “selfish” accusation: Even if blacklisted, Anthropic promises to support the transition

The interviewer read Trump’s post on Truth Social that day: “Their selfishness is putting American lives at risk, putting our military at risk, and putting our national security at risk.”

[Note: Trump’s full post that day took a harsher tone, calling Anthropic “leftwing nut jobs,” accusing it of trying to “force the War Department to abide by their terms of service rather than the Constitution,” and ordering all federal agencies to “immediately cease” use of Anthropic technology.]

Rather than push back on the accusation, Amodei emphasized a specific commitment: Even after these unprecedented measures, Anthropic remains willing to do whatever it can to support the Pentagon — providing technical support until the military completes the transition and switches to another supplier.

The interviewer pressed, “Are you ready to quit?”

Amodei said he was deeply concerned about the disruption in service. He mentioned that he has spoken to uniformed military personnel on the front lines who have told him that losing Claude will set them back six to 12 months, or even longer. “That’s why we tried so hard to make a deal. But the three-day ultimatum, the threat of a supply chain risk designation — that whole timeline was driven by the Pentagon, not us.”

【5】Boundaries of power in the AI era: Who has the right to draw the line for AI military applications?

The interviewer got to the bottom of the dispute: “Why do you think Anthropic — a private company — has more power than the Pentagon to decide how AI is used in the military?”

Amodei started by clarifying the fact that, to his knowledge, these restrictions have never been triggered in actual front-line use. This is a 1% use case, and there is currently no evidence that anyone actually needs to do this.

And then he made a key concession: “I actually agree with you.” He argued that, in the long term, this really should be Congress’s job. AI is allowing governments to analyze Americans’ location data, personal information and political affiliations at scale without breaking any law, which suggests the law needs to be updated.

But Congress is not the fastest-moving institution in the world. Until legislation arrives, Amodei believes it is the responsibility of those who know best about the technological frontier to serve as gatekeepers for the time being. He framed the nature of the debate with two questions:

No one wants to be monitored by the US government. No one wants to be monitored by the US government.

(“No one wants to be spied on by the US government. No one wants to be spied on by the US government.”)

These are fundamental rights—the right not to be spied on by the government, the right for soldiers, not machines, to make war decisions.

The interviewer continued: “But in the name of fundamental principles, why should Americans trust you – a private company CEO – to make these decisions rather than the federal government?”

Amodei’s answer is twofold. The first level is market logic: Anthropic is a private company and has the right to choose what to sell and how to sell it. If the government is not satisfied, it can change the supplier. He said that if the Pentagon said, “Dario, we don’t have the same ideas, let’s find another model,” he would disagree but respect it. This is the normal way to handle such disagreements.

But the government did not do this. The second level is his indictment of punitive measures: the government not only canceled Pentagon contracts, but extended the scope of penalties to government departments outside the Pentagon, attempted to punitively cancel broader government contracts, and made a supply chain risk designation that meant that any private company with a military contract could not use Anthropic for the military contract portion. “It’s hard to explain in any way other than punishment.”

[Note: The top senators on the Senate Armed Services Committee wrote a private letter to Anthropic and the Pentagon before the ultimatum expired, urging both sides to reconcile. The letter states that the Department of Defense “has no intention of conducting mass surveillance or using autonomous weapons without human involvement, positions we support in Congress” but acknowledges that the issue of “lawful use” “requires further efforts by all stakeholders.”]

【6】Lumped in with Kaspersky and Huawei – “Feels very punitive and inappropriate”

Amodei said that to his knowledge, supply chain risk designations have never been used for U.S. companies. Previous additions to the list include Kaspersky Lab, a cybersecurity company with suspected ties to the Russian government, and foreign chip suppliers.

To be lumped into the same category as these companies feels extremely punitive and inappropriate considering everything we have done for U.S. national security.

(“Being lumped in with them, it feels very punitive and inappropriate given the amount that we’ve done for US national security.”)

[Note: In 2024, the U.S. Department of Commerce banned Kaspersky Lab from providing anti-virus services in the United States, on the grounds that the Russian government’s offensive cyber capabilities and its capacity to influence Kaspersky’s operations posed a national security risk. Previous supply chain risk determinations have targeted companies with ties to foreign governments; applying the same mechanism to a U.S. company is unprecedented.]

【7】Boeing won’t tell the military how to use planes—why is AI different?

The interviewer offered a powerful analogy: “Boeing builds airplanes for the U.S. military, but Boeing doesn’t tell the military how to use that airplane. What’s the difference?”

Amodei responded on two levels. The first is the difference in technological maturity. Aircraft have been around for a long time, and generals have a fairly sophisticated understanding of how they work. But AI is advancing at an unprecedented pace—the computational complexity of models is doubling every four months. “We’ve never seen this pace of innovation.”

The interviewer immediately retorted: “If this speed continues, the U.S. government will never be able to catch up. Then isn’t your logic contradictory? On the one hand, you say that you need to cooperate with the government to ensure national security, but on the other hand, you say that technology is too fast and the government cannot keep up.”

Amodei’s response: Congress only has to play catch-up once. The technology is fast, but the issues that require congressional intervention are few and very specific—domestic mass surveillance and fully autonomous weapons. Once a legislative framework is established, it does not need to be updated repeatedly.

He condensed his argument into a core question: “Why bother with the 1% of use cases that go against our values instead of advancing the 99% of use cases that are conducive to democratic values and can protect this country? We are even willing to study the last 1% to see if there is a way to do it in a way that is consistent with our values.”

【8】If fully autonomous weapons go wrong: Accidental killing of civilians and the accountability problem for 10 million drones

The interviewer pressed, “Give me one or two examples of what could go wrong.”

Amodei describes two types of risks. The first category is reliability risk: AI aims at the wrong target, shoots civilians, and lacks the judgment of human soldiers, resulting in friendly fire or innocent casualties. “We don’t want to sell something that we don’t think is reliable enough, that could lead to one of our own being killed or innocent people being killed.”

The second category is supervision risk. He asked viewers to imagine a scenario: an army of 10 million drones, coordinated and controlled by one or a handful of people. Human soldiers have a whole chain of accountability based on everyone using common sense judgment. When you concentrate all decision-making in the hands of a very small number of people, the system breaks down.

He emphasized again that he was not saying there should never be such a fleet of drones — “Maybe someday we will need it because our adversaries will” — but that accountability mechanisms must first be discussed. “Whoever is pushing the button has the right to say no. I think that makes perfect sense.”

【9】The “leftist woke company” label: Anthropic has always deliberately remained politically neutral

The interviewer quoted Trump’s characterization directly: “Trump called Anthropic a ‘leftist woke company.’ Is this decision ideologically driven?”

Amodei countered by citing a record of collaboration: He attended an energy event in Pennsylvania hosted by Trump and Senator McCormick to discuss providing adequate power supplies for AI models; Anthropic signed a pledge on AI health applications; and when the government’s AI action plan was released, Anthropic publicly agreed with “many or even most of its elements.”

“Anthropic only speaks in the AI policy space because that’s where we have expertise. We don’t engage in general political issues. This notion that we’re not being impartial — we’ve been deliberately unbiased.”

He added: “We can’t control what others – not even the president – think of us. What we can control is: remain rational, remain neutral, and stand up for what we believe in.”

【10】Governing by tweet: no formal notice, only social media posts

The interviewer asked whether Anthropic would take legal action. “All we received was a tweet. We didn’t receive any formal information. There was no actual supply chain designation. There was no real action from the government. There were just tweets saying what they claimed they were going to do,” Amodei said.

For an American technology company valued at hundreds of billions of dollars, a decision that affects the entire defense AI ecosystem was communicated through social media posts. There were no formal documents, no legal procedures, no direct communication.

“When we receive some kind of formal action, we look at it, understand it and then challenge it in court.”

He also pointed out an important legal detail in Hegseth’s tweet: Hegseth claimed that “any company doing business with the military cannot engage in any commercial activity with Anthropic,” but the law actually only states that Anthropic cannot be used within the context of military contracts. There is a huge difference between the two. Amodei said Hegseth’s tweet was “intended to create uncertainty, designed to create fear, uncertainty and doubt.”

At first, Amodei sidestepped the question of whether this constituted an “abuse of power,” saying only that “this is unprecedented.” When pressed again by the interviewer, he was more specific: this has never happened, it has never been done to an American company, and it is clear from the government’s statements and language that “it’s retaliatory and punitive. I don’t know what else to call it.”

【11】Can an agreement be reached – “The red line will not be shaken”

The interviewer asked him, on a scale of 1 to 10, how likely it was that a deal would be reached in the future.

Amodei did not give a number. He reiterated Anthropic’s position: the two red lines have been in place since day one and will not change. If a consensus can be reached with the Pentagon, there can certainly be an agreement. “We continue to want to make this work for the sake of U.S. national security. But it takes both sides to reach an agreement.”

【12】If you could talk to the president – “Disagreeing with the government is the most American thing in the world.”

The interviewer gave Amodei a chance to address the president directly: “If you could talk to the president right now, what would you say?”

He said: We are patriotic Americans. Everything we do is for this country and to support U.S. national security. We are the first to deploy models to the military because we believe in this country. We draw red lines because crossing these red lines goes against American values, and we want to defend American values.

Faced with the threats of a supply chain designation and the Defense Production Act, which he called “unprecedented government intrusions into the private economy,” he said Anthropic exercised its “classic First Amendment right to dissent.”

Disagreeing with the government is the most American thing in the world, and everything we have done here is patriotic.

(“Disagreeing with the government is the most American thing in the world, and we are patriots in everything we have done here. We have stood up for the values of this country.”)

【13】Business survival – “Not only can we survive, we will be fine”

The interviewer asked the top question for investors and customers: “Do you think Anthropic can survive as a business?”

Amodei said the actual scope of the supply chain designation was far less widespread than Hegseth’s tweet suggested. “The purpose of the Secretary of Defense’s tweet is to create uncertainty, to create fear, to make people think the impact will be much greater than it actually is. But we’re not going to let that tactic succeed. We’re going to be fine.”

[Note: Anthropic is expected to have revenue of at least US$18 billion in 2026, with a valuation of approximately US$380 billion. The canceled Pentagon contracts were worth $200 million, a small percentage of its revenue. The bigger risk comes from the possible knock-on effects of a supply chain designation: a large number of corporate customers who do business with the Pentagon may use Claude less out of risk aversion.]

【14】The biggest irony: OpenAI got the same terms on the same night

That same night, things took a turn.

Just hours after Anthropic was “blacklisted” for adhering to two red lines, OpenAI CEO Sam Altman announced on X that OpenAI had reached an agreement with the Pentagon to deploy its models on classified networks. The key lies in the terms Altman described. “Two of our most important security principles are the prohibition of domestic mass surveillance and human responsibility for the use of force, including for autonomous weapons systems. The War Department agrees with these principles, embodies them in law and policy, and we have enshrined them in our agreements,” he wrote.

These are the very principles for which Anthropic was punished.


Several media outlets pointed out this contradiction. CNN reported that “it’s unclear how OpenAI’s agreement differs from what Anthropic wants.” Fortune’s analysis is more pointed: the key difference may be the wording. Anthropic tried to write these limits explicitly into the contract, while OpenAI agreed that the Pentagon could use its technology for “any lawful purpose,” even as Altman “also said these limits were written into the agreement.” How both can hold at the same time is “unknown.”

The Axios report provides another perspective: A person familiar with the matter revealed that the restrictions in the OpenAI agreement “reflect existing U.S. law and Pentagon policy” and the intention is not to “invent new legal standards.” Anthropic’s core concern lies precisely in the fact that existing laws cannot keep up with the capabilities of AI.

Mark Dalton, an analyst at the R Street Institute, pointed out a fundamental contradiction in the Pentagon’s position: Earlier this week, they considered Anthropic’s technology so important to national security that they considered using the Defense Production Act to force its acquisition; and now they suddenly say it is a “supply chain risk.” Something cannot be both an “essential national security asset” and a “supply chain risk” unless the real question is who has the authority to say “no.”

Altman ended his post with a telling statement: “We are asking the War Department to offer the same terms to all AI companies, and we believe every company should be willing to accept them.”

The aftermath of this conflict is far from over.

Anthropic said it would challenge the supply chain risk designation in court. More than 430 Google and OpenAI employees signed an open letter supporting Anthropic’s stance and calling for industry unity. Jeff Dean, chief scientist at Google DeepMind, has personally spoken out against mass government surveillance. But xAI’s Elon Musk sided with the government, claiming “Anthropic hates Western civilization.”

The debate goes beyond a contract or the fate of a company. As Amodei said repeatedly in the interview: when AI’s capabilities outpace the law, when algorithms can do things that the framers of the Constitution never imagined, who will hold the line?

OpenAI was awarded a contract with the same restrictions on the same night, and even the Pentagon finally agreed to both red lines. The real question is: does a company have the right to say “no” at the bargaining table, or must it comply unconditionally?

Amodei chose to say “no.” The price was a $200 million contract, a “supply chain risk” label and name-calling attacks by the president on social media. He said Anthropic would be fine. Time will tell.

Full interview video: http://www.youtube.com/watch?v=MPTNHrq_4LU

source

author: Baoyu

Release time: March 1, 2026 13:35

source: Original post link

Editorial Comment

The fallout between Anthropic and the Pentagon on February 27, 2026, is a watershed moment that should make every technology executive and investor pause. We are no longer in the era of "move fast and break things"; we are in the era of "comply or be classified as an enemy of the state."

The most striking element of this conflict isn't the ethical debate over autonomous drones—it’s the weaponization of the "supply chain risk" designation. Historically, this was a surgical tool used to excise foreign espionage threats like Kaspersky or Huawei from American infrastructure. By turning this tool inward against a multi-billion dollar domestic company, the administration has signaled that political alignment and contractual "flexibility" are now prerequisites for doing business in the United States. It is a regulatory nuclear option used to settle a contract dispute.

Amodei’s position is intellectually consistent but politically precarious. He is essentially arguing that the Fourth Amendment is currently "broken" because it never contemplated a world where a private company could sell the government a tool capable of real-time, automated tracking of 330 million people. He isn't just asking for a better contract; he’s asking for a new constitutional settlement. In his view, until Congress acts, the person who built the tool is the only one who can prevent its misuse.

However, a fuller view of this situation requires looking at the OpenAI contrast. Sam Altman’s ability to secure a deal with seemingly identical "red lines" just hours later is the ultimate tell. It suggests that the Pentagon wasn't necessarily hell-bent on building "Skynet" immediately; they were hell-bent on ensuring that no private CEO has the right to tell the Commander-in-Chief "no." OpenAI likely accepted "lawful use" language that gives the government the benefit of the doubt, whereas Anthropic insisted on explicit, enforceable restrictions. One company chose strategic ambiguity to stay in the room; the other chose principled friction and got kicked out of the building.

For the broader industry, the implications are chilling. If a company’s status as a "national security risk" can be announced via a social media post without a formal filing or due process, the "sovereign risk" of building technology in the U.S. has just skyrocketed. We are seeing a shift where the state views frontier AI not as a product to be purchased, but as a utility to be conscripted.

Amodei’s defense—that "disagreeing with the government is the most American thing in the world"—is a noble sentiment, but it doesn't pay the server bills. While Anthropic’s $18 billion revenue stream makes them resilient for now, the "fear, uncertainty, and doubt" (FUD) campaign led by the Pentagon will inevitably bleed into their private sector enterprise business. Fortune 500 companies are notoriously allergic to vendors that are in the crosshairs of the White House.

Ultimately, this isn't just about AI; it’s about the end of the "independent" tech sector. As AI becomes the central nervous system of national defense, the space for "neutral" or "principled" tech companies is shrinking. You are either a defense contractor or a target. Anthropic tried to be a partner with a conscience, and they found out that in the 2026 political climate, the state doesn't want a partner—it wants a tool.