Editor’s Brief

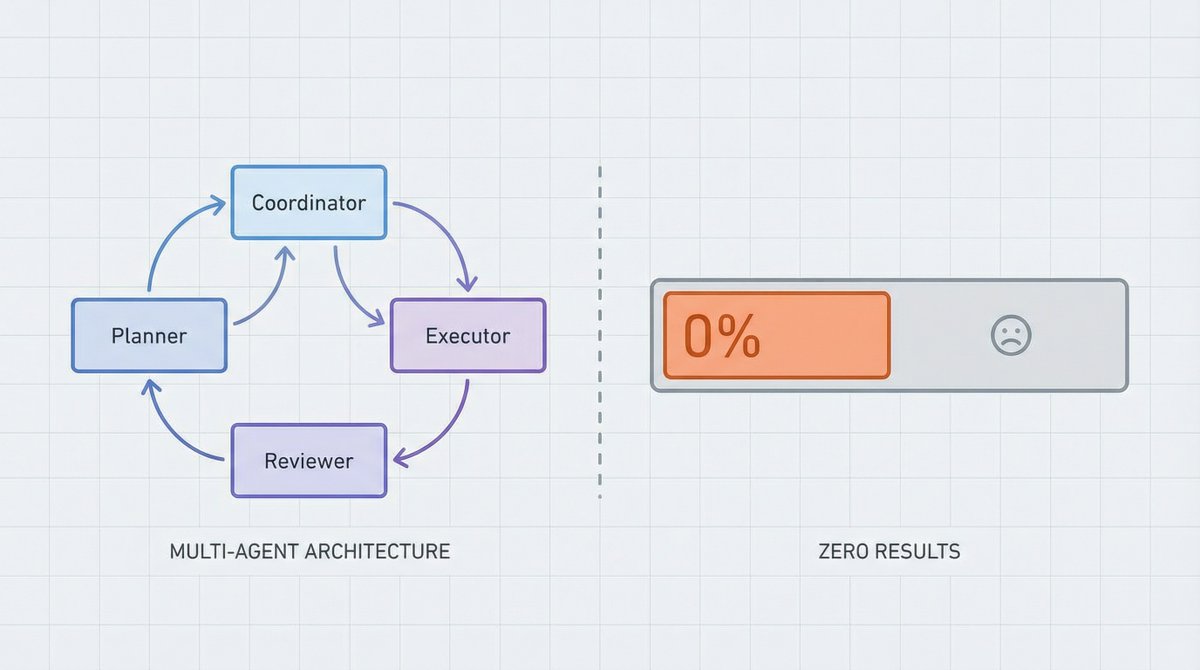

Mortal Xiaobei critiques the tendency of OpenClaw users to over-engineer multi-agent systems. By drawing on economic theory and industry failures, the author argues that "coordination costs" often outweigh the benefits of complex architectures, suggesting a "skills-first" approach instead.

Key Takeaways

- The "Stationery" Fallacy: Designing complex multi-workspace architectures is often a form of productive procrastination that masks a lack of actual output.

- The Coase Constraint: Just as firms exist to minimize transaction costs, AI agents should only be split when the benefit of specialization exceeds the cost of coordination.

- Skills vs. Agents: Most specialized tasks are better handled as "Skills" (tools) attached to a single agent rather than spawning entirely new autonomous roles.

- Valid Split Criteria: Multi-agent setups should be reserved for true parallel processing, strict permission isolation, or managing context window limits.

Introduction

The following content was compiled by VIPSTAR from public posts on X and other social media and is for reading and research reference only.

Focus

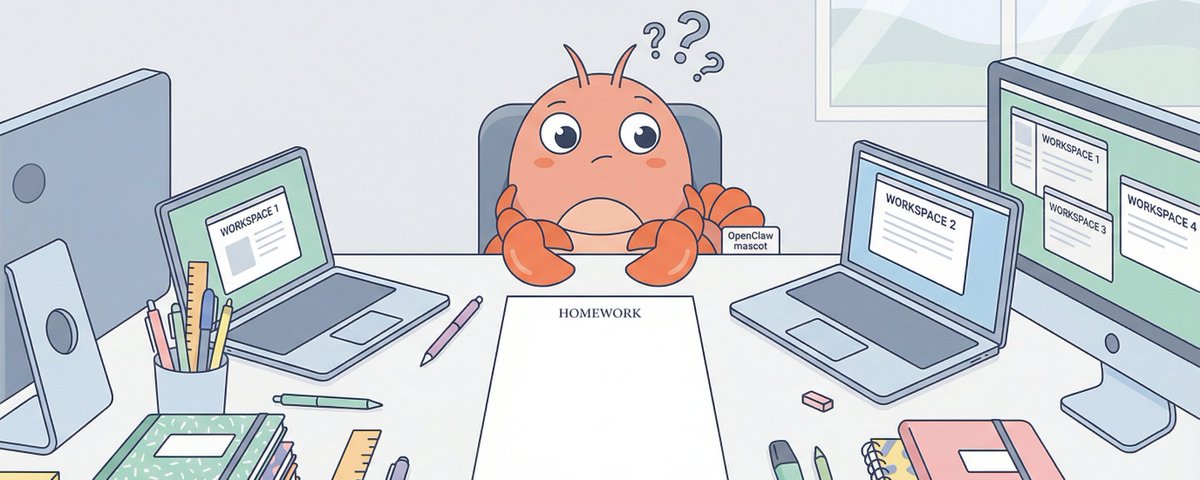

- OpenClaw poor students have too much stationery: you may not need 4 Workspaces

- Recently I have seen an interesting phenomenon in the OpenClaw community: many people are rushing to configure a bunch of workspaces when they first get started.

Remark

For parts involving rules, benefits or judgments, please refer to Mortal Xiaobei’s original expression and the latest official information.

Editorial comments

This article, “X Import: Mortal Xiaobei – OpenClaw poor students have too much stationery: you may not need 4 Workspaces”, comes from the X social platform and was written by Mortal Xiaobei. The original is dense with key information, and its core conclusions and action suggestions are practical to apply. For readers, its most direct value is not “knowing a new point of view” but being able to see quickly the conditions, boundaries and potential costs behind that view.

Broken down into verifiable judgments, the content includes at least the following: OpenClaw users may not need 4 workspaces, and many people in the OpenClaw community rush to configure a bunch of workspaces when they first get started. Among such judgments, the conclusion is usually the easiest part to spread, but what really determines practicality is whether the premises hold, whether the sample is sufficient, and whether the time window matches.

We recommend that readers quoting this type of information first check the data source, the release time and any differences between platform environments, to avoid mistaking “scenario-based experience” for “universal rules.” From an industry perspective, this type of content usually has a short-term guiding effect on product strategy, operational rhythm, and resource investment, especially in topics such as AI, development tools, growth, and commercialization. From an editorial perspective, we pay more attention to whether it can withstand later fact-checking: first, whether the results can be reproduced; second, whether the method can be transferred; and third, whether the cost is affordable. The source is x.com, and readers are advised to treat it as one input for decision-making, not the only basis.

Finally, a practical suggestion: if you plan to act on this, run a small-scale verification first, then expand investment gradually based on feedback; if the original article involves revenue, policy, compliance or platform rules, refer to the latest official announcement and keep a rollback plan. Reprinting improves the efficiency of information circulation, but the real value of content is formed in secondary judgment and local practice. On this principle, the editorial comments accompanying this article will continue to emphasize verifiability, boundary awareness, and risk control, to help you turn “visible information” into “implementable cognition.”

OpenClaw poor students have too much stationery: you may not need 4 Workspaces

A counterintuitive observation

Recently I have seen an interesting phenomenon in the OpenClaw community: many people are rushing to configure a bunch of workspaces when they first get started.

The architecture is beautifully drawn:

There is a clear division of roles and clear boundaries of responsibilities. Then what?

Each workspace is empty, and the Coordinator can do everything by itself.

This reminds me of what my teacher said when I was a kid: Poor students have more stationery.

It’s not just newbies making mistakes

You may think this is a newbie problem. It isn’t.

I saw a post on Reddit’s r/n8n the other day. n8n is a no-code workflow platform, and its users are mostly experienced developers and automation experts. The post said:

“I have seen too many workflows where 5-6 specialized agents coordinate with each other. When I open them up, the job could actually be done with 3 nodes + one agent.”

The author gave an example: someone created three agents for email drafting, email sending, and email follow-up tracking.

One agent + three tools would obviously solve the problem, yet it had been split into three agents.

Similar voices have come from big tech companies. GitHub recently published a blog post, “Multi-agent workflows often fail”, reviewing the pitfalls they have encountered in Copilot and internal automation:

“Most multi-agent failures come down to missing structure, not model capability.”

In other words: the main reason multi-agent systems fail is not insufficient model capability, but structural design problems.

What’s interesting is that n8n is a no-code community, and most of its users are pragmatists who think “as long as it works”; GitHub is the engineering team of a big tech company that cares about architecture and best practices. The two groups have completely different backgrounds, yet they make the same mistake.

Why?

Because we naturally love to make simple things complicated.

Why do we love over-design?

Complex = professional?

Human beings have a deep-rooted intuition: complex things are more powerful.

Four agents with a clear division of labor and arrows pointing back and forth look much more “professional” than one agent working alone.

But this is an illusion.

Drawing architecture diagrams feels great. You are creating, you are planning, and you feel that you are following “correct engineering practice.”

Getting an agent to actually run end to end is painful. You have to deal with edge cases, you have to debug strange bugs, and you have to face the soul-searching question of “Why can’t it do such a simple thing well?”

Why do poor students have so much stationery? Because buying stationery is easier than doing homework, and organizing stationery is more enjoyable than solving problems.

The temptation of division of labor

In 1776, Adam Smith told the story of a pin factory in “The Wealth of Nations”: ten workers working together could produce 48,000 pins a day; one person working alone could not produce even 20 pins a day.

Specialization brings efficiency gains, which is the cornerstone of economics.

But what Smith didn’t tell you is: specialization also brings coordination costs.

Who is responsible for what? How to hand over? Who will take care of things if something goes wrong?

In 1937, economist Ronald Coase asked a question: If the market division of labor is so efficient, why are firms needed?

The answer is: transaction costs. The costs of finding people in the market, negotiating, signing contracts, and supervising implementation…these costs may add up to more than “hiring one person to do it all.”

The boundaries of a firm depend on the balance between coordination costs and the benefits of dividing labor.

The same goes for AI Agents.

Tools have changed, and so have boundaries.

Interestingly, in recent years we have seen a trend: more and more one-person companies.

One person + Vercel + Supabase + Stripe + ChatGPT can make products that previously required 10 people.

Why? Because the tools have gotten better.

When one person + good tools can do the work of three people, you don’t need three people. Not because three people are bad, but because the cost of coordinating three people may exceed the value of the extra output.

OpenClaw’s Skill system follows the same logic:

- A Skill is a capability extension: it installs a new tool on the agent

- An Agent is a separate role: it creates an independent brain

Do I need a “weather agent” to check the weather? No, just install a weather skill.

Most “specialized requirements” can be met with skills, and there is no need to split the agent.
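The distinction can be sketched in a few lines. The example below is only an illustration: the `Agent` class, `install_skill`, and `weather_skill` are hypothetical names, not OpenClaw’s actual API. It shows that adding a capability to one agent amounts to registering a function, with no second role to coordinate.

```python
from typing import Callable, Dict

# Hypothetical names throughout: this is not OpenClaw's real API.
Skill = Callable[[str], str]


class Agent:
    """One agent that grows by registering skills instead of spawning new agents."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.skills: Dict[str, Skill] = {}

    def install_skill(self, skill_name: str, fn: Skill) -> None:
        # Adding a capability is a dictionary entry, not a new role to coordinate.
        self.skills[skill_name] = fn

    def run(self, skill_name: str, query: str) -> str:
        if skill_name not in self.skills:
            raise KeyError(f"{self.name} has no skill named {skill_name!r}")
        return self.skills[skill_name](query)


def weather_skill(city: str) -> str:
    # Stub: a real skill would call a weather service here.
    return f"Weather lookup requested for {city}"


main = Agent("main")
main.install_skill("weather", weather_skill)
print(main.run("weather", "Shanghai"))
```

Because the skill runs inside the same agent, there is no handoff, no format negotiation, and no second context to keep in sync.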

Coordination costs are severely underestimated

Hidden costs of multiple agents:

- Context loss: what the Coordinator knows, the Researcher does not

- Format alignment: one side passes JSON, the other expects Markdown

- Status synchronization: who is doing what, and how far along are they?

- Error propagation: if the Researcher makes an error, how does the Coordinator find out?

Within a single agent, all of this happens automatically. Once you split into multiple agents, each item must be handled explicitly.

The GitHub blog also mentioned that agents will make many implicit assumptions about status, order, and format. When these implicit assumptions go wrong, the entire system breaks down.

Our lessons

Here is our own experience.

At the beginning, we excitedly set up a multi-workspace configuration. Four roles, four sets of bots; the configuration took a day.

After running it for two weeks, we found:

- Searching for information? Just use web_search directly in main

- In-depth analysis? Main can load an analysis skill and do it.

- Executing a task? Main calls the various tools and that’s it.

There is only one scenario that really requires an independent agent: writing code in parallel.

For example, if you are working on a website project, let Codex write the front-end and Claude Code write the back-end, and the two can run at the same time. This is a case where you really need more agents.

A concrete example

I recently built a website and had 8 front-end tasks.

Option A: One Codex working through the tasks sequentially

- Time taken: 4 hours

- Coordination cost: 0

Option B: 8 Codex instances working in parallel

- Time taken: 30 minutes

- Coordination costs: merge conflicts, inconsistent styles, duplicate components

We chose Option A.

When should you use Option B? Only when the back-end and front-end are being built and integrated together does it make sense for two agents to run in parallel, because having them wait for each other is a waste.

Lesson: premature splitting = increased coordination costs + no benefits.
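The trade-off in this example comes down to simple arithmetic. The sketch below uses the two times given above (4 hours sequential, 30 minutes parallel); the coordination costs are hypothetical values chosen for illustration, since the example does not quantify them.

```python
def net_benefit_minutes(sequential_min: float, parallel_min: float,
                        coordination_min: float) -> float:
    """Minutes saved by running in parallel, minus minutes spent coordinating."""
    return (sequential_min - parallel_min) - coordination_min


def worth_splitting(sequential_min: float, parallel_min: float,
                    coordination_min: float) -> bool:
    """Split only when the saving is strictly larger than the coordination cost."""
    return net_benefit_minutes(sequential_min, parallel_min, coordination_min) > 0


# Figures from the example above: 4 hours sequential, 30 minutes in parallel.
SEQUENTIAL_MIN = 240
PARALLEL_MIN = 30

# Break-even point: the split pays off only if coordination costs less than this.
break_even_min = SEQUENTIAL_MIN - PARALLEL_MIN  # 210 minutes

# Hypothetical coordination costs (not from the article), for illustration only.
light_cleanup_min = 60    # e.g. a few small merge conflicts
heavy_cleanup_min = 300   # e.g. reconciling styles and duplicate components

print(break_even_min)
print(worth_splitting(SEQUENTIAL_MIN, PARALLEL_MIN, light_cleanup_min))
print(worth_splitting(SEQUENTIAL_MIN, PARALLEL_MIN, heavy_cleanup_min))
```

Under these assumptions the parallel run only wins if cleaning up merge conflicts, inconsistent styles, and duplicate components takes less than about 210 minutes. Time is also not the only cost: inconsistent output can lower quality even when the clock favors splitting.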

If you really need to split

This is not to say never split, but to wait until you hit a bottleneck before splitting.

When is it really needed?

- True parallelism: two tasks run at the same time, independent of each other

- Hard isolation requirements: different permissions, different contexts

- Context explosion: the context window of a single agent is really not enough
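The three criteria above can be written as an explicit check. This is a minimal sketch, assuming you can estimate each input yourself; `TaskProfile`, its fields, and the token figures are all hypothetical and not part of any framework.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskProfile:
    # All fields are hypothetical inputs you would estimate yourself.
    has_independent_parallel_work: bool   # two tasks that do not depend on each other
    needs_hard_isolation: bool            # different permissions or contexts
    estimated_context_tokens: int         # how much context the task needs
    context_window_tokens: int            # what a single agent can hold


def reasons_to_split(task: TaskProfile) -> List[str]:
    """Return the criteria from the list above that this task satisfies."""
    reasons: List[str] = []
    if task.has_independent_parallel_work:
        reasons.append("true parallelism")
    if task.needs_hard_isolation:
        reasons.append("hard isolation")
    if task.estimated_context_tokens > task.context_window_tokens:
        reasons.append("context explosion")
    return reasons


# Illustrative profiles with made-up numbers.
weather_lookup = TaskProfile(False, False, 2_000, 128_000)
full_stack_build = TaskProfile(True, False, 40_000, 128_000)

print(reasons_to_split(weather_lookup))
print(reasons_to_split(full_stack_build))
```

An empty list means none of the criteria applies, so the task stays in a single agent with skills.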

What to do after splitting

If you do decide to split, have these ready:

- Explicit handover checklist

Every handoff must spell out the output format, tone goals, context, and completion criteria. Don’t assume the other side “should know.”

- Three logs

  - Action log: what was done

  - Rejection log: what was not done and why (the most easily ignored, but the most valuable)

  - Handover log: what was handed to whom

Rejection logs are particularly important. “Why it was not done” is often invisible work that no one sees, yet the system runs into problems if that work is skipped.

- Near-miss summary

Regularly tally the incidents that “almost went wrong but were caught.” This data makes the value of coordination quantifiable, which keeps the coordinator from being undervalued, its resources from being cut, and the system from collapsing as a result.
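The checklist, the three logs, and the near-miss tally can be combined in one small structure. The sketch below is illustrative only: `Handoff`, `CoordinationLog`, and their fields are hypothetical names chosen to mirror the items above, not an existing library.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Handoff:
    # Fields mirror the handover checklist; all names are hypothetical.
    recipient: str
    output_format: str
    tone_goals: str
    context: str
    completion_criteria: str

    def missing_fields(self) -> List[str]:
        # A blank field means the sender assumed the recipient "should know".
        return [name for name, value in vars(self).items() if not value.strip()]


@dataclass
class CoordinationLog:
    actions: List[str] = field(default_factory=list)      # what was done
    rejections: List[str] = field(default_factory=list)   # what was not done, and why
    handoffs: List[Handoff] = field(default_factory=list)  # what went to whom
    near_misses: List[str] = field(default_factory=list)  # problems caught in time

    def hand_over(self, handoff: Handoff) -> bool:
        missing = handoff.missing_fields()
        if missing:
            # Caught before reaching the next agent, so it counts as a near miss.
            self.near_misses.append(
                f"handoff to {handoff.recipient or '?'} missing: {', '.join(missing)}"
            )
            return False
        self.handoffs.append(handoff)
        return True


log = CoordinationLog()
log.actions.append("collected three sources on pricing")
log.rejections.append("skipped paywalled report: no access rights")
accepted = log.hand_over(
    Handoff("writer", "markdown", "neutral", "", "draft under 500 words")
)
print(accepted)
print(log.near_misses)
```

Counting the entries in `near_misses` over time is one simple way to put a number on how much the coordination layer catches.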

Correct order

1. First get one workspace actually working

   - Able to complete daily tasks

   - Able to handle edge cases

   - Able to produce stable output

2. Use Skills to expand capabilities

   - Need a new capability? Install a skill

   - No new roles required

3. Consider splitting only when you hit a bottleneck

   - Single agent responds too slowly → consider parallelism

   - Context is too long → consider splitting

   - Permissions need to be isolated → consider an independent workspace

Don’t skip steps 1 and 2 and jump straight to step 3.

Coase’s problem

Back to Coase’s question: where are the boundaries of the firm?

The answer is: at the point where internal coordination costs = external transaction costs.

The same goes for the boundaries of AI Agents.

It is only worth splitting when “the benefits of splitting into multiple agents” > “coordination costs”.

Otherwise, it is just a poor student with too much stationery.

Questions for you

Before you rush to provision a second workspace, ask yourself:

- Is it true that a workspace + skills can’t do it?

- After splitting, are you ready to bear the coordination costs?

- Are you solving problems or enjoying the thrill of “designing complex systems”?

If you can avoid splitting, don’t split. If you must split, wait until you hit a real bottleneck.

Source

Author: Mortal Xiaobei

Release time: February 28, 2026 22:57

Source: Original post link

Editorial Comment

There is a recurring pathology in software engineering that I like to call "Architectural Vanity." It’s the urge to build a cathedral for a congregation of three. In the current gold rush toward agentic workflows, this manifests as the "Multi-Agent Trap." Mortal Xiaobei’s critique of OpenClaw users—likening them to struggling students who buy fancy stationery instead of studying—is a sharp, necessary reality check for the industry.

We are currently seeing a massive push toward "agentic" everything. The marketing suggests that if one agent is good, ten agents working in a digital "factory" must be better. But as this post correctly identifies, we are ignoring the ghost in the machine: coordination overhead. In human organizations, we know that adding more people to a late project makes it later. In AI systems, adding more agents to a simple task makes it more fragile.

The reference to Ronald Coase and the "Nature of the Firm" is particularly astute. In economics, a firm grows until the cost of organizing an extra transaction within the firm becomes equal to the costs of carrying out the same transaction on the open market. Applying this to OpenClaw or any agentic framework, the "boundary" of your agent should be determined by friction. Every time you split a task into two workspaces, you introduce a "transaction." You now have to manage state synchronization, handle format mismatches (JSON vs. Markdown), and deal with context dilution. If your "Coordinator" agent spends 80% of its logic just managing the handoffs between a "Researcher" and a "Writer," you haven’t built a system; you’ve built a bureaucracy.

The GitHub engineering blog cited in the post offers the most sobering evidence. When the world’s leading repository of code says that multi-agent failures usually stem from structural flaws rather than the underlying intelligence, we should listen. The problem isn't that the agents aren't "smart" enough to talk to each other; it's that the "contracts" between them are often implicit and poorly defined. When a single agent handles a task, the "state" is unified. The moment you split it, you create a gap where information falls through.

For practitioners, the "Skills vs. Agents" distinction is the most actionable takeaway here. In frameworks like OpenClaw, a "Skill" is essentially a tool—a function the agent can call. An "Agent" is a separate entity with its own loop. Most people who think they need a "Weather Agent" or a "Database Agent" actually just need a "Weather Skill" or a "Database Skill." You want your agent to have a bigger toolbox, not a bigger staff.

My editorial advice to anyone building in this space is to adopt a "minimal viable agency" mindset. Start with a single workspace. Give it every tool (skill) it needs to fail or succeed. Only when you hit a hard wall—such as the need to run two heavy processes simultaneously to save time, or a requirement to keep sensitive API keys in a separate, isolated environment—should you consider clicking that "New Workspace" button.

Complexity is a cost, not a feature. If your architecture diagram looks like a spiderweb but your actual output is zero, you aren't an engineer; you're a digital stationery collector. Focus on the "homework"—the actual task execution—and let the tools remain as simple as possible for as long as possible. The most sophisticated systems are often the ones that look the most boring on paper.