Editor’s Brief

The technology sector is entering a phase of "perceived acceleration" where AI Agents have transitioned from peripheral assistants to the primary drivers of production. Using a speculative look-back from early 2026, the narrative highlights a total inversion of the programming paradigm—exemplified by Andrej Karpathy’s shift to 80% agent-led development—and explores how the commoditization of code mirrors historical shifts like the printing press and the industrial adoption of electricity. The core thesis is that while technical execution is becoming a zero-marginal-cost resource, the "productivity paradox" remains: real gains only arrive when organizational structures are redesigned around the new technology rather than merely plugging it into old workflows.

Key Takeaways

- The 80/20 Paradigm Flip: High-level developers are moving from writing syntax to auditing logic, with natural language serving as the primary interface for complex system configuration and deployment.

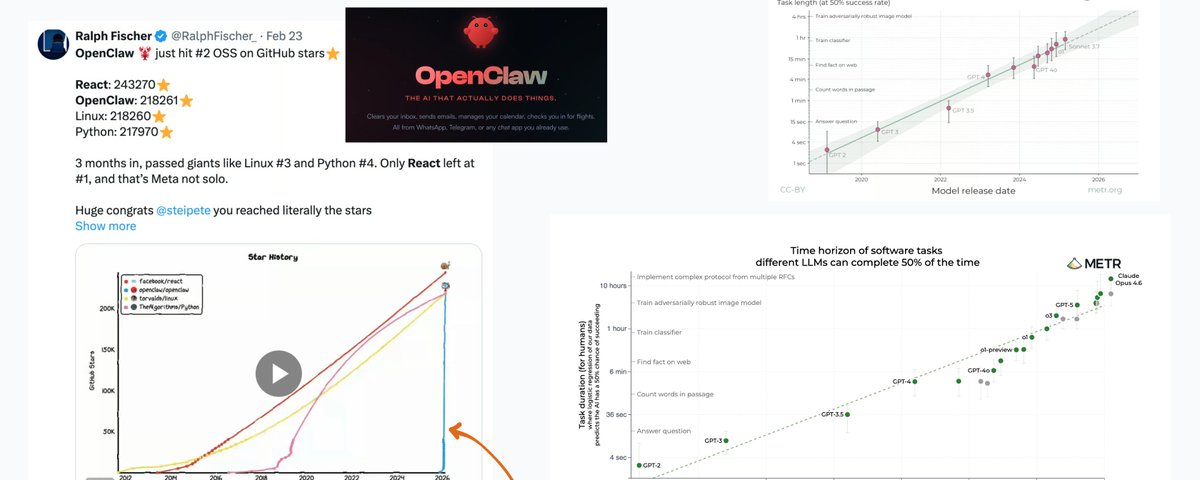

- Democratization via OpenClaw: Tools like OpenClaw are moving agentic capabilities out of specialized IDEs and into common interfaces like Slack and Telegram, lowering the barrier for non-technical users to execute complex tasks.

- The Productivity Lag: Historical data from the electrification of factories suggests that simply replacing old tools with new ones (steam with electric power, or manual code with AI) yields little gain until the entire "factory floor" or workflow is structurally reimagined.

- Value Migration and Power Shifts: As the "how" of technology becomes cheap, the "what" and "why" become the new profit centers. Value is migrating from technical implementation to system architecture, product intuition, and user trust.

Extended Editorial Analysis

The practical value of "As we stand at the beginning of change" lies not in the headline claim itself but in whether that claim can survive execution constraints. In fast social channels, strong narratives travel faster than verification. We therefore treat reposted content as input for decisions, not as a replacement for them.

A useful operating method is a three-step pass: extract verifiable facts, assign confidence levels to major claims, and convert insights into small time-boxed experiments with explicit stop conditions. This protects teams from overcommitting while still capturing upside from timely signals.

For risk control, one-source interpretation is never enough. We recommend a quick triangulation: one independent source, one quantifiable indicator, and one counterexample scenario. If any of the three breaks, downgrade confidence before allocating more resources.
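The triangulation rule above can be sketched as a small check. This is a minimal illustration, not a prescribed tool: the `Claim` fields, the three-level confidence scale, and the choice to downgrade by exactly one level are all assumptions made for the example.

```python
from dataclasses import dataclass, replace

# Hypothetical three-level scale; the article only says "downgrade confidence".
LEVELS = ("low", "medium", "high")


@dataclass(frozen=True)
class Claim:
    text: str
    confidence: str                 # one of LEVELS
    independent_source: bool        # confirmed by a second, unrelated source
    quantifiable_indicator: bool    # backed by a number that can be tracked
    survives_counterexample: bool   # holds up against a counterexample scenario


def triangulate(claim: Claim) -> Claim:
    """Return the claim unchanged if all three checks pass;
    otherwise return a copy with confidence lowered by one level."""
    checks = (
        claim.independent_source,
        claim.quantifiable_indicator,
        claim.survives_counterexample,
    )
    if all(checks):
        return claim
    lowered = LEVELS[max(LEVELS.index(claim.confidence) - 1, 0)]
    return replace(claim, confidence=lowered)


claim = Claim(
    text="Agents now drive most production code",
    confidence="high",
    independent_source=True,
    quantifiable_indicator=False,   # no tracked metric yet
    survives_counterexample=True,
)
print(triangulate(claim).confidence)
```

Whatever form the check takes, the point is that the downgrade happens before more resources are allocated, not after.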

Our editorial intent is to increase decision utility: keep attribution, add context, define boundaries, and clarify actionable takeaways. As new public evidence appears, this analysis should be updated so the article remains operationally useful over time.

The Current Technological Shift Is Not Abstract — It Has Specific Names and Addresses

When analysts say "AI is transforming software development," the abstraction makes it easy to defer. But practical preparation for this transformation becomes urgent once you name the actual tools already in production use at thousands of companies today.

Claude Code, Cursor, and GitHub Copilot are not future-tense products. They are the daily instruments of a growing cohort of working engineers who have, quietly and without corporate announcement, restructured their entire day around agent-assisted loops. Cursor’s reported 40,000 paying business customers by late 2024 is not a niche statistic. It represents an installed base large enough to generate its own gravitational pull—onboarding pressure inside teams, implicit skill expectations in hiring, and competitive disadvantage for organizations that opt out.

The open-source side of this shift is equally concrete. When Meta released Llama 3, when Mistral shipped Mixtral, and when DeepSeek demonstrated that a fraction of the training budget reported for frontier models could approach frontier performance, they collectively collapsed the moat around proprietary model access. The result: by early 2026, running a capable large language model locally or on a cheap VPS is a solved problem. The "intelligence layer" has become infrastructure, as fungible and commodity-priced as compute was after the cloud wars of the 2010s.

Agent frameworks are maturing at a similar pace. LangChain, LlamaIndex, CrewAI, and AutoGen each represent a different philosophy on how autonomous systems should be composed, but they share one implication: the unit of work is no longer a function or a prompt, but a goal. You describe an outcome and delegate the sequencing to an orchestrator. For individual practitioners, this is less a technical upgrade than a cognitive shift—from craftsman to director.

The Adjacent Possible: Position Beats Prediction

The biologist Stuart Kauffman coined the term "adjacent possible" to describe the set of transformations available from any given state. Applied to career positioning in a technology transition, it offers a more tractable frame than trying to predict which tool will win. You do not need to know whether Anthropic or OpenAI will dominate model-level competition in 2027. You need only ask: am I positioned adjacent to where significant change is happening?

Adjacent to this transition means something specific. It means working in environments where AI-assisted workflows are being experimented with, not just discussed. It means having hands-on contact with at least one agent framework, even in a weekend project context. It means reading primary sources—model release notes, evals papers, developer retrospectives—rather than filtered commentary. Proximity to active experimentation is the real preparation, because it builds the pattern recognition that purely abstract study cannot supply.

The mobile transition of 2007–2012 illustrates the point sharply. Thousands of software engineers who had built careers on desktop application frameworks suddenly found their skills illiquid. The ones who recovered fastest were not necessarily the ones who predicted the iPhone’s dominance early. They were the ones who had already been adjacent to native mobile development—writing small apps, attending platform developer conferences, reading the SDK documentation before anyone told them to. They weren't clairvoyant. They were proximate.

How Past Transitions Rewarded Early Practitioners—and What They Left Behind

The electricity analogy, already central to the original analysis, deserves a more granular examination because it surfaces a pattern that has repeated across major technology transitions, both before and during the computing era.

The printing press (1440s): Scribes were not immediately unemployed. For decades, their calligraphic skills were valued for luxury manuscripts. The disruption was structural: the economics of information distribution changed so completely that the entire scribal class eventually became vestigial. The beneficiaries were not monks who copied faster; they were the publishers, editors, and eventually the journalists who defined what the new medium was for.

The cloud transition (2006–2015): System administrators who managed physical servers faced a credential devaluation that felt sudden but had been accumulating for years. AWS launched in 2006; by 2012, the organizational logic of maintaining a data center was increasingly indefensible for any company below enterprise scale. The practitioners who thrived were not the ones who virtualized their existing mental models. They were the ones who understood distributed systems, stateless architecture, and infrastructure-as-code—concepts that required abandoning the physical-server frame entirely.

The open-source wave (late 1990s–2010s): Proprietary software vendors dismissed Linux as a hobbyist curiosity until it captured the web server market, then the mobile market, then the cloud market. The engineers who invested early in open-source contribution built reputations that survived every employer transition and every tool-cycle churn. The contribution record was more durable than any single job title.

The pattern across all three transitions is consistent: early practitioners gained advantages that compounded, while late adopters paid a premium for the same access once it became normalized. The transition window—the period between when a technology becomes viable and when it becomes mandatory—is where preparation has the highest asymmetric return.

That window is open right now. Not for much longer.

What to Do Before the Window Closes: Practical Moves, Not Motivational Abstractions

The anxiety this transition generates is legitimate and worth naming. Information overload is real: the number of new model releases, framework announcements, benchmark controversies, and think-pieces has grown past any individual's ability to track. FOMO compounds the problem—every week brings a claim that some new tool represents a categorical leap, and every week the claim requires calibration against actual use.

The productive response to this noise is bandwidth management through selective depth. Rather than trying to track everything, the practitioner who benefits from this transition is the one who goes deep on a small number of high-leverage areas while maintaining a lightweight awareness layer for everything else.

Skills Worth Compounding Now

- Prompt engineering and context architecture. The quality of output from any language model scales non-linearly with the quality of context provided. This is a learnable, transferable skill—and it currently commands a significant premium because it is still rare in non-technical roles.

- Evaluation design. As AI-generated outputs proliferate, the ability to define what "good" looks like, and to build test suites that verify it, becomes a scarce and valuable capability. The METR finding that experienced developers slowed down when using AI on familiar projects is instructive here: the bottleneck was evaluation, not generation.

- Systems thinking at the workflow level. Not systems architecture in the computer science sense, but the ability to map a business process, identify where AI assistance creates leverage, and redesign the workflow around the new constraints. This is the "small electric motor on every machine" insight—and it requires organizational empathy as much as technical knowledge.

- Data provenance and trust signals. When code, copy, analysis, and images can all be generated at near-zero cost, the differentiating asset is verifiable origin. Understanding how to maintain, document, and communicate the provenance of AI-assisted work is already a competitive differentiator in high-trust industries.

Habits That Compound Without Adding Cognitive Load

Not all preparation requires dedicated study blocks. Several habits integrate naturally into existing work patterns and build compounding advantage over 12–18 month horizons.

- Use one AI coding assistant—Cursor, Claude Code, or Copilot—on every project, even when it would be faster to write the code manually. The goal in this phase is pattern recognition, not speed. You are learning how to delegate, review, and correct, which are the durable skills.

- Follow primary sources rather than aggregators. The Anthropic, OpenAI, and Mistral engineering blogs, the Hugging Face papers page, and the GitHub repositories of the leading agent frameworks contain information weeks ahead of any newsletter synthesis.

- Document your experiments with explicit stop conditions. Before starting any exploration of a new tool or workflow, write down what result would convince you to adopt it and what result would convince you to stop. This counteracts the sunk-cost drift that turns exploratory projects into unfinished distractions.
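The stop-condition habit in the last item can be written down as a small record. The sketch below is illustrative only: the `Experiment` fields, the 14-day time box, and the rule that an expired time box counts as "stop" are assumptions, not something the article specifies.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Experiment:
    tool: str
    adopt_if: str        # written down before starting: what would convince you to adopt
    stop_if: str         # written down before starting: what would convince you to stop
    start: date
    time_box_days: int

    def deadline(self) -> date:
        return self.start + timedelta(days=self.time_box_days)

    def decide(self, today: date, adopt_met: bool, stop_met: bool) -> str:
        """Return 'stop', 'adopt', or 'continue' for the current review."""
        if stop_met:
            return "stop"
        if adopt_met:
            return "adopt"
        if today >= self.deadline():
            # Assumption: running out the time box without adoption evidence means stop.
            return "stop"
        return "continue"


exp = Experiment(
    tool="agent framework trial",
    adopt_if="handles the weekly report task end to end without manual fixes",
    stop_if="needs more review time than writing the report by hand",
    start=date(2026, 1, 5),
    time_box_days=14,
)
print(exp.decide(date(2026, 1, 10), adopt_met=False, stop_met=False))
```

Checking the stop condition first is deliberate: it keeps a partly promising result from overriding the exit criterion that was agreed in advance.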

Managing the Anxiety Without Dismissing the Signal

The signal-to-noise ratio in AI coverage is currently poor. The "Installation Period" Carlota Perez describes is, by definition, a time of speculative excess, contradictory evidence, and premature obituaries for entire professions. Dismissing all of it as hype is as costly as believing all of it as fact.

A calibrated stance: take the structural claims seriously (execution is commoditizing, value is migrating up the stack, organizational redesign is necessary) while treating specific tool predictions and timeline claims with heavy skepticism. The structural claims have historical precedent and are already visible in hiring data and product roadmaps. The specific predictions—which model, which framework, which company—are noise.

The question is not whether this transformation is real. The question is whether you are building the judgment to navigate it before that judgment becomes expensive to acquire.

Practical preparation for this technology transformation is not a motivational call to action. It is a timing observation. The practitioners who prepared during the cloud transition before cloud became mandatory, who learned Terraform before their employers required it and built AWS literacy before the job descriptions demanded it, entered the normalized phase with accumulated advantages that took late adopters years to close. The same dynamic is in motion now. The window between "viable" and "mandatory" is where preparation compounds. Act inside it.

Chinese original: https://novvista.com/p/948/

Editorial Comment

The most striking element of this observation isn't the prediction of faster code; it’s the felt experience of the acceleration. When Andrej Karpathy notes that his workflow flipped from 80% manual to 80% agent-driven in a single month, we aren't just looking at an efficiency gain. We are witnessing the collapse of a specific type of human capital. For decades, the ability to speak the "language of the machine" was a high-walled garden. Now, that wall is being pulverized.

However, as a senior editor watching these cycles, I find the historical parallels to the "Productivity Paradox" most grounding. We often forget that when electricity first entered factories, productivity didn't budge for nearly forty years. Why? Because factory owners simply swapped a giant steam engine for a giant electric motor, keeping the same cramped, multi-story layouts and belt-driven shafts. Real growth only exploded when a new generation of engineers realized that small electric motors allowed for single-story, horizontal assembly lines—the birth of the Fordist revolution.

We are currently in that "steam-to-electric" transition phase with AI. Most companies are trying to shove AI agents into existing Jira tickets and legacy agile workflows. They are using a jet engine to power a horse carriage. The METR study cited—showing that experienced devs actually slowed down when using AI on familiar projects—is a cold shower for the hype cycle. It suggests that our current "factories" (our codebases and management structures) are still built for the manual era. The friction isn't in the AI's ability to generate code; it’s in our ability to review, test, and integrate it at the speed of thought.

The mention of Jack Dorsey’s radical downsizing at Block is a grim harbinger of the "One-Person Team" era. Whether or not "AI washing" is involved in corporate layoffs, the market narrative has shifted. The "intelligence tool" is now a valid excuse for structural leanness. If one person can truly do the work of a dozen, the very definition of a "company" changes. We move from a world of headcount-as-prestige to a world of leverage-as-prestige.

For the individual professional, the takeaway is clear: move up the stack or get crushed by the commoditization of the middle. If your value is in the "how"—the syntax, the boilerplate, the routine configuration—you are a scribe in the age of Gutenberg. The new power nodes are the "Publishers"—those who can define the problem, architect the solution, and maintain the "data flywheels" that AI cannot replicate.

We are currently in what Carlota Perez calls the "Installation Period"—a chaotic, speculative time of "vibe coding" and explosive app growth. It is noisy, messy, and filled with low-quality clones. But the "Deployment Period" is coming. That is when the dust settles, the bubbles pop, and we stop talking about the agents themselves and start talking about the entirely new business models they’ve made possible. The winners won't be the ones who wrote code the fastest; they will be the ones who realized that when code is free, the only thing that matters is the judgment of what to build with it.

The real danger isn't that AI will replace the programmer, but that we will spend the next five years trying to run "electric" agents in "steam-powered" organizations. The transition requires more than a subscription to a new tool; it requires a fundamental rewrite of how we define work itself.