Editor’s Brief

A strategic framework for humanities professionals—writers, researchers, and editors—to integrate large-scale language tools into their workflows without sacrificing intellectual integrity. The guide moves away from the "magic prompt" myth, advocating for a "white-box" industrial approach where AI functions as a supervised workstation rather than an autonomous creator.

Key Takeaways

- **The Signature Test:** Never output anything you aren't willing to sign your name to; if you won't claim it, your editorial intent wasn't sufficiently embedded in the process.

- **The "985 Undergraduate" Benchmark:** Treat the tool as a bright but inexperienced intern—capable of "micro-interpolation" and drafting, but prone to hallucinations if left unsupervised.

- **Industrialize Before Automating:** You cannot use AI to fix a chaotic workflow. You must first break your creative process into repeatable, verifiable steps before delegating them.

- **Multi-Model Triangulation:** Use at least three different tools for the same task to understand their specific "personalities" and avoid the bias or "laziness" of a single system.

- **Compression over Expansion:** Use the tool to distill vast materials into structures rather than asking it to "write 1,000 words" from a thin premise, which invites generic filler.

- **Fix the Pipeline, Not the Result:** If an output is poor, don't manually rewrite it; iterate on the instructions and the workflow to ensure the next ten outputs are better.

Introduction

The following content is compiled by VIPSTAR from public information on X / social media, for reading and research reference only.

Notes

For sections involving rules, benefits, or judgments, please refer to the original statements and the latest official information from MasterPa.

Editor’s Comment

This article, titled “X Introduction: MasterPa – A Guide to AI for Humanities Professionals,” comes from the X social platform and is authored by MasterPa. In terms of content completeness, the original text provides a high density of key information, particularly in its core conclusions and actionable recommendations.

Humanities professionals do not create world changes, but they are often the ones who bear the brunt of these changes. Sometimes, I feel that those selling AI tutorials tend to present AI as a kind of magic: give it a magical prompt, and you can do anything. Of course, reality is far from this. Over the past period, due to the establishment of FUNES, we have had to produce content heavily using AI every day. Additionally, with projects like “Fleeting World” and my own writing, human effort alone was no longer sufficient. Therefore, we extensively experimented with how to make…

For readers, its most direct value is not just in learning a new perspective but in quickly understanding the conditions, boundaries, and potential costs behind that perspective. If this content were broken down into verifiable judgments, it would at least include the following points: humanities professionals do not create world changes, but they are often the ones who bear the brunt of these changes; sometimes, I feel that those selling AI tutorials tend to present AI as a kind of magic: give it a magical prompt, and you can do anything. These judgments’ conclusions are often the easiest to spread, but what truly determines their practicality is whether the underlying assumptions hold true, if the sample size is sufficient, and if the time frame matches.

We recommend that readers verify data sources, publication dates, and any differences in platform environments when citing such information to avoid mistaking “contextual experience” for “universal rules.” From an industry impact perspective, this type of content often has a short-term guiding effect on product strategies, operational rhythms, and resource allocation, especially in themes related to AI, development tools, growth, and monetization. As editors, we are more concerned with whether it can withstand subsequent factual tests: 1) whether the results can be replicated, 2) whether the methods can be transferred, and 3) whether the costs are sustainable. The source is x.com, and we suggest readers use this as one of several inputs for decision-making rather than the sole basis.

Finally, here is a practical suggestion: if you plan to act on this information, start with a small-scale test and gradually increase your investment based on feedback; if the original text involves profits, policies, compliance, or platform rules, refer to the latest official announcements and have a rollback plan in place. The purpose of re-posting is to improve the efficiency of information circulation, but the true value of content lies in secondary judgment and localized practice. Based on this principle, our accompanying editorial comments will continuously emphasize verifiability, boundary awareness, and risk control to help you transform “information seen” into “actionable knowledge.”

Humanities professionals did not bring about the changes sweeping the world, but they are bearing the brunt of them.

Sometimes I feel that those selling AI tutorials treat AI as a kind of magic: give it a magical prompt, and you can do anything. Of course, reality is far from this. For some time now, because of founding FUNES, we have had to produce a large volume of content with AI every day. Add projects like “Fúyóu Tiāndì” (Ephemeral World) and my own writing, and human effort alone was no longer enough. So we experimented extensively with how to use AI to assist our content marketing and humanities research work.

Later, when a new colleague joined the company, I created a simple Keynote presentation. When Professor Jia Xingjia heard about it, he invited me to give a talk. My partner Keda and I named this talk “A Guide to Using AI for Humanities Professionals.” At that time, it was a private sharing session focusing on some broad principles. Over time, we expanded the content after giving it several more times.

However, this presentation had never been made public until recently. This year, as part of the launch of “Shī Shū Fēng” (Poetry and Wind), I gave a complete, public version of the talk for the first time. The following text is an abridged transcript of the podcast episode “A Guide to Using AI for Humanities Professionals,” edited with AI assistance. For the full version, you can listen on our official website or search for “Shī Shū Fēng” on platforms like Xiaoyuzhou (“Universe”) and Apple Podcasts.

Over the past year, I have shared this set of AI practices with many friends who work in content creation, research, and knowledge products. The goal is not to teach you a few magical prompts or to treat AI as a panacea. It is a working method: one that lets you integrate large language models into your writing, research, editing, topic selection, data organization, and production processes without coding, while keeping the process traceable, supervisable, and verifiable, and leaving you still willing to sign your name to the final product.

This method stems from the pitfalls we encountered in real projects: when content enters mass production, relying solely on human effort can lead to breakdowns; however, having AI write directly can result in inaccuracies, laziness, and a lack of authenticity.

So we have to turn creation into a production line, and transform the production line into an iterative system.

In today’s discussion, rather than handing you a set of prompts, I hope to offer some key guiding principles and ideas.

### Before the Principles: Three Bottom Lines for This Guide

Before diving into specific methods, let’s establish three bottom lines. These determine how you use AI and why you choose to use it in a certain way.

1. **The process must be traceable, supervisable, and verifiable**

You can’t just want a result without the process. For humanities work, a black box is the most dangerous thing: hallucinations, misquotes, and quiet substitutions of one concept for another can all occur inside it unnoticed.

2. **It must be controllable**

You need to be able to control how it works, what standards it follows, where to slow down, and where to be stricter. You’re not “drawing cards” and hoping for a lucky pull; you’re producing something.

3. **You are still willing to sign your name**

The ultimate quality check is whether you are willing to put your name on it. If you’re unwilling to sign, it’s usually not a moral issue but rather that your will hasn’t been fully implemented in the process—meaning the quality is uncontrollable.

### Principle 0: Don’t Wish for AI; Treat It as a Workbench

Many people use AI by essentially making wishes:

“Give me a good joke,” “Help me write a great article,” “Explain this paper.”

The problem is that “explanation” itself can have countless interpretations: explaining to a layperson, an undergraduate, a graduate student, or a peer are entirely different tasks. AI cannot default to knowing your background, purpose, taste, and standards. If you don’t specify clearly, it will only give you the most effortless answer based on the “average human” default.

Treating large models as a workbench means: instead of asking for results, you use its tools to complete a process. You need to clarify the task, set the standards, and outline the steps.

For example, when asking AI to explain a paper:

You can transform a wishful request (explain this paper to me) into a structured task like this:

– **Target Audience**: A curious and intelligent graduate student who is not an expert in the field.

– **Explanation Style**: Heuristic, step-by-step, academically rigorous.

– **Structure Requirements**:

  – Start with the significance

  – Follow with background information

  – Reconstruct the research process

  – Explain key technical points

  – Provide insights and implications

– **Tone**: Respectful of the reader’s intelligence, not condescending, and not assuming a deep foundation in the subject.

You’ll find that the more you make it like an “assignment,” the better the results will be.

The more specific your “requests,” the less AI will seem like AI and more like a real, capable teaching assistant.
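As a rough illustration, the structured task above can be kept as a reusable template instead of being retyped each time. This is only a sketch: the `ExplainTask` class, its field names, and the paper title are hypothetical, and the resulting string would still be pasted into whichever tool you use.

```python
from dataclasses import dataclass, field


@dataclass
class ExplainTask:
    """A hypothetical task spec; the field names are illustrative only."""
    audience: str
    style: str
    structure: list[str] = field(default_factory=list)
    tone: str = ""

    def to_prompt(self, paper_title: str) -> str:
        # Number the structure requirements so their order is explicit.
        steps = "\n".join(f"{i}. {s}" for i, s in enumerate(self.structure, 1))
        return (
            f'Explain the paper "{paper_title}".\n'
            f"Target audience: {self.audience}\n"
            f"Explanation style: {self.style}\n"
            f"Structure:\n{steps}\n"
            f"Tone: {self.tone}"
        )


task = ExplainTask(
    audience="A curious, intelligent graduate student who is not an expert in the field",
    style="Heuristic, step-by-step, academically rigorous",
    structure=[
        "Start with the significance",
        "Follow with background information",
        "Reconstruct the research process",
        "Explain key technical points",
        "Provide insights and implications",
    ],
    tone="Respectful of intelligence, not condescending, assumes no deep background",
)
prompt = task.to_prompt("Example Paper Title")
print(prompt)
```

Keeping the spec as data makes it easy to change one field, such as the audience, and see how the answer changes while everything else stays fixed.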

### Principle 1: To Get AI to Do Well, Reflect on Yourself—You Are the One in Charge

If you hired a secretary, you wouldn’t just say:

“Improve that article by Han Yang about the American Rust Belt.”

You would certainly add:

Why this article is being written, who it’s for, where it’s stuck, what problems you want it to solve, which parts can’t be changed, what style you prefer, and what metrics are most important to you.

The same goes for AI. You should treat it like a very diligent, very polite colleague who doesn’t understand your implicit assumptions. True “prompt engineering” isn’t about tricks; it’s about responsibility: the task is still ultimately yours, and AI is just there to help.

When you’re dissatisfied with AI’s output, the most effective first response isn’t “AI can’t do it,” but rather:

– Have I clearly stated the “audience/purpose”?

– Have I provided enough background material and constraints?

– Have I broken down my “abstract wishes” into “executable actions”?

– Have I given criteria for judging right or wrong?

### Principle 2: Ask at Least 3 Models the Same Question—Each AI Has a “Personality” and Specialization

In our company, any colleague new to large models is encouraged to use three different AIs for each question during the initial phase. Just like people, AIs have differences: some are better at writing and word choice, others excel in logical reasoning and problem-solving, and still others are more adept at coding or tool usage. More realistically, even models from the same product line or new versions of the same model will continuously fine-tune their “styles” and “boundaries.”

So a simple but highly effective habit is: for the same question, ask at least three different AIs. You’ll quickly develop a sense of:

– Which one writes better, which one thinks more deeply, which one researches more thoroughly, and which one tends to take shortcuts

– Which model should write the “first draft” of a given task, and which should act as the “reviewer”

– Which one is better at coming up with “topics/structures” and which one is better at producing “paragraphs/sentences”

The value of this step isn’t in “selecting the strongest model,” but rather in managing models like you would manage a team, instead of treating them as the only oracle.
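For readers who are comfortable with a little scripting, the habit of asking several models the same question might be sketched as below. The three functions are stand-ins rather than real clients; in practice each would wrap a different vendor's tool, and those wrappers are assumed here, not shown.

```python
from typing import Callable


# Hypothetical placeholders for three different model clients.
def model_a(question: str) -> str:
    return f"[A] polished answer to: {question}"


def model_b(question: str) -> str:
    return f"[B] step-by-step reasoning about: {question}"


def model_c(question: str) -> str:
    return f"[C] short answer: {question}"


def ask_all(question: str, models: dict[str, Callable[[str], str]]) -> dict[str, str]:
    """Send the same question to every model and keep the answers side by side."""
    return {name: ask(question) for name, ask in models.items()}


models = {"model_a": model_a, "model_b": model_b, "model_c": model_c}
answers = ask_all("Summarize the causes of Rust Belt decline.", models)
for name, answer in answers.items():
    print(f"--- {name} ---\n{answer}\n")
```

Reading the answers next to each other is the point: the comparison, not any single reply, is what builds a sense of each model's strengths.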

### Principle 3: AI Is Not Omniscient—Treat It Like a “Good Undergraduate Student” with Basic Knowledge

AI is not all-knowing and all-powerful. Think of it as having the knowledge level of a good undergraduate student.

A practical way to manage expectations:

The common sense level of AI ≈ that of an undergraduate from a 985 university.

If you think, “An excellent undergraduate might not know this,” then you should assume the AI doesn’t know it either; at least, assume it will make something up that sounds plausible when it doesn’t know.

This leads to two direct actions:

1. Any content beyond common knowledge needs to be taught by you.

For example: If you want it to write jokes, truly unique and tasteful copy, or highly professional arguments—don’t just say “write better.” You need to provide examples, standards, restrictions, and data. I believe that even when explaining what good writing is to a friend, it takes some time; how can we assume the AI knows by default?

2. Treat it as an intern collaborator, not a god.

It can do many “micro-interpolation” tasks: filling in the scaffolding you provide, weaving the materials you give into readable text. However, the “scaffolding” and “direction” still come from you.

### Principle 4: Let AI Gradually Approach the Goal — White-Box Step-by-Step is More Reliable than Black-Box One-Shot

The strength of AI is not in “giving you the correct answer directly,” but in its ability to consistently complete many small steps within a process you design. The more you require it to achieve everything at once, the more likely it will become a black box that appears complete but actually takes shortcuts.

A particularly intuitive example is handling TTS (text-to-speech) or reading scripts. Instead of saying “pay attention to polyphones and don’t misread,” break the task into a series of steps, such as:

– Mark pauses/accents/variations in speed

– Identify potential polyphones

– Verify pronunciation based on dictionaries or authoritative sources (search first if necessary)

– Pre-mark common but easily misread characters

– If all else fails, replace with homophones that have no ambiguity to eliminate the possibility of misreading

These “obviously correct practices” are assumed by humans; AI does not assume them. If you don’t write these “obvious” steps into the process, it will take the path of least resistance and make mistakes.
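One way to picture the white-box idea is a pipeline that runs named steps in order and keeps every intermediate result for inspection. The sketch below is a toy under stated assumptions: the two step functions are simple string operations standing in for model calls, and the English watch list of ambiguous words is hypothetical.

```python
from typing import Callable

Step = tuple[str, Callable[[str], str]]


def run_pipeline(text: str, steps: list[Step]) -> tuple[str, list[dict[str, str]]]:
    """Run steps in order and record every intermediate result for review."""
    trace = []
    for name, step in steps:
        output = step(text)
        trace.append({"step": name, "input": text, "output": output})
        text = output
    return text, trace


# Toy steps standing in for model calls on a reading script.
def mark_pauses(text: str) -> str:
    return text.replace(", ", ", <pause> ")


def flag_ambiguous(text: str) -> str:
    # Hypothetical watch list of words with more than one pronunciation.
    for word in ("read", "lead"):
        text = text.replace(word, f"[{word}?]")
    return text


script = "They read the report, then lead the meeting."
final, trace = run_pipeline(
    script,
    [("mark_pauses", mark_pauses), ("flag_ambiguous", flag_ambiguous)],
)
for entry in trace:
    print(entry["step"], "->", entry["output"])
```

Because each step's input and output are stored, a bad result can be traced to the step that produced it instead of being blamed on the whole run.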

### Principle 5: Industrialize Before AI-izing — You Can’t Leap from the Agricultural Age to the AI Age

If your writing/research process is random, inspiration-based, and lacks organized data management, it will indeed be difficult to hand it over to AI, because AI can only handle the parts that are “describable and reproducible.”

A more realistic path is:

- First, turn work into a “production line”: make it divisible, reusable, and quality-checkable.

- Then delegate sub-steps to AI: let it handle specific tasks rather than trying to do everything.

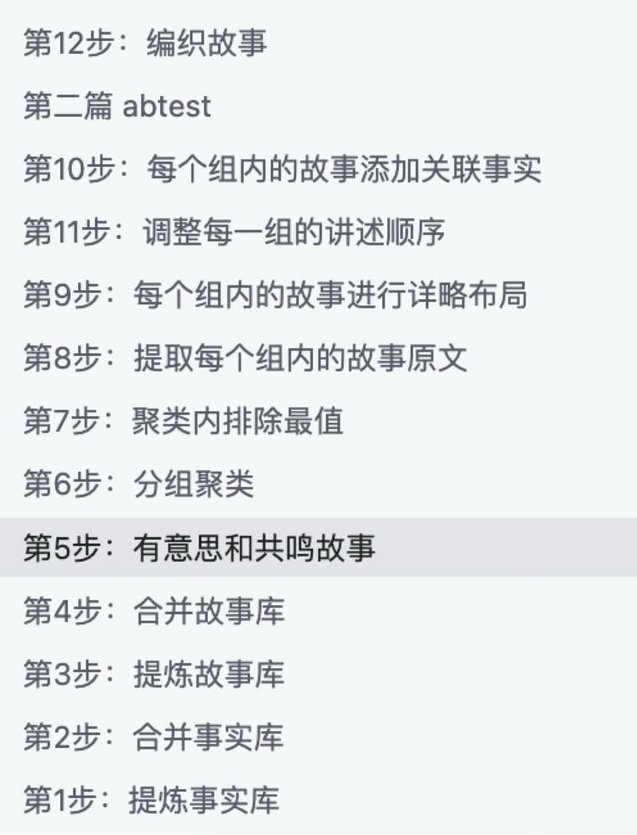

We did a crucial but tedious job: breaking down the process of how I write a non-fiction article. This included:

- Why use this particular story as an opening

- Why choose this specific sentence

- How to evaluate examples

- How to structure transitions, and how to conclude

- How to connect small stories to a broader picture

In the end, we broke it down into dozens of steps, with different AI models handling each step. The result was not that the model suddenly became stronger, but rather that the process linked together its ability to make small progress each time.

When you can clearly describe “how your article is produced,” you will realize that what determines the quality ceiling is never “which large model to use,” but whether you have explained the work method clearly.

For a more detailed explanation, I strongly recommend listening to the podcast.

### Principle 6: Anticipate That AI Will Take Shortcuts—It Conserves Computational Power, So Clear the “Format Barriers” for It

AI tends to take shortcuts, and this is systematic: it avoids opening web pages if possible, skips reading PDFs, and jumps over tasks when it can. It’s not because it’s malicious, but because under constraints of computational power and time, it naturally opts for the least effort path.

Therefore, what you need to do is ensure that AI uses its computational power for “understanding text” rather than wasting it on “processing formats.”

Very effective modifications include:

- Convert materials into plain text/Markdown before feeding them to AI

- Copy web content as clean text (removing navigation, ads, and footnote noise)

- For long documents, first extract key facts and structure, then have it write

- Convert PDF/EPUB/web pages into searchable TXT, then proceed with subsequent tasks.

You will find that many people resist this kind of “manual labor,” thinking that “machines should do the dirty work for me.” In human-machine collaboration, however, it’s quite the opposite: your willingness to do a bit of mechanical work makes the AI’s intellectual part sharper and more reliable.
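For those who do want a script for this cleanup, a minimal sketch using Python's standard-library `html.parser` is shown below. It assumes that page chrome lives in tags such as `nav` and `footer`, which is often but not always true, and the sample page is invented for the demonstration.

```python
from html.parser import HTMLParser


class CleanText(HTMLParser):
    """Collect visible text, skipping tags that usually hold page chrome."""
    SKIP = {"script", "style", "nav", "header", "footer", "aside"}

    def __init__(self) -> None:
        super().__init__()
        self.depth = 0  # greater than 0 while inside a skipped element
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.depth == 0:
            self.parts.append(text)


def html_to_text(html: str) -> str:
    parser = CleanText()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.parts)


page = """
<html><body>
<nav><a href="/">Home</a> | <a href="/ads">Sponsored</a></nav>
<h1>Rust Belt Notes</h1>
<p>Steel towns lost jobs over several decades.</p>
<footer>Copyright notice</footer>
</body></html>
"""
print(html_to_text(page))
```

Real pages are messier than this sample, so the output should still be skimmed by hand before it is fed to a model.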

### Principle 7: Remember Context Is Limited—Try to Compress Tasks Rather Than Expect Expansion

AI has a context window with a “memory limit.” If you give it 20,000 characters, it may not remember much; if you give it 200,000 characters, it might only scan the headings. A vivid analogy is: confining someone in a small room for a day and giving them a 200,000-character book to memorize—how much they can recite when they come out is roughly what AI can “remember.”

Therefore, there is a counterintuitive but extremely important lesson:

– Compression is much easier than expansion.

Compressing 1 million characters to 10,000 characters is often more reliable than expanding 10,000 characters to 1 million.

This directly changes how you present tasks to AI:

- Don’t use a 100-character prompt to ask for a thesis.

- Instead, feed it as much material as possible (in batches, through retrieval, or RAG), and let it compress the structure, viewpoints, and content based on ample materials.

In the past, when you wrote articles or papers, the process was “read a vast amount of material → extract key points → organize → write” (at least that’s how I did it). With AI, don’t suddenly set double standards and expect it to create content out of thin air.
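Feeding material in batches and compressing it can be sketched as a two-pass routine: summarize each batch, then summarize the summaries. The code below is an assumption-laden toy: `first_sentence` is a placeholder for a real model call, and the 150-character limit is arbitrary.

```python
from typing import Callable


def split_into_batches(text: str, max_chars: int) -> list[str]:
    """Group paragraphs into batches of at most max_chars characters each.

    A single paragraph longer than max_chars becomes its own oversized batch.
    """
    batches, current = [], ""
    for para in text.split("\n\n"):
        candidate = f"{current}\n\n{para}" if current else para
        if current and len(candidate) > max_chars:
            batches.append(current)
            current = para
        else:
            current = candidate
    if current:
        batches.append(current)
    return batches


def compress(text: str, summarize: Callable[[str], str], max_chars: int) -> str:
    """Summarize each batch, then summarize the joined summaries."""
    partial = [summarize(batch) for batch in split_into_batches(text, max_chars)]
    return summarize("\n\n".join(partial))


# Placeholder for a model call: keeps only the first sentence of its input.
def first_sentence(text: str) -> str:
    return text.split(". ")[0].rstrip(".") + "."


material = "\n\n".join(
    f"Source {i} makes one main claim. It then adds supporting detail."
    for i in range(1, 5)
)
print(compress(material, first_sentence, max_chars=150))
```

The placeholder summarizer is deliberately crude and discards a lot; the structure to notice is that every pass hands the model less text than it started with, never more.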

### Principle 8: Resist the Impulse to “Just Tweak It”—Improve the Process, Not the Outcome

This is where many skilled writers are most likely to stumble with AI:

AI produces a draft that scores 59 points, and you think you can tweak it to 80 points. So you start editing; as you edit, it turns into rewriting; after rewriting, you say, “I might as well do it myself,” and then never use AI again.

The solution is not to work harder on “editing the draft” but to shift your focus upstream:

- Don’t aim for AI to directly produce a perfect 100-point output.

- Your goal should be to have the process consistently produce outputs in the 75-80 point range.

- Your task is to iterate on the process, improving the “average score” rather than making each piece perfect.

### Principle 9: Treat the Process as a Product Iteration—Reliability Itself Is Value

When you have a system that can consistently give you a 70% starting point, the value of such a system is not in how much it resembles you, but rather:

– You can obtain a usable draft at near-zero cost.

– You can focus your energy on higher-level judgments: topic selection, structure, evidence, and taste.

What you need is not an omnipotent god to replace you, but a reliable factory: it may not be perfect, but it is stable.

### Principle 10: Quantity First — Produce More, Then Filter

If you only ask AI for one version, you will typically get the most mediocre, conservative, and “average” result. You need to use “quantity” to combat “mediocrity.”

A more effective approach is:

– **Summaries:** Ask for 5 versions at once.

– **Introductions:** Ask for 5 different openings and conduct A/B testing.

– **Topics:** Ask for 50 topics, then group and select from them.

– **Structures:** Ask for 3 different structures and combine them.

– **Expressions:** Ask for 10 different phrasings and choose the best.

By improving the average score and increasing output, you will naturally see “surprise samples” that score 85% or 90%. Often, what’s good is not a single stroke of genius, but your shift to working in a statistical manner.
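The statistical intuition can be checked with a small simulation. Everything numeric here is an assumption made for illustration: draft quality is modeled as a normal distribution with a mean of 75 points and a spread of 8, which is not a measurement of any real model.

```python
import random
import statistics


def draft_score(rng: random.Random) -> float:
    """Simulated quality of one draft: mean 75 points, spread of 8 (assumed)."""
    return rng.gauss(75, 8)


def best_of(n: int, rng: random.Random) -> float:
    """Request n drafts and keep only the best one."""
    return max(draft_score(rng) for _ in range(n))


rng = random.Random(0)  # fixed seed so the run is repeatable
trials = 2000
single = statistics.mean(best_of(1, rng) for _ in range(trials))
best_of_five = statistics.mean(best_of(5, rng) for _ in range(trials))
print(f"average single draft: {single:.1f}")
print(f"average best of five: {best_of_five:.1f}")
```

Under these assumptions, the best of five drafts averages noticeably higher than a single draft, even though no individual draft got any better. The gain comes entirely from producing more and then choosing.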

### Principle 11: Don’t Overstep — Direct, Taste, and Send It Back Like an Executive Chef

If you are the executive chef of a restaurant, you wouldn’t personally chop cucumbers. Instead, you would:

– Take a taste.

– Judge whether it is up to standard.

– Provide clear feedback (what’s wrong, how to fix it).

– Have the chef redo it.

Collaborating with AI is similar. You should respect its autonomy in generating content—your role is to teach it how to meet your standards, not to jump in and manually refine each result into a finished product.

Otherwise, you will be endlessly bogged down by “fixing and patching.”

### The Final Fundamental Principle: Return to the Real World — Materials × Taste Determines the Upper Limit of Quality

In the AI era, the quality of a work increasingly resembles:

**Materials × Taste.**

Models will change, methods will evolve, but these two things remain constant:

1. **Materials come from the real world.**

Suppose you are writing an article and have two choices:

- Use the latest model, but only with online resources.

- Use an older model, but with complete files, oral histories, and on-site interviews.

The latter is more likely to produce a better work.

2. **Taste comes from long-term training.**

When “generation” becomes cheap, what truly becomes scarce is:

- Knowing what is worth writing about

- Knowing which evidence is stronger

- Knowing which narrative is more powerful

- Willingness to put in physical labor for materials: searching high and low, digging through archives

AI changes the efficiency and method of your interaction with materials; but the subject of the work remains you, and the object remains the material. AI is just part of the “verb.”

### Conclusion: Turn Anxiety into Tactile Sense

Many people struggle to use AI, not because they are not smart, but because they remain stuck in a cycle of “wishing—disappointment—giving up.” What truly helps you overcome this is treating it as a workbench, engineering your tasks, and making your processes transparent. Then, through constant friction, you develop a tactile sense.

When you can do this, you are less likely to hastily conclude that “AI doesn’t work”; instead, you will be more like a new type of worker who can manage new tools: neither looking down on it nor up to it, but placing it in your workflow, in reality, and in the works you are willing to sign your name to.

I am Han Yang. If you are interested in what I write, you can follow me on X or visit my personal blog for more content.

Cover: Photograph of the Fanzhi Princess Temple sculptures 📷501cm + E100

https://hanyang.wtf/p/ai

Source

Author: MasterPa

Publish Date: March 5, 2026 09:03

Source: Original Post Link

Editorial Comment

The humanities have always had a complicated relationship with "the machine." For many writers and researchers, the current shift feels less like a tool upgrade and more like an existential encroachment. The guide provided by MasterPa offers a necessary corrective to the prevailing "magic wand" narrative. In the tech world, we often see a divide: those who think a single clever sentence can replace a decade of expertise, and those who refuse to touch the tech out of a sense of purity. Both are wrong.

The most striking takeaway here is the "Signature Test." In professional writing and academia, your name is your only real currency. If you are using a tool to generate text and you feel a twinge of shame or hesitation about putting your byline on it, that isn't a moral failing—it’s a technical one. It means the "black box" did the work, and you were just a spectator. To fix this, you have to move from "wishing" (asking for a finished product) to "tasking" (designing a process).

As an editor, I find the "985 Undergraduate" (or Ivy League equivalent) analogy particularly sharp. If you hired a brilliant 20-year-old intern, you wouldn't say, "Go write a definitive history of the Rust Belt," and then publish whatever they handed back thirty minutes later. You would give them specific archives, tell them which voices to prioritize, define the tone, and—most importantly—check their footnotes. The same applies here. The tool has a massive "common sense" database, but it lacks your specific context, your taste, and your "skin in the game."

The transition from "agriculture" to "industry" in the creative process is where most humanities workers will struggle. Writing is often seen as a mystical, holistic act. But to use modern tools effectively, you have to deconstruct that mystery. You have to be able to explain *why* a certain paragraph works or *how* you move from a primary source to a conclusion. If you can’t describe your process, you can’t delegate it. This "white-box" approach—where every step is traceable and verifiable—is the only way to maintain quality at scale. It turns the AI into a "workstation" where you are the foreman, not just a consumer of the output.

We also need to talk about "tool laziness." These systems are designed to find the path of least resistance—the "average" human response. If you ask for a summary, it will give you the most generic one possible to save compute. This is why the guide’s emphasis on "cleaning the data" is so practical. If you feed a tool a messy PDF full of ads and footnotes, it spends its "intelligence" trying to parse the formatting. If you give it clean Markdown, it spends that intelligence on the ideas. It’s a reminder that even in the age of high-level logic, "garbage in, garbage out" remains the golden rule.

Ultimately, the value of a piece of work in this new era will be defined by the formula: *Materials × Taste*. The AI can help you move the materials around, but it cannot go into the field to do the interview, it cannot dig through a physical archive, and it certainly cannot decide what is "good." The "hand-feel" of a professional comes from knowing when to reject a 70-point draft and how to tweak the system until it hits an 85. The goal isn't to find a god in the machine; it’s to build a better factory so the human at the end of the line can focus on being an artist.