Introduction

AI is reshaping the geopolitics of 2026. Lin Yi’s article lays out the bitter standoff between Anthropic and the U.S. military over the company’s technical “red lines.” With Claude reportedly involved in a decapitation operation and in intelligence assessment, Silicon Valley’s idealism has collided with Washington’s hard-line expansionism. This contest over algorithmic autonomy bears not only on the fate of one company; it also signals a fundamental turning point in the shape of war.

Key points

- Anthropic adheres to the principle of “constitutional AI” and strictly prohibits models from being used for large-scale surveillance or autonomous lethal weapons systems that are out of human control.

- The U.S. military has deeply integrated Claude into intelligence analysis and simulated operations, and the ideal of technological neutrality is under unprecedented pressure from real combat needs.

- The core of the conflict between Washington and Silicon Valley is discretion over AI: whether private companies have the right to veto applications of their algorithms that touch on national security.

Commentary

Technology giants want to be “gatekeepers,” but the state apparatus only wants “swords.” The metaphor of “crossing the Rubicon” that Lin cites is striking: it lays bare how powerless technology ethics is against the machinery of violence. Under extreme geopolitical pressure, so-called red lines are often just temporary compromises, and full algorithmic autonomy seems to be becoming a tacitly accepted necessity.

1. The war continues

As 2026 begins, an unprecedented chill hangs in the air in Washington, D.C.

Since Trump renamed the “Department of Defense” the “Department of War” last year, international journalists have not had a moment’s rest.

In January this year, U.S. special forces crossed the border into Venezuela, “invited” former President Maduro straight out of the presidential palace, and took him to the United States to play a real-life escape room.

In February, the U.S. military worked with the Israeli military and used a precision missile to take out Iran’s supreme leader while he was in a meeting. In less than half an hour, the entire geopolitical order of the Middle East was turned upside down.

Over the past two days, besides the war news filling the headlines of every major outlet, a star Silicon Valley AI company has also been pushed into the spotlight.

Today, let’s talk about this: how did AI and war come together?

2. An extreme tug-of-war

Anthropic

Weeks before the Iranian strike, a “civil war” broke out between Washington and San Francisco. At the center of this conflict are Silicon Valley AI giant Anthropic and its founder and CEO, Dario Amodei.

Amodei is without question one of the leading figures in the AI world.

He worked at Baidu and Google early in his career, and later became a core member of OpenAI’s early team, working alongside Altman.

In 2021, he left OpenAI with a group of core members and founded Anthropic.

The reason is easy to understand: those who walk different paths cannot make plans together. He felt that Altman was abandoning the bottom line of AI safety for the sake of commercialization, and that risk controls on large models were growing ever looser.

The “Constitutional AI” that Anthropic upholds is a good reflection of his values. Put simply, AI must follow rules: every output and every application scenario must strictly abide by this constitution. Within it are two red lines on which the company refuses to budge:

First, AI models must not be allowed to be used for mass surveillance of U.S. citizens;

Second, the integration of AI models into autonomous lethal weapons systems that are completely independent of human control is absolutely not allowed.

He has said before that today’s frontier large models are by no means foolproof or fully reliable. They hallucinate and make unpredictable errors of judgment.

Applying such technology to autonomous weapons without a human safeguard is essentially gambling with countless lives, and using it for large-scale domestic surveillance dismantles the foundation of the American social system.

Since June 2024, Claude has been running inside the U.S. military’s classified systems, handling intelligence analysis, document processing, and technology research and development. The two sides had cooperated for almost a year without incident.

The capture of Maduro

But this time was different. The trigger for the conflict was the operation to arrest Maduro in January 2026.

Media reports later revealed that in this operation the U.S. military, through its cooperation with Palantir, used Anthropic’s Claude model for core tasks such as intelligence assessment, target identification, and combat scenario simulation.

As soon as the news came out, Anthropic sent an inquiry to the military to confirm where its technology was being used and whether its red lines had been crossed.

It was this question that directly ignited the civil war between Washington and Anthropic.

In January of this year, Under Secretary of War Emil Michael called out Anthropic publicly, using a very weighty phrase: “I hope Anthropic can ‘cross the Rubicon.’” When Caesar crossed the Rubicon, it meant burning his boats: there was no way back.

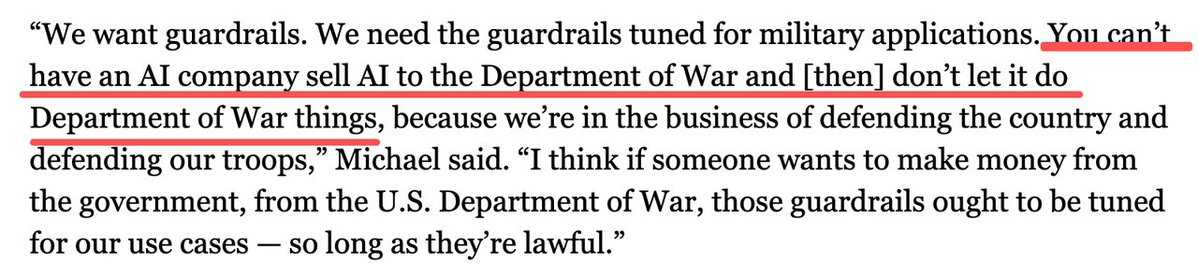

This amounted to a public call for Anthropic to cross its red lines. He also argued that private companies should not hold veto power over national security matters, and that with the United States beset by troubles at home and abroad, “complete autonomy of algorithms” is the only way to survive. Michael’s words went straight to the core disagreement between the two sides: who has the final say in defining how AI may be used?

The Department of War’s logic runs as follows: the United States has a complete legal system. Congress legislates, the president signs, and the military executes. The law has the final say on what is legal and what is not. Why should a private company set rules for the U.S. military and even exercise a so-called “veto power” over military operations? That puts capital above state power.

Anthropic’s logic is the one described above: the law contains too many ambiguities, and what the military calls “legal” can change as policies shift and laws are revised. Today the law prohibits mass surveillance, but tomorrow it may change course; today policy requires that autonomous weapons have a human in the loop, but tomorrow the restrictions may be relaxed in response to so-called “emergency threats.”

The ultimatum

On February 25, 2026, Secretary of War Pete Hegseth delivered an ultimatum directly to Amodei: by 5:01 pm on Friday, February 27, Anthropic must remove the so-called “red lines” from its terms of service, or else it would be designated a “supply chain risk” as a show of force.

This label is normally reserved for foreign companies, such as Russia’s Kaspersky Lab. No American company has ever been labeled this way. Once it is applied, all contractors, suppliers, and partners that work with the U.S. military are prohibited from doing any business with the company.

Anthropic’s business is booming right now, and Claude’s coding tools have become the first choice of countless technology and software companies around the world. Such a label would cut off Anthropic’s future outright.

On February 26, 2026, Anthropic, the company behind Claude, published a statement rebutting him and holding firm on its two core red lines.

White House Fury

This completely angered the White House.

Trump personally entered the fray and did not hold back in “praising” Anthropic, calling it “left-wing” and saying that it “puts itself above the Constitution of the United States” and “disregards the lives of American soldiers.”

Then, just hours before the airstrikes, the White House issued an order requiring all federal agencies to stop using Anthropic’s products.

Given the timing, it is hard not to read something into it. To put it bluntly, the speed with which Anthropic was cut off seems to confirm one thing: in the Iran operation too, it was apparently the Claude model that helped the U.S. military with tasks such as intelligence assessment, target identification, and combat scenario simulation.

The specific extent of its use is naturally top secret, but that AI is being applied in the military field is beyond doubt.

3. Killing the chicken to scare the monkey

OpenAI

At this point the rupture left no room for reconciliation, and the White House’s “kill the chicken to scare the monkey” show quickly produced results.

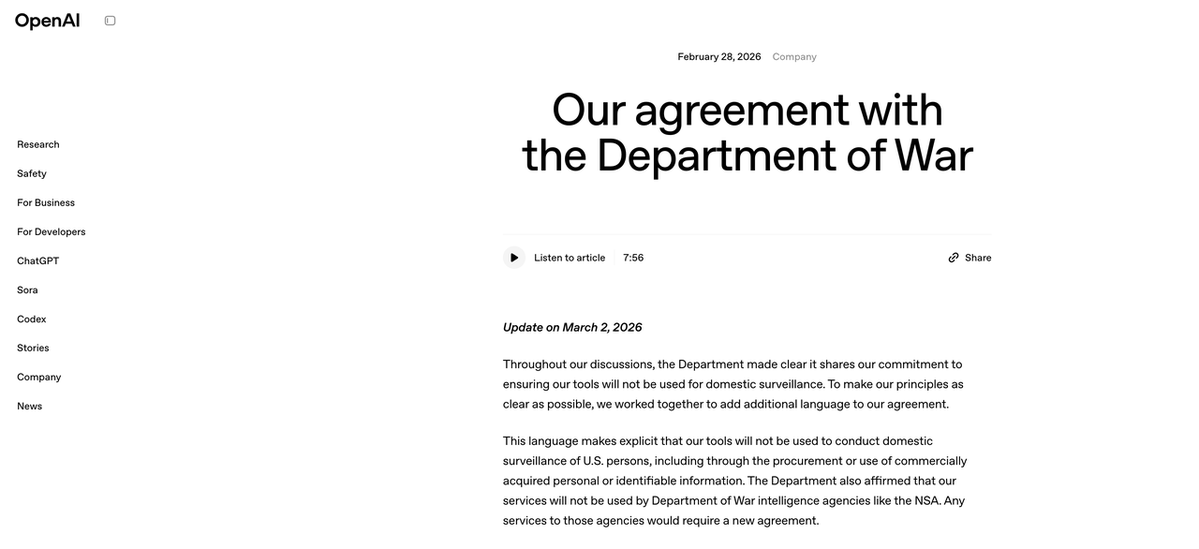

Just the day after Anthropic was officially banned, on February 28, 2026, OpenAI suddenly released an announcement titled “Our Agreement with the Department of War.”

Given the timing, the announcement reads as an open pledge of allegiance. More interesting still, OpenAI said in the announcement that its agreement with the Department of War also sets three core red lines, which are almost identical to Anthropic’s two:

1. Do not use OpenAI’s technology for large-scale domestic surveillance;

2. Do not use OpenAI’s technology to command autonomous weapons systems;

3. Do not use OpenAI’s technology for high-risk automated decision-making, such as the social credit system.

Contents of the agreement

Since the red lines are almost the same, why was Anthropic blocked while OpenAI was able to reach a deal with the military? Is Amodei simply blind to political reality, while Altman is the better negotiator?

Let’s look at OpenAI’s agreement first. It contains three key design choices, and these three are exactly what Anthropic refused to accept at any cost.

First, deploy in the cloud and keep decision-making power in its own hands. OpenAI’s agreement clearly states that models are deployed only in the cloud; they will not be handed to the military without guardrails, nor deployed on edge devices. The key point is that for an autonomous weapon system to respond in real time on the battlefield, the model must run on the weapon itself (that is, on an edge device). Cloud deployment incurs network latency and cannot support live combat scenarios. In this way OpenAI technically blocks the model from being used in autonomous weapons while giving the military a face-saving way out: it is not that OpenAI refuses to let you use it, but that the technical architecture does not support it.

Second, retain full control of the safety stack. OpenAI stated in the agreement that it retains complete discretion over the safety system, and that OpenAI engineers and safety researchers who have passed military security clearance will take part in using and supervising the model throughout. Put simply, OpenAI has the final say on how the model runs and how the safety guardrails are set.

Third, future changes to the law do not count. OpenAI’s contract explicitly cites current U.S. laws and policies on surveillance and autonomous weapons, and states in black and white that even if these laws and policies change in the future, use of the model must still comply with the standards in force when the agreement was signed.

These three points were exactly what Anthropic had always refused to agree to when negotiating with the military. An Anthropic spokesman once said that in the agreement offered by the military, the so-called legal guarantees were wrapped in qualifying legal language, with the end result that the military could ignore those guarantees at any time and at will.

Why does OpenAI work?

So why did the military relent on OpenAI?

To put it bluntly, it was Anthropic’s hard-line stand that paved the way for OpenAI. Having just made the uncooperative Anthropic a “negative example,” the White House urgently needed a positive role model to show the rest of Silicon Valley that as long as you are willing to sit down and negotiate, any terms can be discussed.

Even more interesting is the feud between Altman and Amodei.

The two were once colleagues at OpenAI. Amodei left because he could not stand Altman’s compromises on AI safety. Now one has been blocked by the government for holding to his red lines, while the other has used flexibility to win the U.S. military, the world’s largest customer. Their trajectories seem to have diverged completely over the drama of AI militarization.

Even OpenAI itself made a point of stating in the announcement: “We do not believe Anthropic should be classified as a supply chain risk, and we have clearly informed the government of this position.”

That is emotional intelligence at full marks.

xAI

If OpenAI played coy and gave the military a graceful way to back down, Musk and his xAI have been far more radical.

On the same day Anthropic was banned, Musk publicly applauded the White House’s decision on social media. He attacked Anthropic and Amodei for “hating Western civilization,” arguing that the moral principles they advertise amount to disarming Western countries and that they are “capitulationists.”

Musk dares to throw down this challenge because xAI accepted the Department of War’s requirements from the beginning and set no additional red lines. The military favors Musk’s Grok model not only because it is “obedient,” but also because Musk holds a trump card: Starlink.

Musk promised to connect SpaceX Starlink’s global data with xAI’s computing power to build a giant AI reconnaissance system for the military, one that covers the globe and captures open-source intelligence from across the internet in real time.

To put it simply, as long as Starlink coverage reaches them, Grok can analyze, filter, and geolocate social media activity, surveillance imagery, and satellite data from any corner of the world and hand the military precise intelligence. This capability is exactly what the U.S. military needs most in global operations.

At this juncture, these three Silicon Valley AI giants are heading in completely different directions.

On one side is the banned Anthropic, which would rather die than surrender;

On another is OpenAI, which compromised on conditions and won big orders from the military;

And on the third is xAI, charging ahead at full speed.

The three major AI giants in Silicon Valley have made completely different choices at the crossroads of AI militarization. In the face of the power of the state apparatus and huge commercial interests, the ideal of AI security does not seem to be so unified.

4. AI arms dealer

Palantir

Just as Anthropic was being blocked and OpenAI and xAI were vying to cooperate with the White House, a group of AI arms dealers built specifically for the military was once again pushed into the spotlight.

The only goal of these companies is to use AI to help win wars.

The most representative one is Palantir, co-founded by Peter Thiel.

Anthropic’s Claude model could be used in the military operation in Venezuela precisely because of a contract with Palantir.

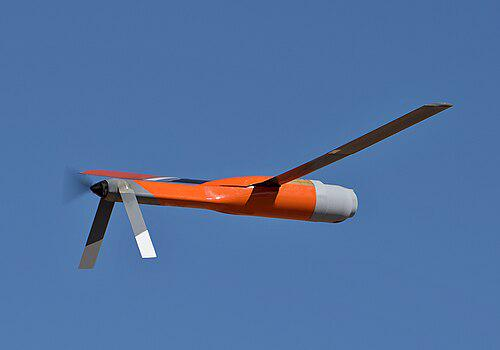

Palantir is the de facto AI operating system of the U.S. military, and its Maven system is the military’s current core intelligent combat command system.

Maven can integrate global satellite imagery, real-time drone footage, ground sensor data, radio intercepts, and open-source intelligence into a unified decision-making interface. It uses AI algorithms for real-time analysis, target identification, and trajectory prediction, compressing the time from “discovering the target” to “issuing a destruction command” to just a few minutes.

It was this very system that prompted Google employees to protest collectively.

From day one, Palantir has served U.S. intelligence agencies and the military. After the 9/11 attacks, the CIA used Palantir’s system to pick out traces of terrorists from massive volumes of data and pinpoint bin Laden’s location.

Today, with a U.S. Army contract worth $10 billion, it has become the underlying architecture of the U.S. military’s combat system.

Anduril

Another company also saw its value rise on the night Anthropic was banned: Anduril Industries. After all, the market share that was freed up is a major windfall for these dedicated AI arms dealers.

Anduril’s founder, Palmer Luckey, was born in 1992 and also founded the VR device maker Oculus. When he was 21, Oculus was acquired by Facebook for US$2 billion, plus generous payouts.

In 2017, Luckey was fired from Facebook amid controversy over his $10,000 donation to a pro-Trump, anti-Hillary organization. He then turned around and founded Anduril, which focuses solely on military AI.

The company’s core products are all AI-driven autonomous combat systems: from AI sentries for border surveillance, to autonomous counter-drone defense systems, to AI algorithms that control drone swarms, it can build whatever the military needs.

The rise of these AI arms dealers comes back to Trump’s military policy: in banning Anthropic, the government is showing that it does not really depend on this one AI model.

Rather, it wants to use this incident to set a rule for AI companies across the United States: when it comes to the militarization of AI, there is no neutral option. Either you are on our side or we eliminate you.

AI is the core of future global hegemony, and military AI superiority is the foundation of U.S. global hegemony. In this view, any attempt to set limits on AI harms the national interests of the United States and amounts to compromising with its adversaries.

5. Answers to the future

At this point in the story, the discussion on the ethics of AI war is far from over.

More than 70 years ago, Oppenheimer stood before the mushroom cloud at the Trinity nuclear test site and recalled a line from the Bhagavad Gita:

“Now, I am become Death, the destroyer of worlds.”

He built the atomic bomb with his own hands, but spent the rest of his life opposing nuclear proliferation and the abuse of nuclear weapons. However, he failed to stop it after all.

The torrent of nuclear proliferation is still sweeping the entire world.

Today, we seem to be standing at exactly the same crossroads as then.

AI is the atomic bomb of this era. Its power is even more terrifying and pervasive than nuclear weapons.

According to international law scholars and some AI experts, human soldiers possess an extremely valuable quality on the battlefield: hesitation.

This hesitation is not due to cowardice, but stems from a moral examination of the value of life and an intuitive perception of legal boundaries.

However, war is cruel, and war initiators pursue maximum damage and automated decision-making. They are essentially using algorithms to eliminate this room for hesitation step by step.

When AI can autonomously command thousands of drones to launch attacks, what will be left for humans in the war? Is it a target coordinate in the algorithm? Or is it a statistical unit in casualty figures?

Oppenheimer once said:

“The physicists have known sin.”

Today’s AI practitioners face the same agonizing question.

When you write a line of code, it may eventually become a weapon that takes lives;

When you train an AI model, it may eventually become a tool for monitoring society;

When the technology you create may eventually lead mankind into an unpredictable future, what choice will you make?

Choose to cross the Rubicon? Or choose to stick to the bottom line, even if you have to face disaster?

The answer to this question not only determines the future of AI, but also the future of mankind.

References:

Statement from Dario Amodei on our discussions with the Department of War – Anthropic

U.S. Strikes in Middle East Use Anthropic, Hours After Trump Ban – WSJ

Our agreement with the Department of War – OpenAI

Maven Smart System – MDAA

Source

Author: Lin YiLYi

Release time: March 4, 2026 14:54

Editorial comments

This article, “Is artificial intelligence already helping humans fight wars?”, comes from the X social platform (x.com) and was written by Lin YiLYi.