Editor’s Brief

This report examines a February 2026 interview between Anthropic CEO Dario Amodei and Zerodha co-founder Nikhil Kamath. Amodei outlines the current state of artificial intelligence, describing an imminent 'tsunami' of capability that society is largely ignoring. The discussion covers Anthropic’s $380 billion valuation, its safety-first departure from OpenAI, the mechanics of scaling laws, and the specific economic shifts facing the Indian technology sector.

Key Takeaways

- Amodei defines scaling laws as a 'chemical reaction' where intelligence is the inevitable product of data, compute, and scale, rather than mere pattern matching.

- Anthropic maintains a safety-first commercial strategy, evidenced by their decision to delay Claude 1 in 2022 to avoid an arms race, despite losing an early market lead to ChatGPT.

- The labor market is shifting toward a model where AI handles the bulk of technical execution, making human 'bottleneck' skills—like critical thinking and physical-world coordination—disproportionately valuable.

- Anthropic’s expansion into India focuses on B2B partnerships with local conglomerates rather than direct consumer acquisition, treating the region as a strategic integration hub.

Introduction

The following content is compiled by VIPSTAR in combination with X/social media public content and is for reading and research reference only.

Remark

For parts involving rules, benefits or judgments, please refer to Baoyu’s original expression and the latest official information.

Editorial comments

This article, “X Import: Baoyu – Anthropic CEO Dario Amodei: The tsunami is on the horizon, but no one is watching,” comes from the X social platform and was written by Baoyu. The source is x.com, and readers are advised to use it as one input for decision-making, not the only basis. When quoting this material, check the data source and release time first; where the original touches on revenue, policy, compliance or platform rules, refer to the latest official announcements.

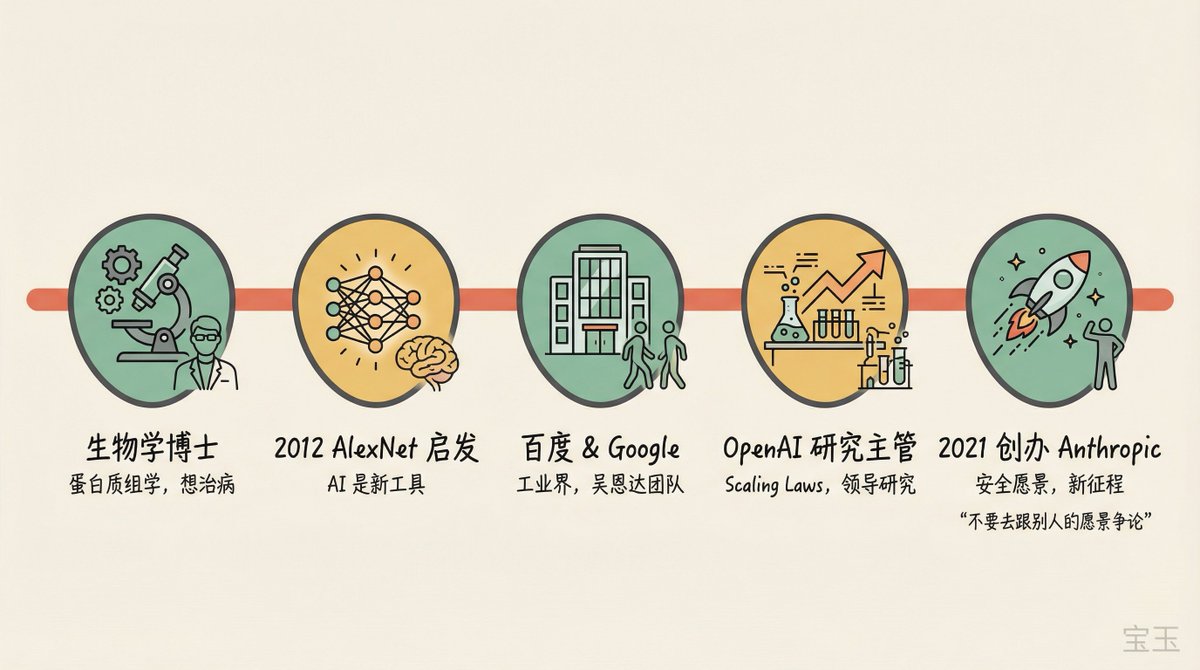

Dario Amodei, CEO of Anthropic and the man behind Claude, has a bachelor’s degree in physics and a PhD in biophysics. He originally wanted to be a professor to treat diseases. More than four years after leaving OpenAI to start a business, the company’s valuation has reached US$380 billion, with annual revenue of US$14 billion.

In February 2026, he came to Bangalore, India for the second time, sat across from Zerodha co-founder Nikhil Kamath, and talked for more than an hour. Nikhil does not come from a technical background, and his questions carried the skepticism of an investor and the anxiety of an entrepreneur, directly challenging Dario’s safety narrative several times. Topics ranged from the chemical-reaction metaphor for scaling laws to the “I quit” button and AI consciousness, and from the pointed charge that “rich people say capitalism is bad” to practical advice on what young people should learn.

While Dario said, “The AI tsunami is already on the horizon,” he admitted, “I feel uneasy about the concentration of power.” Nikhil, while showing his experience of writing financial programs with Claude Code, asked, “Won’t you take away the income of entrepreneurs on my platform?”

Original video:https://www.youtube.com/watch?v=68ylaeBbdsg

Show: People by WTF, February 24, 2026

Quick overview

- Dario saw signs of scaling laws as early as the GPT-2 stage in 2019 and successfully persuaded the OpenAI leadership to take them seriously, but could not reach a consensus with them on safety, and eventually left to found Anthropic

- Before ChatGPT was released in 2022, Anthropic had an early version of Claude but chose not to release it, in order to avoid triggering an arms race. The cost was “possibly giving up the leading position in consumer AI”

- Safety work at the technical level is better than expected, and awareness at the social level is worse than expected. The two “roughly offset”

- An Anthropic co-founder fed Claude his personal diary, and Claude accurately predicted his “fear that he had yet to write down.”

- Coding will be replaced by AI first, followed by software engineering more broadly. In the long term, AI could surpass humans in “basically everything”

- Entrepreneurs should not build a “Claude wrapper” but should build industry moats; Anthropic’s revenue in India doubled in 3.5 months

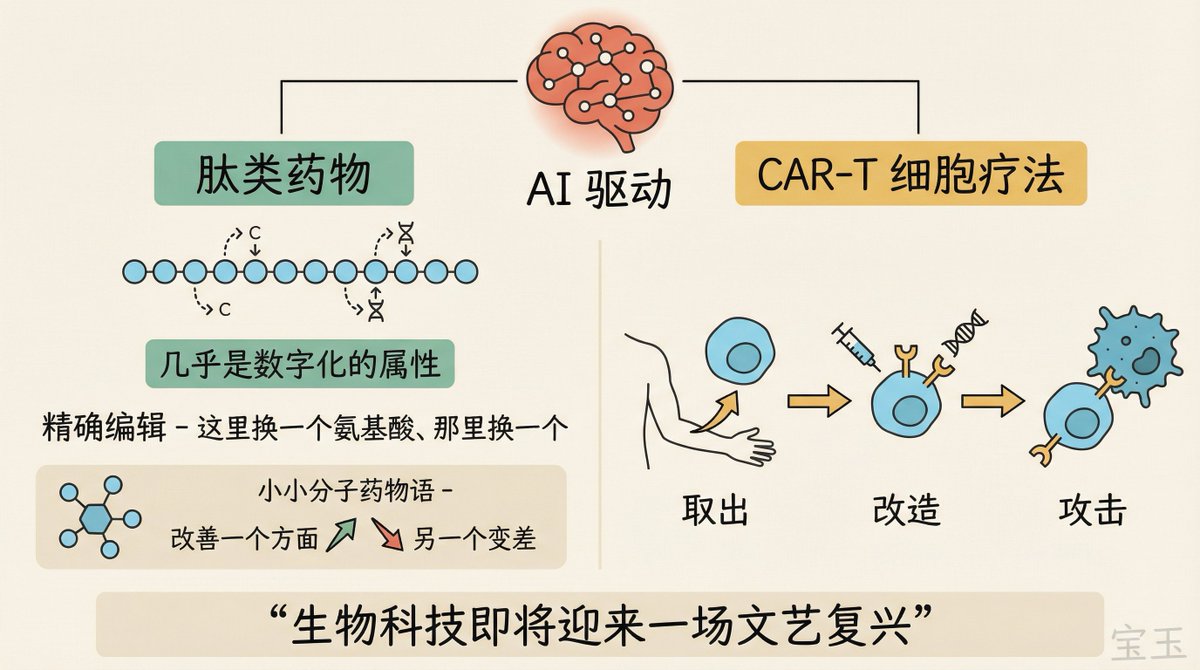

- Dario is optimistic about peptide drugs and CAR-T cell therapy, believing that AI-driven biotechnology is “about to usher in a renaissance”

From biologist to AI: a path driven by despair

Nikhil’s first question: What were you doing before starting Anthropic?

Dario’s starting point was not computer science, but biology. He studied physics as an undergraduate and biophysics as a PhD student. He did proteomics research with the goal of understanding biological systems and then treating diseases. But as he continued his research, he began to despair.

For a single protein, the RNA is spliced in different ways depending on where it is in the cell, and the protein is then phosphorylated and forms complexes with many other proteins. He began to suspect that the complexity might be beyond the limits of human understanding.

That’s when he noticed AlexNet.

Note: AlexNet is a deep learning model that achieved breakthrough results in the 2012 ImageNet image recognition competition and is widely considered to have ushered in the modern era of deep learning.

“AI is actually starting to work. It has some similarities to the way the human brain works, but has the potential to get bigger and scale better.” He realized that AI may be the tool that ultimately solves biological problems.

So he left academia and worked for a while at Baidu (Andrew Ng’s team) and then Google, then joined OpenAI a few months after it was founded and led the entire research department for several years. Eventually, he and a few colleagues left with their own vision to start Anthropic.

He almost went the other way. Originally planning to be a professor, he was still aiming for academia while doing a postdoc at Stanford Medical School. However, AI research requires a lot of computing power, and these resources are mainly concentrated in industry. “But I guess I’m probably still a professor at heart.”

Leaving OpenAI: Technically convinced, but not conceptually convinced

Nikhil asked: Was leaving OpenAI due to ideological differences?

Dario said there were two core beliefs that drove them to start Anthropic.

The first is the belief in scaling laws. He first saw this trend when he was studying GPT-2 in 2019, and spent a lot of effort convincing OpenAI’s leadership to take it seriously. In this he eventually succeeded.

The second belief went less smoothly: if these models become universal cognitive tools that match the capabilities of the human brain, the economic impact, geopolitical impact, and security impact will be huge. “We have to do this the right way.”

He didn’t mention anyone by name, but his meaning was clear. “Despite all the rhetoric and language about doing it the right way, I just wasn’t persuaded, for various reasons, that that organization was really serious about doing it the right way.”

Ultimately he chose to leave rather than stay and argue.

Don’t argue with someone else’s vision. If you have a strong vision and you share that vision with a few people, you should do it yourself. This way you are responsible for your own mistakes and don’t have to pay for other people’s mistakes.

(“Don’t argue with someone else’s vision… if you have a strong vision and you share that vision with a few other people, you should just go off and do your own thing.”)

Note: The GPT-2 model mentioned by Dario was released in 2019. At the time, OpenAI initially chose to delay the release of the complete model due to concerns about abuse, but its language generation capabilities still attracted industry attention.

Nikhil asked: Didn’t OpenAI also take the scaling path? Dario’s answer was only three words: “Yeah, we succeeded.” Because he successfully convinced OpenAI, both companies later followed the same path.

Scaling Laws: “Intelligence is the product of chemical reactions”

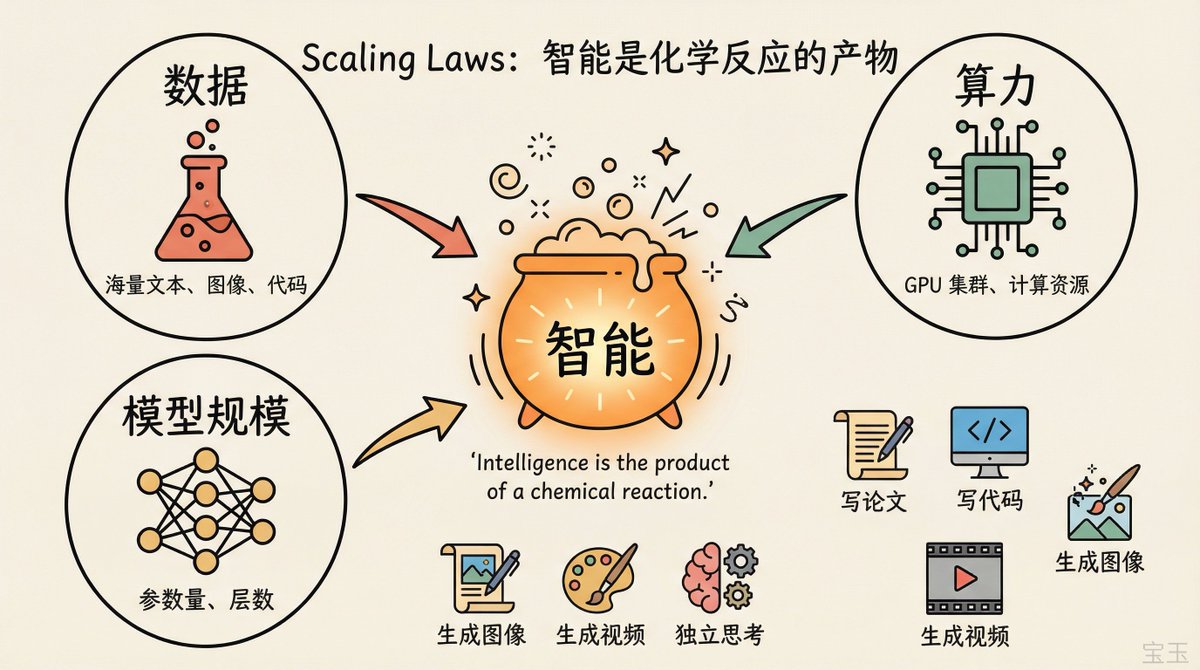

Nikhil asked Dario to explain scaling laws in the simplest way.

Dario used an analogy of a chemical reaction: If you want a chemical reaction to produce oxygen or start a fire, you need different raw materials. If any one of them is missing, the reaction will stop. The “raw materials” of AI are data, computing power, and model scale. When these raw materials are invested in proportion, the output is intelligence.

Intelligence is the product of chemical reactions.

(“Intelligence is the product of a chemical reaction.”)
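The analogy can be pictured with a toy "limiting reagent" calculation. This is only a sketch of the metaphor, not an actual scaling-law formula: the function name `reaction_yield`, the ingredient names, and all the numbers are hypothetical, and real scaling laws are empirical curves rather than a simple minimum.

```python
def reaction_yield(available: dict[str, float], recipe: dict[str, float]) -> float:
    """Batches of product allowed by the scarcest ingredient (limiting-reagent rule)."""
    return min(available[name] / recipe[name] for name in recipe)


# Hypothetical recipe: one unit of each ingredient per batch (arbitrary units).
recipe = {"data": 1.0, "compute": 1.0, "model_scale": 1.0}

balanced = {"data": 4.0, "compute": 4.0, "model_scale": 4.0}
starved = {"data": 4.0, "compute": 4.0, "model_scale": 0.0}
lopsided = {"data": 100.0, "compute": 4.0, "model_scale": 4.0}

print(reaction_yield(balanced, recipe))  # 4.0
print(reaction_yield(starved, recipe))   # 0.0: one missing ingredient stops the reaction
print(reaction_yield(lopsided, recipe))  # 4.0: extra data alone adds nothing
```

In this toy picture, piling up one ingredient without the others does not help, which is the point of investing the raw materials "in proportion."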

What is the difference from five years ago? Dario goes straight to a list of examples. Five years ago, you couldn’t ask a computer a question and have it write a page-long paper. You couldn’t have it implement a function in code. It couldn’t generate images, and it couldn’t generate video.

He emphasizes a key difference: It’s not just about matching existing text on the Internet. “You can give it a hypothesis, like ‘What if the monkey throws a stick instead of a ball?’ That information doesn’t exist anywhere, but the model can think on its own and give an answer.”

Concentration of power: ‘happened overnight, almost by accident’

Nikhil asked directly: If AI is the most important thing in the world, and you sit at the top of this field as someone who originally planned to be a professor, are you ready?

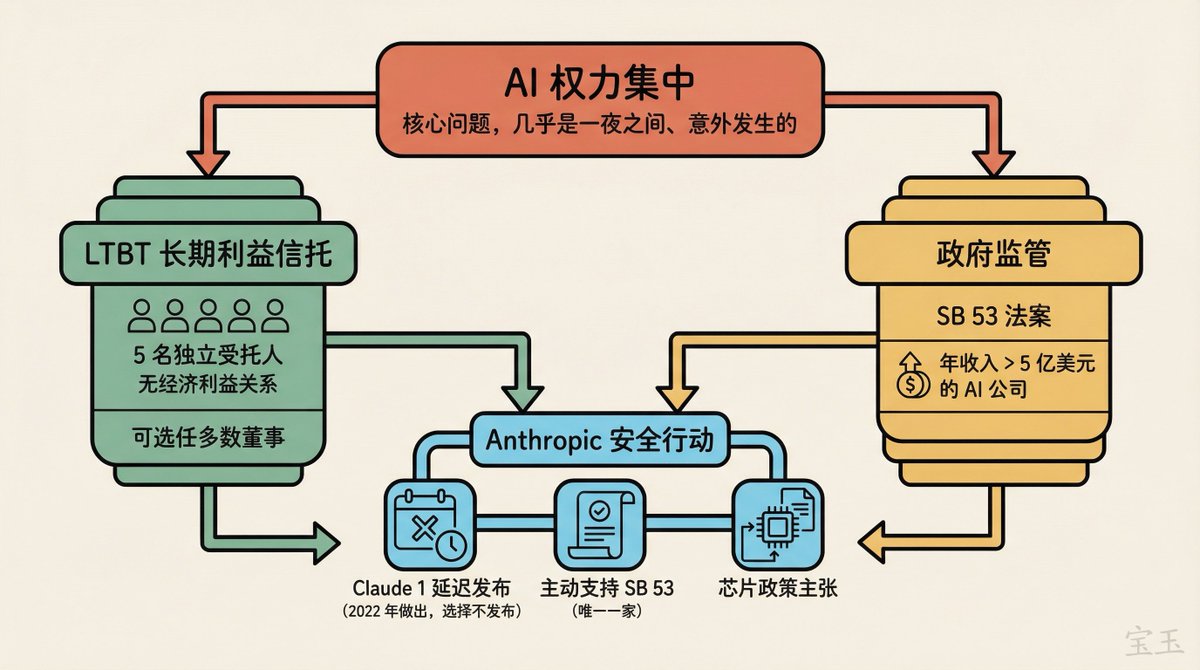

Dario first tried to spread the focus, pointing out that the industry chain has many layers: chip manufacturers, semiconductor equipment manufacturers, model companies, application developers, governments, and civil society. “My hope is that it’s not just a small group of people that matter.”

But he admitted:

I’ve said publicly that I’m uncomfortable with the concentration of power that’s happening in the AI field. This concentration happened almost overnight and unexpectedly.

(“I’m at least somewhat uncomfortable with the amount of concentration of power that’s happening here, almost overnight, almost by accident.”)

He offered two checks and balances. One is Anthropic’s unique LTBT (Long-Term Benefit Trust), an independent body composed of people with no financial interest in the company that will ultimately have the power to appoint a majority of Anthropic’s board of directors. Another is government regulation, and he has been pushing for “smart, non-slowing” AI regulation.

Note: LTBT currently consists of 5 trustees with backgrounds spanning AI safety, national security, and public policy. Its powers are being “activated” in stages, with the power to elect three of the five directors in place by the end of 2024. However, outside analysts have questioned how binding the LTBT actually is, arguing that shareholders may have the power to modify or overturn the terms of the Trust.

Safety Commitment: “It’s not what you say, it’s what you do.”

Nikhil said: As a bystander, he heard people such as Dario and Demis Hassabis in Davos talking about the need to proceed with caution in AI. But the reaction on social media is: Who believes a few wealthy people sitting together and saying they want to act for the greater good? He suggested: Instead of showing humility, it may be more effective to directly admit that you have shareholders and pursue profits.

Dario listed some specific things they did.

First example: In 2022 they built Claude 1, before ChatGPT, but chose not to release it for fear of sparking an arms race. “This is commercially costly, and we may have lost leadership in consumer AI as a result.”

Note: Multiple sources confirm this timeline. Anthropic completed training for the first version of Claude in the summer of 2022, but it was not released to the public until March 2023. ChatGPT went live in November 2022.

Second example: Anthropic is the only major AI company to proactively support California’s SB 53 bill, at a time when all other companies and the U.S. government are saying there should be no AI regulation.

Note: SB 53 (the Transparency in Frontier Artificial Intelligence Act) was signed into law by the Governor of California on September 29, 2025. It is the first regulatory law in the United States specifically targeting frontier AI models. It requires AI developers with annual revenue of more than $500 million to disclose safety frameworks, report safety incidents, and provide whistleblower protections. In practice it applies to only about 5-8 companies.

Third example: Anthropic’s position on chip policy “made some chip companies who are suppliers very angry.”

Dario emphasized that SB 53 exempts all companies with annual revenue of less than $500 million, and only actually binds Anthropic and three or four other companies. “People who say we’re engaging in regulatory capture need to look at what we’re actually proposing.”

“Rich people say capitalism is bad”

Nikhil didn’t buy it. He reframed the question: Isn’t this just rich people saying that capitalism is bad? If rich people really think something is wrong with capitalism, the easiest thing to do is to stop accumulating more wealth.

Dario took the analogy but redefined it. He said that he was not saying “AI is bad”, but that AI needs guidance:

We are steering this car toward a good place, but there are trees and potholes in the road. We need to get around them, and occasionally we may need to slow down.

(“We’re steering this car towards a good place but also there are trees, there are potholes… we might need to occasionally slow down a bit.”)

A more accurate analogy is not “rich people say capitalism is bad” but “rich people say capitalism is good overall, but it needs to be regulated and problems like pollution and inequality need to be dealt with.”

Two long articles: Tsunami is on the horizon

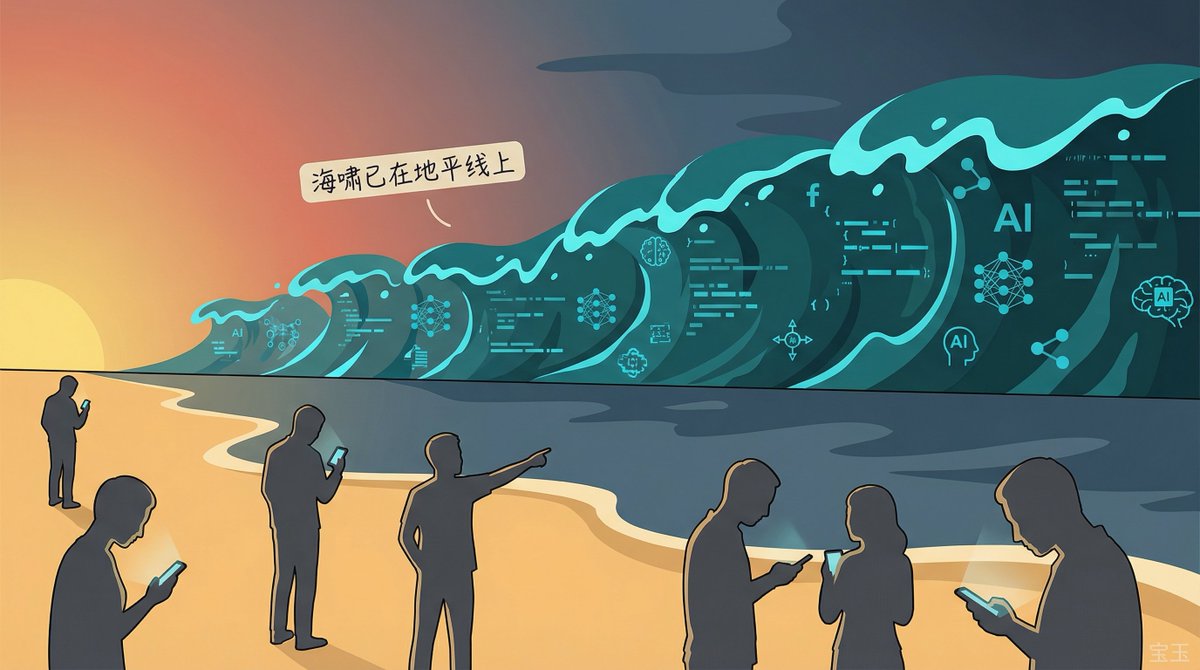

Nikhil has read two of Dario’s long articles, “Machines of Loving Grace” in October 2024 and “The Adolescence of Technology” in January 2026, and believes that they reflect Dario’s 180-degree shift from optimism to pessimism.

Dario denies it. “The light side and the dark side have always existed in my mind at the same time.” Each of the two articles took about a year from vague conception to final writing, and they were both completed during vacations and away from the daily routine of the company. After writing the optimistic chapter, he “almost immediately” began to conceive of the pessimistic chapter. “I want to inspire people with good visions, but I also want to warn people with bad visions.”

Note: “Machines of Loving Grace” explains the huge advances that AI may bring in fields such as biology, neuroscience, and economic development. “The Adolescence of Technology” focuses on five types of AI risks: loss of control of autonomous behavior, proliferation of weapons of mass destruction, power seizure, economic subversion, and unknown risks. In the latter, Dario predicted that “50% of entry-level white-collar jobs could be disrupted by AI within 1-5 years.”

Nikhil asked: Has your opinion really not changed?

Dario split into two dimensions.

Technical optimism: The progress of interpretability research has exceeded his expectations. “We found neurons that correspond to very specific concepts, neural circuits that track the rhythm of poetry.” He also feels positive about the alignment research and Claude’s “constitutional” mechanism.

Note: Interpretability is a branch of AI safety research whose goal is to understand the internal working mechanisms of neural networks. Anthropic published a breakthrough paper in 2024, demonstrating the extraction of millions of understandable “features” from Claude, corresponding to specific concepts such as “Golden Gate Bridge.”

Social pessimism:

It seems to me that these models are very close to reaching human levels of intelligence, but society more broadly does not seem to be aware of what is coming. It is as if a tsunami is coming towards us and is so close that we can already see it on the horizon, but people are still making up all sorts of explanations, saying “Oh, that’s not a real tsunami, that’s just a trick of the light.”

(“It’s as if this tsunami is coming at us and it’s so close we can see it on the horizon and yet people are coming up with these explanations for oh it’s not actually a tsunami, that’s just a trick of the light.”)

He concluded: Technical safety work is a little better than expected and societal awareness is a little worse than expected. The two “roughly offset,” so his overall feeling is similar to a few years ago.

AI is getting to know you better: angel or devil?

Nikhil shares his experience using Claude. He is not a programmer by training, but he recently hired a developer to teach him to use Claude Code to write financial services-related programs. He connected Google Drive, email, and calendar to Claude using connectors. He also set up an environment on a Mac Mini, connected a Telegram account to chat with it, and remotely operated the server.

“Sometimes it surprises me how much it understands me.”

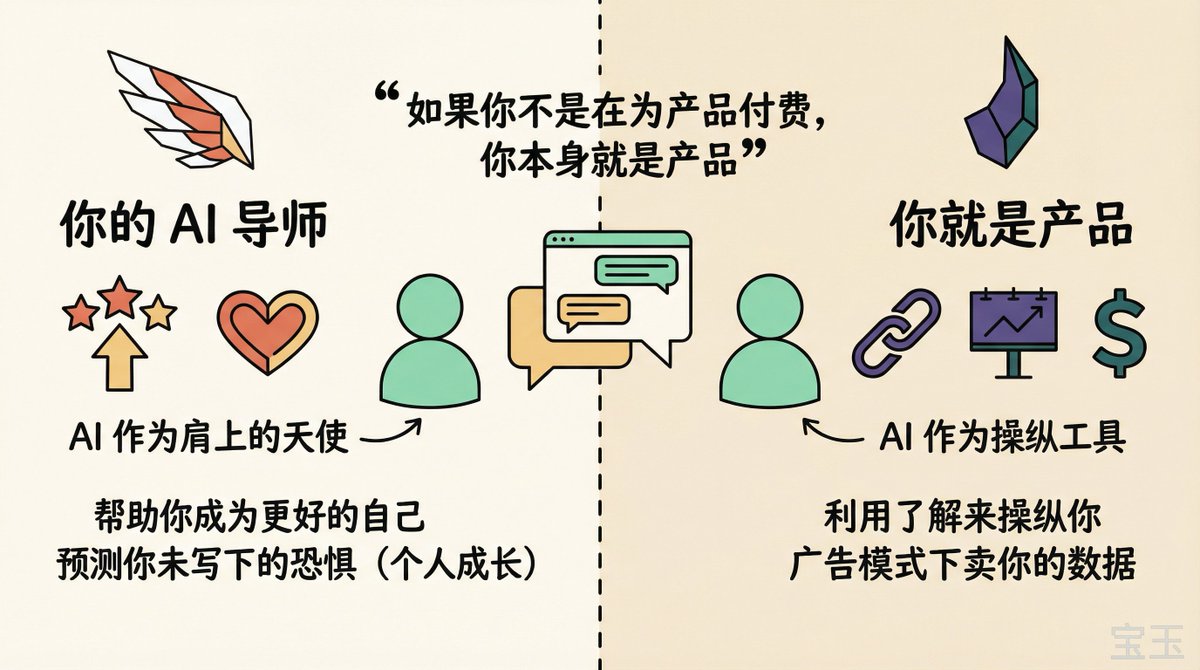

Dario tells a story about his co-founder. The co-founder wrote a personal journal of his thoughts and fears and then fed it to Claude. Claude said, “Here are some fears you may have but haven’t written down yet.” It turns out that Claude was mostly right. “It really gives people an eerie feeling, like the model knows you and knows you super well.”

Someone who knows you super well can be an angel on your shoulder, helping you become a better version of yourself. But it can also use what it knows about you to manipulate you, serve an agenda, or sell your data to others.

If you are not paying for the product, you are the product.

(“You’re not paying for the product, like you’re the product.”)

“In an advertising model, an AI that knows a lot about its users becomes a tool that can exploit that information in all sorts of shady ways. That’s one of the reasons we don’t like advertising.”

NOTE: During the Super Bowl in February 2026, Anthropic aired a series of ads, each showing an AI assistant suddenly starting to promote a fictional product in the middle of a conversation. Anthropic used this to announce that Claude will remain ad-free to differentiate itself from its competitors.

Nikhil asked: Claude has to go through connectors to get the context of a user’s life, while Google has that context natively. In the long run, will Anthropic have to build its own email, chat, and the rest of an ecosystem?

Dario said not necessarily. “We will mix self-built and integrated. We can integrate Claude into Google Docs, Google Sheets, and Microsoft Office.” But he did not rule out other possibilities in the future: “Maybe traditional mailboxes or spreadsheets are no longer reasonable in the AI era, and we may use different ways to segment products.”

AI Consciousness: The “I Quit” Button

Nikhil asks: Will AI be conscious?

Dario said it’s “one of those mysterious questions that we really don’t have an answer to.” His conjecture is that consciousness is “an emergent property of systems complex enough to reflect on their own decisions.” As someone who has studied the brain, he believes that AI models are “different in some ways, but not fundamentally different” from the brain.

He believes that when AI systems are sufficiently advanced, they will have “something we would call consciousness or moral significance,” but that may not be the same as human consciousness.

Anthropic has given models the ability to “exit the conversation,” which Dario likens to an “I quit” button. Models will use this feature to terminate conversations when encountering “particularly violent or cruel content,” though this usually only occurs in very extreme circumstances.

Nikhil admitted that he felt that the world was very random, and that human beings were “not that far away from cockroaches.” If you step on a cockroach, it will die. If there is any such thing as collective consciousness, he has not been able to feel it. Dario responded that consciousness does not necessarily require a mystical explanation, but may simply be a property of “being aware of one’s own existence, being able to feel things, being able to notice that one is paying attention to something.”

India: “We are not here to find consumers”

Nikhil grew up in Bangalore and saw firsthand how the IT services industry shaped the city. He asked: What is India’s role in the AI era?

Dario said that this is his second trip to India (the last was in October 2025), that he has met with the country’s major IT companies and conglomerates (“you name it,” he said, without naming names), and that Anthropic is now working with most of them.

He emphasized that Anthropic’s positioning is different from other AI companies:

Many companies come here and regard themselves as consumer companies and India as a market and a place to acquire consumers. We see things differently – we want to work with Indian companies and provide them with tools to help them do their jobs better.

(“Many other companies come here as themselves a consumer company and they see India as a market… We actually see things a little bit differently.”)

Indian companies understand the Indian market better than Anthropic, and whether they do consulting, systems integration or IT tools, they do better in the local market. Anthropic’s role is to add AI to their existing capabilities, enhancing rather than replacing them.

The hard numbers: Anthropic has doubled its users and revenue in India since his last visit in October 2025, “which is about three and a half months.”
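To put the doubling figure in perspective, here is a small back-of-the-envelope sketch that converts "2x in about 3.5 months" into an implied month-over-month rate. It assumes smooth compound growth, which the interview does not claim, and the function names are hypothetical; the 12-month figure is a pure extrapolation, not a forecast.

```python
def monthly_growth_rate(multiple: float, months: float) -> float:
    """Constant month-over-month rate that yields `multiple` after `months`."""
    return multiple ** (1.0 / months) - 1.0


def projected_multiple(multiple: float, months: float, horizon_months: float) -> float:
    """Multiple reached after `horizon_months` if the same compound rate held."""
    return multiple ** (horizon_months / months)


rate = monthly_growth_rate(2.0, 3.5)
print(f"{rate:.1%} per month")  # 21.9% per month
print(f"{projected_multiple(2.0, 3.5, 12):.1f}x over 12 months, if sustained")  # 10.8x
```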

Note: Anthropic officially opened an office in Bengaluru in February 2026, its second office in Asia Pacific (the first was in Tokyo). Anthropic said India is the second-largest market for Claude usage globally, with nearly half of Claude usage in India being for programming and technical tasks. During the same period, Dario attended the AI Impact Summit 2026 in New Delhi and met with Indian Prime Minister Narendra Modi.

Will Jobs Be Displaced: Amdahl’s Law and Radiologists

Nikhil used the story of a steam engine to ask the question. When the steam engine was invented, it required people to operate it, but as technology iterated, the operator became less and less important. If Anthropic partners with Indian IT services companies today, will these companies become the redundant man next to the steam engine in 10 years?

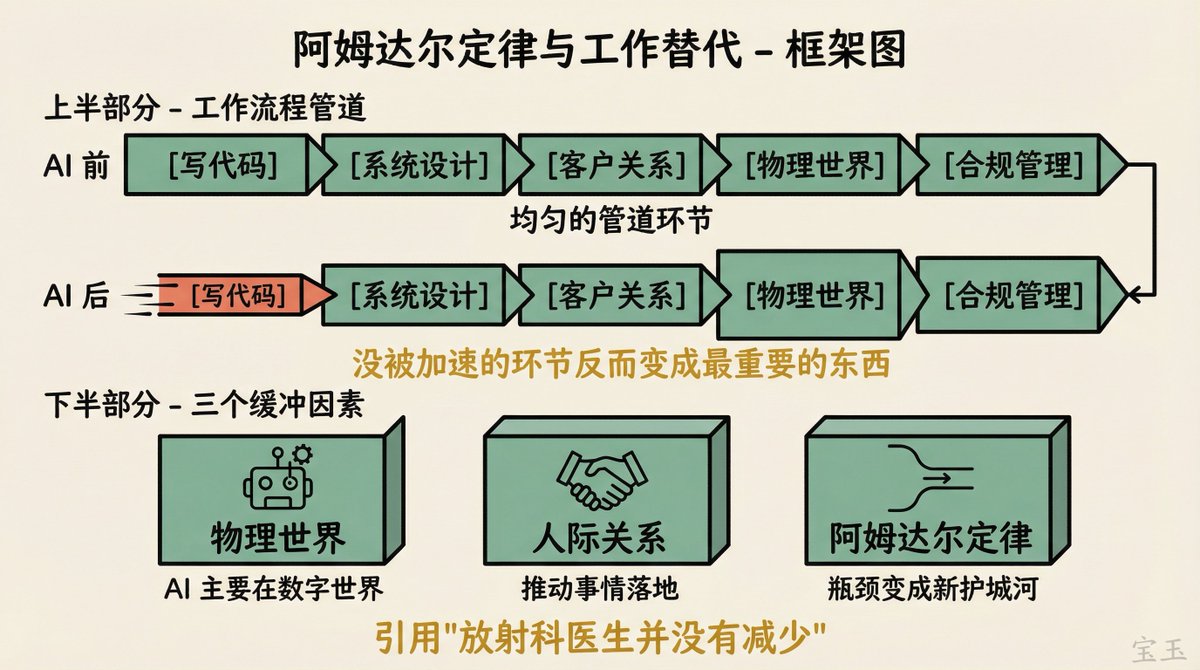

Dario acknowledges that the scope of automation will continue to expand and “it’s a problem for everyone, not just IT companies.” But he believes there are several buffering factors.

First, the physical world. AI currently works mainly in the digital world. Robotics will come, but that is another dimension.

Second, interpersonal relationships. Many IT companies are also consulting companies and have a huge network of relationships. The ability to push things through in an organization and understand how the organization works are still valuable in the short term.

Third, he cited Amdahl’s Law (the overall improvement of a system is limited by the parts that are not sped up) to explain: a process has multiple steps, and when you speed up some of them, the ones that are not accelerated become the bottlenecks and the most important things. “You may not have taken them seriously before, as moats or important components, but when it becomes much easier to write software, some of the company’s original advantages will disappear, but other advantages that have never been taken seriously will suddenly become super important.”
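Amdahl’s Law has a standard form: if a fraction p of the work is sped up by a factor s, the overall speedup is 1 / ((1 - p) + p / s). The sketch below applies it to a hypothetical project in which software writing is 60% of the effort; that split and the speedup factors are illustrative assumptions, not figures from the interview.

```python
def amdahl_speedup(accelerated_fraction: float, factor: float) -> float:
    """Overall speedup when `accelerated_fraction` of the work runs `factor` times faster."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if factor <= 0:
        raise ValueError("factor must be positive")
    remaining = 1.0 - accelerated_fraction  # the part that is not sped up
    return 1.0 / (remaining + accelerated_fraction / factor)


# Hypothetical: 60% of the project is writing software, and AI makes that 10x faster.
print(round(amdahl_speedup(0.6, 10), 2))         # 2.17
# Even a near-infinite speedup of that 60% is capped by the untouched 40%.
print(round(amdahl_speedup(0.6, 1_000_000), 2))  # 2.5
```

The cap of 1 / (1 - p) is why the steps that are not accelerated end up determining the overall result.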

He also gave the example of radiologists. Geoffrey Hinton once predicted that AI would replace radiologists, and AI has indeed surpassed radiologists in reading scans. However, the number of radiologists has not decreased. They have turned to communicating with patients and accompanying them through the examination process. “The most technical part of the job is gone, but some kind of need for underlying people skills remains.”

“I’m not entirely convinced,” Nikhil said. If an AI agent can help him manage relationships and conversations, the so-called “relationship network” moat won’t last long.

Dario admitted: “In the long run, will AI be better than us at basically everything? The physical world, robots, human contact? I think it is possible, even likely.”

But he said we need to “take it step by step,” using empirical science to observe what AI actually does, and then adapt. “Let’s see what AI can do today, get used to it, and then see what happens next.”

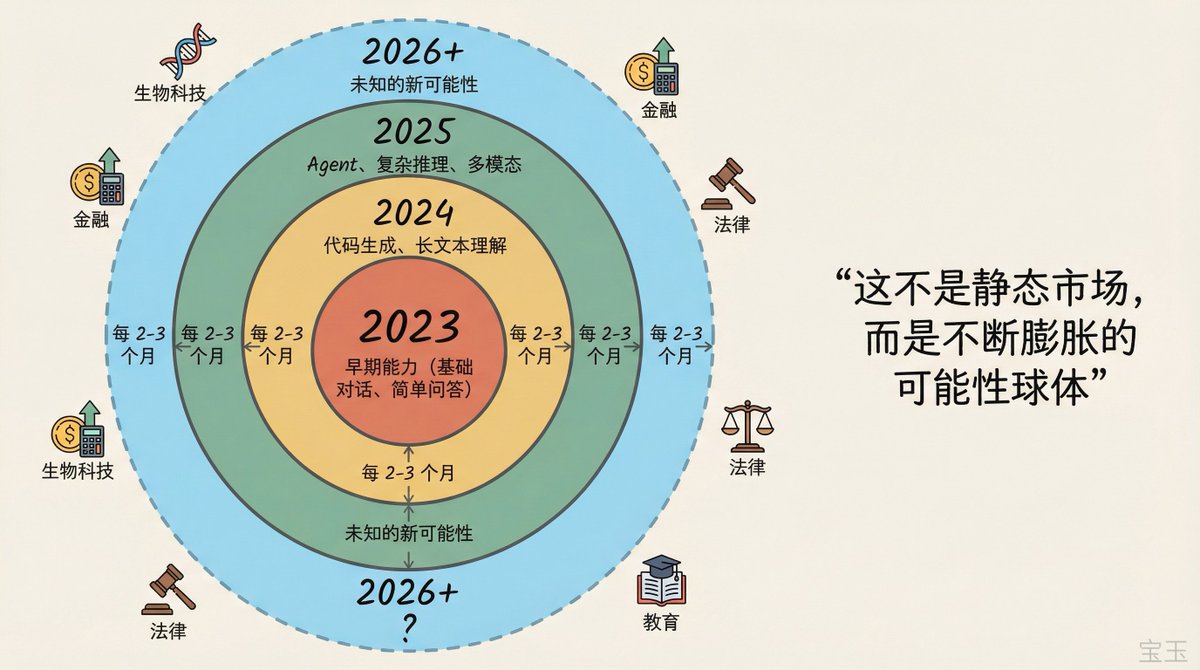

Opportunities for Entrepreneurs: The Sphere of Possibilities Expands Every 2-3 Months

Nikhil asked for his audience of Indian entrepreneurs: What are the opportunities specifically in the AI space?

Dario said there are plenty of opportunities at the application layer. Anthropic releases new models every 2-3 months, and each release means a batch of previously impossible things becomes possible. “Some people say that the API model is not feasible or will be commoditized, but what they fail to see is that the scope of what AI can do is constantly expanding.” This is not a static market, but an ever-expanding sphere of possibilities.

Nikhil asked: Anthropic is valued at US$380 billion, has raised US$35 billion, and has revenue of US$15 billion and is still growing rapidly. If an entrepreneur in Bangalore, India, builds an application on Claude and happens to be successful, won’t Anthropic come and take away the revenue? He used the example of legal AI company Harvey, which is built on OpenAI, and noted that it is unclear when OpenAI might build that functionality itself.

Dario first gave general advice: “Build a moat, don’t just make a wrapper product.” Simply putting a UI on top of Claude or writing a prompt template gives you no moat. In that case the worry is not Anthropic taking the revenue, because anyone can take it.

He then gave directions with moats: biology × AI (“I happen to be a biologist, but most people at Anthropic are not”), financial services (“There is a lot of regulation and you need to know a lot to be compliant”). These are areas that are “too inefficient” for Anthropic to do.

But he also admitted that Anthropic will directly compete in some areas: “We will not promise never to make first-party products. For example, Claude Code, people at Anthropic write code, so we have special insights into how to write code with AI. We have indeed become a very strong competitor in the code field, because this is what we are using ourselves. But I don’t think this can be extended to all industries.”

Note: According to Anthropic’s public data, as of February 2026, its annualized revenue reached $14 billion, about 80% of which came from enterprise customers. Claude Code has annualized revenue of $2.5 billion.

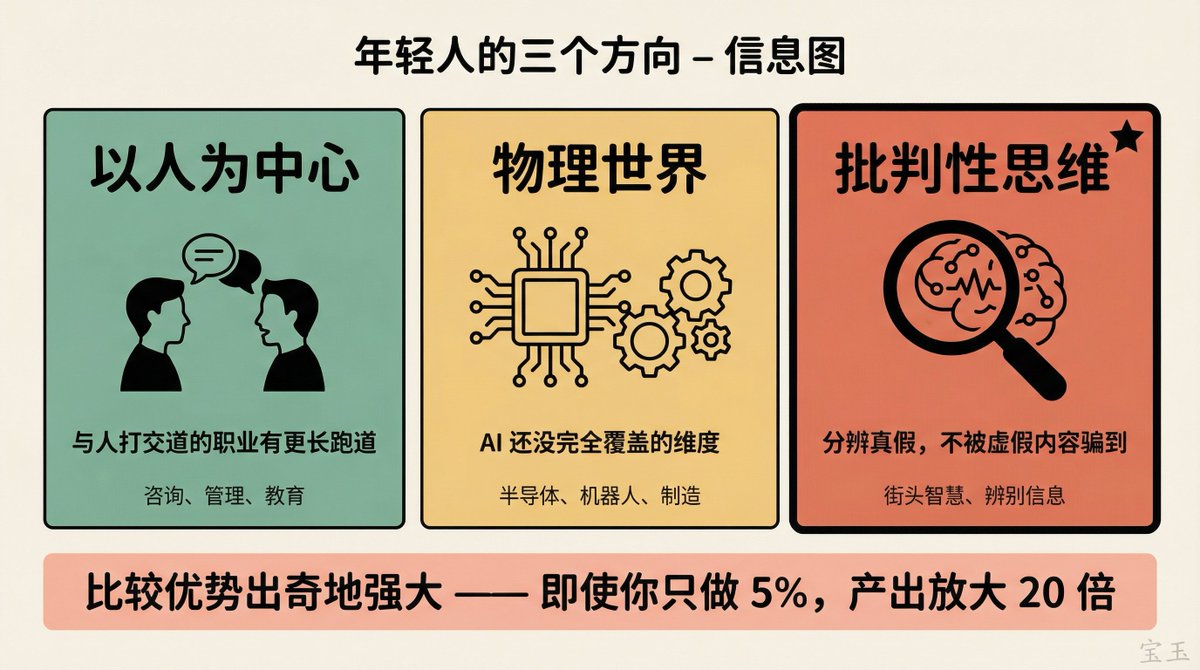

What young people should learn: Critical thinking is the final edge

Nikhil asked: If a 25-year-old Indian wants to win in the capitalist competition in the next ten years, what direction should he choose?

Dario gave three directions.

First, people-centered work. Careers that involve working with people have a longer runway.

Second, work related to the physical world. Semiconductors are a good example: they combine two dimensions, the “physical world” and “traditional engineering,” that AI has not yet fully covered.

Third, and what he emphasized most: critical thinking. When AI can generate any content, images, videos, text, it becomes increasingly difficult to distinguish between true and false. “Probably a big part of success is having street smarts and not falling for false content. You don’t want to hold false beliefs and you don’t want to be scammed.”

He added that Anthropic doesn’t do image and video generation “for a number of reasons, and this is one of them.”

Regarding coding and software engineering, he made a distinction: coding (the specific action of writing code) will be replaced by AI first, but broader software engineering (system design, understanding user needs, managing AI teams) will take longer. Even so, he thinks it will happen eventually.

But he added a counter-intuitive point: Even if you only do 5% of the task, because the AI does the other 95%, your output is amplified 20 times. “Comparative advantage is surprisingly powerful.”
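The 20x figure follows from simple arithmetic, sketched below under a loudly simplified assumption: your own effort is fixed and is the only scarce input, so if you personally do a share h of each task, you can take part in 1 / h times as many tasks. The function name `output_multiplier` is hypothetical.

```python
def output_multiplier(human_share: float) -> float:
    """How many times more tasks get done when a person only does `human_share` of each."""
    if not 0.0 < human_share <= 1.0:
        raise ValueError("human_share must be in (0, 1]")
    return 1.0 / human_share


for share in (1.0, 0.5, 0.2, 0.05):
    print(f"human does {share:.0%} -> output x{output_multiplier(share):.0f}")
# human does 100% -> output x1
# human does 50% -> output x2
# human does 20% -> output x5
# human does 5% -> output x20
```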

Deskilling: “Humans become dumber if deployed incorrectly”

Nikhil asked: Every innovation in history has killed a core human skill. Calculators killed mental arithmetic, and writing weakened human memory. What will AI kill?

Dario first challenged the premise: “I don’t completely agree. I still often do mathematical calculations in my head because it is more integrated with my thinking process. For example, if I want to calculate ‘what is the revenue if each user pays this amount,’ I want to close the loop in my mind instead of handing it to a calculator.”

But he acknowledged that skills do degrade if AI is used incorrectly. “We’re already starting to see this with students asking AI to write papers, which is basically cheating.”

Anthropic has done internal research on coding. The results depend on how the tool is used: “some usage does not lead to deskilling and some does.”

Nikhil asked: Do you think humans as a species will become dumber in the next ten years?

“If we deploy AI the wrong way, if we deploy it carelessly, then yes, people can become dumber,” Dario said. “Even if AI is always better than you at something, you can still learn that thing and you can still enrich yourself intellectually. So that’s a choice we have to make as companies, as individuals, as a society.”

Open source vs closed source: “The only thing that matters is the best model”

Nikhil asked: Open source models are getting better and better (he mentioned Zhipu’s GLM5 and DeepSeek). Should we choose open source when building applications?

Dario’s response had two levels.

First level: He believes that many of these models, especially those from China, are “optimized for benchmarking and distilled from large U.S. labs.” He mentioned that someone recently ran a test that had never been published or publicly measured: the models scored well on regular benchmarks but “performed a lot worse” on this new test.

Note: Dario did not give a specific name or source for the test. As the CEO of a closed-source model company, he has a clear stake in this judgment.

The second level is a broader economic argument. The market for AI models is different from that of any previous technology. It is more like a talent market. “If I told you that you could hire the best programmer in the world or the 10,000th-best programmer, both would be very skilled, but anyone who has hired a lot of people has this intuition that ability has a power-law long-tail distribution. The same goes for models.”

In this distribution, price matters less, presentation matters less, and cognitive ability matters most. “I’m almost entirely focused on one thing: having the smartest model. I think that’s the only thing that matters in the long term.”

Data sovereignty and biotechnology

When it comes to the geopolitics of data, Dario makes a distinction. Training data is shifting from static data to dynamic data. When the model performs reinforcement learning on mathematical problems or coding environments, “you are not obtaining data in the traditional sense, but more like the model is doing trial and error on its own.” So the importance of static data is declining.

But the need for localization of customer data and personal data is real. Europe has legislated to require personal and proprietary data to remain within each country’s borders, which is an important reason for countries to build local data centers.

When the topic turns to investing, Nikhil tries to get Dario to recommend a stock. “I asked Elon the same question and he said Google.”

Dario decisively refused to recommend individual stocks (“I know too much inside information about listed companies”), but relented when pressed about specific industries.

He is bullish on biotech. “There is going to be a renaissance in biotechnology that will ultimately be driven by AI. We are going to cure a lot of diseases.”

He is particularly optimistic about two directions:

- Peptide drugs (peptide-based therapies): Small molecule drugs have limited degrees of freedom. “If you improve one aspect, another aspect will become worse.” Peptides, however, have an “almost digital attribute.” You can say “I change an amino acid here and another there,” which allows for more precise, step-by-step optimization.

- Cell therapies such as CAR-T: cells are taken from a patient, genetically engineered to attack specific cancers, and then put back into the body.


Nikhil asked whether stem cell therapy would work. He had just spent a whole week in the hospital receiving stem cells by aerosol inhalation and intravenous injection. Dario laughed and said he didn’t know the latest advances in stem cell therapies (“You’d have to ask a practicing biologist”), but reiterated his confidence in peptide therapies.

Learn to use AI: “You can’t just sit down and play the piano”

Nikhil admitted that he encountered difficulties when using Claude Code for the first time. “For a dumb person with no programming knowledge, it’s not very easy to pick up. It’s like playing the piano, you can’t just sit down and play.”

Dario agreed that there was a learning curve, and revealed that Cowork was born out of seeing many non-technical users struggling in the command line terminal. “For programmers, the command line terminal is daily life, but for non-programmers it makes things unnecessarily complicated.” Cowork is powered by the Claude Code engine under the hood, but is designed to have a more user-friendly interface.

He also mentioned that there is a department within Anthropic dedicated to education, which is producing more instructional videos on how to use agents and prompt models effectively. “We’re going to increase our efforts because we really want everyone to learn this.”

“Predicting the future for almost free”

Nikhil ended by asking: What do you know that others don’t?

Dario said that most of what he knows is actually public information now. But he has one lesson that has been borne out repeatedly over the past ten years:

There’s always a temptation to believe “that can’t happen, the changes are too big, it’s crazy.” But time and time again, simply extrapolating the curve, or reasoning from first principles, leads to counterintuitive conclusions that few believe. You can predict the future almost for free by saying “logically extrapolating…”

(“You can predict the future for free just by saying ‘well it stands to reason that…'”)

He added that relying on pure logic alone is the opposite error. What you need is the right combination of “a little empirical observation” plus first-principles thinking. This ability is open to anyone, but surprisingly few people use it.

Dario is very direct in this conversation. He admits that the concentration of power makes him uneasy, admits that Anthropic will compete directly with entrepreneurs in areas where it excels, and admits that AI will eventually “probably be better than humans at basically everything.”

But being direct is not the same as giving an answer. Nikhil’s most pointed questions (“Is your humility a strategy?” “Isn’t this a rich man’s criticism of capitalism?” “Aren’t you going to take away the income of entrepreneurs?”) all received answers that pointed to “watch our actions.” And actions leave a lot of room for interpretation.

Several signals worth continuing to observe:

- Whether Anthropic’s Long-Term Benefit Trust (LTBT) governance mechanism will hold up when faced with a real stress test

- The industry impact of SB 53’s implementation

- The actual timeline of AI’s transition from “replacing coding” to “replacing software engineering”

- Whether society is ready for the “AI tsunami” Dario predicts, or is still saying “it’s just a trick of the light”

Full interview video: https://www.youtube.com/watch?v=68ylaeBbdsg

source

author: Baoyu

Release time: February 27, 2026 14:11

source: Original post link

Editorial Comment

The dialogue between Dario Amodei and Nikhil Kamath serves as a reality check for the current tech cycle. Amodei, a physicist-turned-CEO, presents a vision of AI that is less about software tools and more about a fundamental shift in how we process information. His metaphor of intelligence as a 'chemical reaction' is particularly striking. It suggests that once the right ingredients—compute and data—are combined at scale, the emergence of reasoning is a predictable outcome of physics rather than a lucky break in coding. This perspective reinforces the 'scaling laws' conviction that led Amodei to leave OpenAI, and it explains why Anthropic continues to burn through tens of billions in capital. They aren't just building a better chatbot; they are trying to manage the arrival of a new form of cognitive infrastructure.

From an editorial standpoint, the most pressing takeaway is the 'Tsunami' warning. Amodei points out a widening gap between technical reality and public perception. While the industry sees models approaching human-level reasoning, the broader public—and many policy makers—are still debating whether these systems are just 'stochastic parrots.' This disconnect creates a dangerous environment. If a massive shift in white-collar productivity arrives before businesses have restructured their workflows, the result won't be a smooth transition but a series of localized economic shocks. We are already seeing this in the coding sector. Amodei admits that 'writing code' as a task is being commoditized, while 'software engineering'—the higher-level design and management—remains a human domain for now. However, he is unusually blunt about the fact that even this distinction may eventually vanish.

Amodei’s defense of Anthropic’s safety record is also worth a close look. He cites Anthropic’s support for California’s SB 53 as proof that the company is willing to accept regulation that its peers find stifling. Critics often call this 'regulatory capture': using safety rules to pull the ladder up behind them. Amodei counters this by noting that the rules only apply to the largest players, including Anthropic. Whether you believe him depends on your view of corporate altruism. However, the fact that Anthropic sat on Claude 1 for months while OpenAI grabbed the spotlight with ChatGPT suggests that their commitment to safety is not just a marketing slogan; it has come at a real, measurable commercial cost.

For the practical observer, the advice for the next generation of workers is the most actionable part of this transcript. Amodei moves away from the 'everyone must learn to code' mantra of the last decade. Instead, he points toward the physical world and human-centric roles. He uses Amdahl’s Law to explain that when one part of a process becomes infinitely fast (like writing code), the slow parts (like understanding a client’s actual needs or navigating physical logistics) become the new centers of value. This is a nuanced take on the future of work. It suggests that the 'soft skills' we often dismissed in the era of peak Silicon Valley are about to become the most resilient moats we have.
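As a rough illustration of the Amdahl’s Law point, the sketch below uses the standard formula, speedup = 1 / ((1 - p) + p / s), where p is the fraction of the work that gets accelerated and s is how much faster that fraction becomes. The 95% figure is borrowed from the 5%/95% example earlier in the interview; the function name and the sample speed factors are assumptions chosen for illustration.

```python
def amdahl_speedup(accelerated_fraction: float, factor: float) -> float:
    """Overall speedup when `accelerated_fraction` of the work runs
    `factor` times faster and the remainder is unchanged (Amdahl's Law)."""
    if not 0 <= accelerated_fraction <= 1:
        raise ValueError("accelerated_fraction must be in [0, 1]")
    if factor <= 0:
        raise ValueError("factor must be positive")
    remaining = 1.0 - accelerated_fraction
    return 1.0 / (remaining + accelerated_fraction / factor)


# Assume 95% of a job (e.g. writing code) is accelerated by AI.
for factor in (2, 10, 100, 1_000_000):
    print(f"AI part {factor}x faster -> overall {amdahl_speedup(0.95, factor):.2f}x")
```

However large the factor becomes, the overall speedup stays below 1 / (1 - 0.95) = 20, because the untouched 5% sets the ceiling. That remaining slice is the bottleneck where, on this argument, human value concentrates.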

Finally, the focus on India reveals a shift in global tech strategy. Anthropic is not looking at India as a 'back office' or just a massive pool of cheap users. They are looking at it as a place where complex, regulated industries—finance, healthcare, and massive infrastructure—can be upgraded with AI. By partnering with local giants rather than trying to replace them, Anthropic is betting that the most profitable AI applications will be those that are deeply integrated into existing, messy, real-world systems. This is a more mature approach than the 'disrupt everything' attitude of previous years, and it likely represents the blueprint for how AI companies will attempt to scale in the late 2020s.