Editor’s Brief

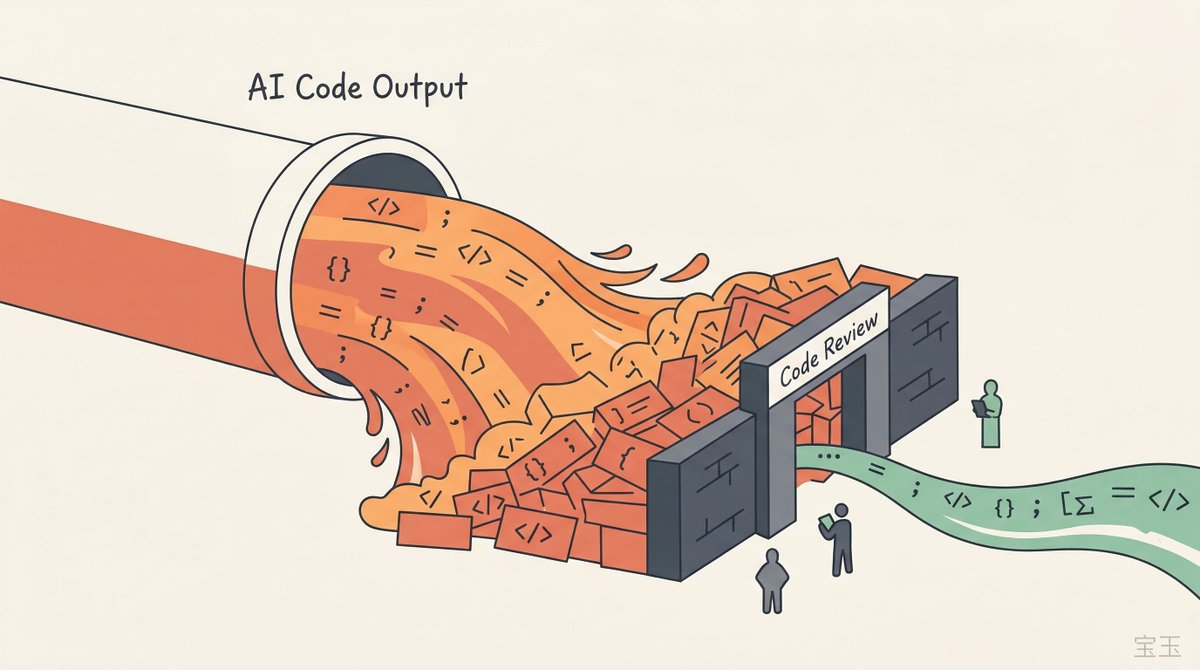

While AI tools like GitHub Copilot have demonstrably accelerated the 'inner loop' of individual coding by up to 50%, overall software delivery speeds remain stagnant in many organizations. Nicole Forsgren, the creator of DORA metrics, argues that the bottleneck has shifted to the 'outer loop'—the collaborative processes of code review, security scanning, and deployment. This editorial examines why pouring more code into clogged organizational pipes creates a 'code tsunami' and why the next frontier of engineering efficiency isn't better prompts, but the elimination of 'organizational process debt.'

Key Takeaways

- The 'Inner Loop' vs. 'Outer Loop' Disconnect: AI speeds up local development, but manual code reviews and legacy CI/CD pipelines remain fixed bottlenecks, neutralizing individual productivity gains.

- The Code Tsunami: Increasing code output without scaling the 'checking' mechanisms (automated reviews and testing) leads to massive backlogs and increased technical risk.

- Organizational Process Debt: Outdated approval layers and unnecessary meetings, once hidden by slow manual coding, are now glaringly obvious frictions that destroy developer flow.

- The Vanity Metric Trap: Measuring AI success through 'seat utilization' or 'lines of code' is deceptive; true ROI must be measured through system-wide delivery and Developer Flow.

Introduction

AI tools have made coding up to 50% faster, so why hasn’t delivery speed changed? Nicole Forsgren, creator of the DORA metrics, points out that while developers enjoy efficiency gains in the “inner loop,” they still hit bottlenecks in the “outer loop,” such as code review and deployment. When AI-generated code floods outdated review processes like a tsunami, organizational friction becomes even more apparent. This article breaks down the real pain points of engineering productivity in the AI era.



…if a team manager only focuses on “how many lines of code AI helped us write” while ignoring […], the so-called efficiency gains are purely self-indulgent.

What’s even more painful is the “organizational process debt” Forsgren describes. In many large companies, tedious approvals and meetings held for the sake of meetings had their inefficiency masked by long development cycles in the era of manual coding. But when AI compresses development time to the extreme, these rigid processes become glaring. The gap not only wastes money but also destroys the developer’s flow state. Imagine you’ve just elegantly solved a difficult problem using AI, only to wait days for a meaningless approval; no tool, however advanced, can compensate for that frustration.

The “cognitive load” brought by AI is another hidden bomb. The barrier to writing code has lowered, but the barrier to “understanding and taking responsibility” has actually become higher. If developers just mechanically…

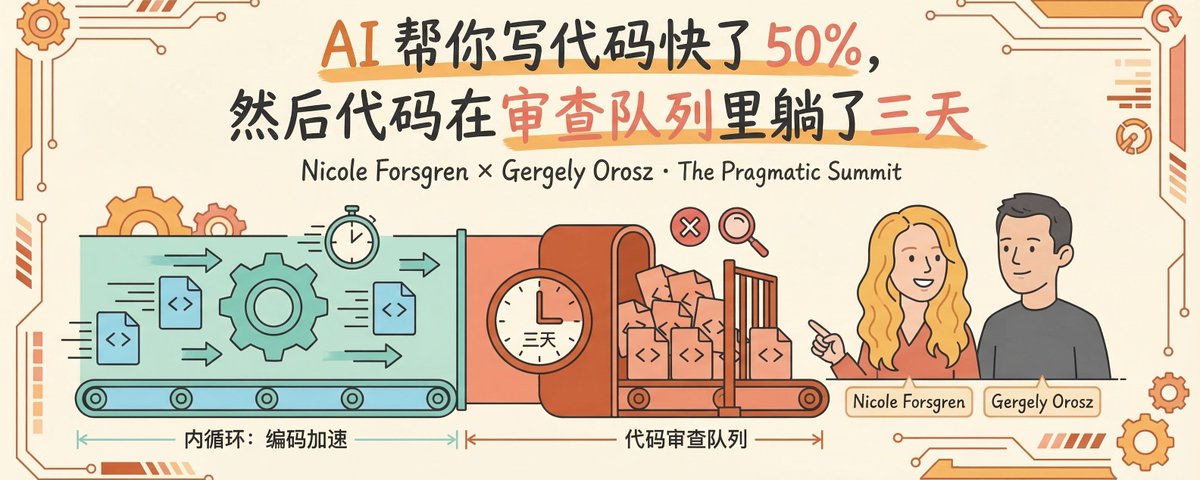

AI helps you write code 50% faster, and then the code sits in the review queue for three days.

Nicole Forsgren is the creator of the DORA metrics framework and the lead author of Accelerate. For the past decade, the software industry’s methods for measuring ”


AI Makes the Fast Faster, and the Slow Stays Slow

Gergely’s first question: Leading engineering teams in the AI era, what has changed and what hasn’t?

Forsgren’s answer: The fundamentals haven’t changed. Clear goals, short feedback loops, and a culture of psychological safety—these elements of high-performing teams don’t become less important just because of AI. What has changed is the location of the bottlenecks.

She used a framework to explain: the inner loop and the outer loop.

The inner loop is the developer’s individual work: writing code, running local tests, and debugging. Tools like Copilot work very well in this phase, indeed accelerating individual coding efficiency.

But what happens after the code is written? The code enters a review queue waiting for someone to look at it, goes through the CI/CD pipeline (automated build and test systems), runs security scans, and finally gets deployed to production. This is the outer loop.

“We’re making the fast parts faster while the slow parts stay just as slow.”

Forsgren gave an example: if AI helps you write code 50% faster but code reviews still take three days because of team silos or process friction, the overall **“time to value”** hasn’t really improved.
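The arithmetic behind that example can be sketched in a few lines. The figures below are hypothetical, not numbers from the interview: two days of coding, a three-day review wait, and half a day in CI/CD and deployment. "50% faster" is read here as coding time cut in half.

```python
def time_to_value(coding_days: float, review_wait_days: float, pipeline_days: float) -> float:
    """Total elapsed days from starting work to running in production."""
    return coding_days + review_wait_days + pipeline_days


# Hypothetical baseline: inner loop (coding) plus outer loop (review wait, pipeline).
before = time_to_value(coding_days=2.0, review_wait_days=3.0, pipeline_days=0.5)

# Assumption: AI halves the coding time; the outer loop is unchanged.
after = time_to_value(coding_days=1.0, review_wait_days=3.0, pipeline_days=0.5)

improvement = (before - after) / before
print(f"before: {before} days, after: {after} days, improvement: {improvement:.0%}")
# prints "before: 5.5 days, after: 4.5 days, improvement: 18%"
```

Under these assumed numbers, halving the coding time shortens end-to-end delivery by less than a fifth, because the unchanged outer loop dominates the total.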

<p>…, time to restore service, and change failure rate. The 2018 research findings were compiled into the book <i>Accelerate</i>, and this set of metrics has been adopted by numerous engineering teams worldwide.</p>

<h2>Code Flood Impacts Review Gates</h2>

<p>Gergely followed up: Will there be a “code tsunami” hitting those manual review gates?</p>

<p>Forsgren said it’s not a question of “if,” but that it’s already happening.</p>

<p>Code output has increased, but review and release processes haven’t kept up, resulting in massive backlogs. Her suggestion: AI shouldn’t just be used to write code; it should be used even more for reviewing code. Automated testing also needs to be upgraded simultaneously to reduce reliance on manual QA.</p>

<p>“If you don’t scale the ‘checking’ with the ‘creating,’ you’re in trouble.”</p>


When most companies talk about AI coding tools, their focus is entirely on “how to make developers write faster.” Forsgren’s reminder is that writing faster is only half the problem—and perhaps the easier half.
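The backlog effect described in this section can be illustrated with a toy queue model. This is a minimal sketch under invented assumptions (a fixed number of pull requests opened and reviewed each day); the function name and all figures are hypothetical and do not come from the interview.

```python
def review_backlog(prs_opened_per_day: int, prs_reviewed_per_day: int, days: int) -> list[int]:
    """Return the number of PRs still waiting for review at the end of each day."""
    backlog = 0
    history = []
    for _ in range(days):
        backlog += prs_opened_per_day
        # Reviewers can clear at most their daily capacity.
        backlog -= min(backlog, prs_reviewed_per_day)
        history.append(backlog)
    return history


# Hypothetical team whose review capacity matches its output.
balanced = review_backlog(prs_opened_per_day=10, prs_reviewed_per_day=10, days=5)

# Same review capacity, but code output doubled.
flooded = review_backlog(prs_opened_per_day=20, prs_reviewed_per_day=10, days=5)

print(balanced)  # [0, 0, 0, 0, 0]
print(flooded)   # [10, 20, 30, 40, 50]
```

In this simplified model the queue grows every day that creation outpaces checking, which is why scaling review and test capacity matters as much as scaling output.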

Process Debt Illuminated by AI

The conversation turned to “process debt.” Forsgren proposed a concept: organizational process debt.

All those meetings, approvals, and “this is how we’ve always done it” steps might have had value when they were created, but they no longer generate value now. She believes that organizational process debt is one of the biggest killers of high performance.

In the AI era, these legacy processes become more visible and more frustrating. In the past, developers might have quietly accepted the fact that “approvals take three layers” because writing the code itself took several days anyway. But now, AI helps you finish the code in half a day, and then you find yourself spending even more time waiting for various processes to complete—the sense of disparity is particularly intense.

<p>… research framework lists flow state as one of the three core dimensions of developer experience, …</p>


<h2>100% Adoption + 0% Improvement</h2>

<p>Gergely asked a question that many in management want to know: how do you measure Copilot’s ROI?</p>

<p>Forsgren chuckled first, saying that everyone wants a simple number. But <strong>“lines of code” and “PRs per day” are terrible metrics</strong>; they are easy to game and don’t reflect value or quality.</p>

<p>She suggests adopting a multi-dimensional metrics strategy. On one hand, look at DORA metrics, such as deployment frequency and lead time for changes (the time from code commit to production), to see if delivery speed has actually improved. On the other hand, look at developer experience: are developers less frustrated? Has the time to “first green test” been shortened?</p>
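As a rough illustration of the two DORA metrics named above, the sketch below computes lead time for changes and deployment frequency from commit and deploy timestamps. The records, the function names, and the five-day window are all hypothetical; real teams would pull this data from their version control and deployment systems.

```python
from datetime import datetime
from statistics import median

# Hypothetical records: (commit time, production deploy time) for each change.
changes = [
    (datetime(2026, 3, 2, 9, 0), datetime(2026, 3, 5, 9, 0)),
    (datetime(2026, 3, 3, 14, 0), datetime(2026, 3, 5, 9, 0)),
    (datetime(2026, 3, 4, 10, 0), datetime(2026, 3, 6, 16, 0)),
]


def median_lead_time_hours(records):
    """Median time from code commit to production, in hours."""
    return median(
        (deployed - committed).total_seconds() / 3600
        for committed, deployed in records
    )


def deployment_frequency(records, window_days):
    """Distinct production deployments per day over the observation window."""
    deploys = {deployed for _, deployed in records}
    return len(deploys) / window_days


print(median_lead_time_hours(changes))   # 54.0
print(deployment_frequency(changes, 5))  # 0.4
```

Tracking these values before and after an AI rollout shows whether delivery itself has sped up, which adoption counts alone cannot show.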

<p>Gergely then mentioned that many companies are only reporting <strong>“seat utilization,”</strong> which is simply how many people have activated their Copilot licenses.</p>

<p>Forsgren said bluntly that it’s just an adoption metric and doesn’t indicate whether the tool is actually working.</p>

<p>“You can have 100% adoption and 0% improvement in actual delivery.”</p>


<h2>The One Metric CTOs Should Track Most in 2026</h2>

<p>Two great questions came up during the Q&A session.</p>

<p>The first question: How do we prevent everyone from becoming prompt engineers who can’t debug? Forsgren’s answer is that we must double down on fundamental engineering skills. In the AI era, code reviews become more important, not less. Junior developers need more mentorship to ensure they understand the underlying principles of the systems they are building.</p>

<p>The second question was more direct: If a CTO could only track one metric in 2026, what should it be?</p>

<p>Forsgren didn’t choose any of the DORA metrics. She chose Developer Flow: measuring how often developers are interrupted by bad processes, slow tools, and unnecessary meetings, and then working to reduce that number. Speed, quality, and talent retention will all improve as a result.</p>

<p>Measure developer flow. If you can reduce the frequency of interruptions, everything else will naturally follow. (“Developer Flow. If you can measure how often your developers are getting interrupted… everything else will follow.”)</p>
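The interview does not say how Developer Flow should be quantified, so the following is only one hypothetical way to count interruptions, not Forsgren's method. It assumes a self-reported or tool-generated interruption log; the log format, the cause labels, and the function names are invented for illustration.

```python
from collections import Counter

# Hypothetical interruption log: (developer, day, cause).
interruptions = [
    ("ana", "2026-03-02", "slow_ci"),
    ("ana", "2026-03-02", "meeting"),
    ("ana", "2026-03-03", "approval_wait"),
    ("ben", "2026-03-02", "slow_ci"),
    ("ben", "2026-03-03", "slow_ci"),
]


def interruptions_per_developer_day(log, developer_days):
    """Average number of interruptions per developer per working day."""
    return len(log) / developer_days


def top_causes(log):
    """Interruption causes ordered from most to least frequent."""
    return Counter(cause for _, _, cause in log).most_common()


# Assumption: 2 developers observed over 2 working days = 4 developer-days.
print(interruptions_per_developer_day(interruptions, developer_days=4))  # 1.25
print(top_causes(interruptions)[0])  # ('slow_ci', 3)
```

Ranking the causes suggests which process or tool to fix first; the per-developer-day rate is the number a team would then try to drive down.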

<p>Note: Forsgren works in deep collaboration with DX, whose core business is developer experience measurement. She recommends Developer Flow as the core metric, which aligns with DX’s business direction. This doesn’t mean the advice itself is invalid, but readers should be aware of this potential conflict of interest.</p>

<p>The signals Forsgren repeatedly conveyed in this interview can be summarized in three sentences: AI accelerates the inner loop, but the real bottleneck lies in the outer loop; tool adoption rate does not equal value delivery; and measure developer experience, not just code output.</p>

<p>However, this conversation also left two questions unanswered. How specifically is Developer Flow quantified? Is it through surveys or automated telemetry? Forsgren did not elaborate. There is also a more fundamental question: she said the goal is to “make the right way the easy way,” but in most organizations, what the “right way” is and who defines it is likely the biggest source of friction itself.</p>


Source

Author: Baoyu

Published: March 22, 2026, 15:35

Source: Original Post Link

Editorial Comment

There is a specific kind of professional whiplash occurring in engineering departments right now. A developer uses an AI assistant to solve a complex logic puzzle in twenty minutes—a task that used to take an entire afternoon—only to see that code sit in a review queue for three days. This 'local optimization, global stagnation' is the central paradox of the generative AI era in software engineering. As Nicole Forsgren rightly points out, we are essentially increasing the water pressure in a pipe that is already clogged with rust and debris. The result isn't a faster flow; it’s a burst pipe.

Forsgren’s authority here is vital. As the primary architect of the DORA metrics, she spent a decade teaching the industry that speed and stability are not a zero-sum game. Now, she is sounding the alarm on a new form of friction: Organizational Process Debt. This isn't technical debt in the traditional sense of messy code; it is the accumulation of legacy approvals, 'CYA' (Cover Your Assets) meetings, and bureaucratic checkpoints that were designed for a world where code moved at a human pace. When AI accelerates the 'inner loop' of coding but the 'outer loop' of delivery remains tethered to 2015-era workflows, the friction becomes unbearable. It’s not just inefficient; it’s demoralizing. Nothing kills a developer’s 'flow' faster than realizing their high-speed output is hitting a brick wall of administrative silence.

We are currently witnessing the arrival of the 'Code Tsunami.' If a team doubles its code production through AI but maintains the same manual peer-review process, the system will inevitably collapse under its own weight. The industry has spent the last two years obsessing over how to help developers *write* code faster, but we have neglected the much harder task of helping organizations *ingest* that code. Forsgren’s suggestion is pragmatic: we must stop viewing AI solely as a 'writing' tool and start deploying it as a 'reviewing' and 'testing' tool. If the speed of 'checking' does not keep pace with the speed of 'creating,' little of the extra output ever reaches the business as value.

Furthermore, leadership teams are currently falling into a dangerous 'vanity metric' trap. Reporting 100% adoption of AI tools to the board looks great on a slide deck, but as Forsgren notes, you can have total adoption with zero improvement in delivery. Measuring 'seats' or 'lines of code' is a management failure. These metrics incentivize 'noise' rather than 'signal.' Instead, the focus must shift to 'Developer Flow'—a metric that tracks how often a developer is interrupted by slow tools, unnecessary meetings, or broken processes.

Ultimately, the AI transition is exposing the structural weaknesses of the modern enterprise. In the manual era, we could blame slow delivery on the difficulty of the craft. In the AI era, that excuse is gone. If the code is ready in an hour but the deployment takes a week, the problem is no longer a technical one—it is a leadership one. The CTOs who win in 2026 won't be the ones who bought the most AI licenses; they will be the ones who had the courage to clear the 'process debt' and let their developers actually ship the code they’ve so efficiently written.